What an incredible week we’ve already had at re:Invent 2023! If you haven’t checked them out already, I encourage you to read our team’s blog posts covering Monday Night Live with Peter DeSantis and Tuesday’s keynote from Adam Selipsky.

Today we heard Dr. Swami Sivasubramanian’s keynote address at re:Invent 2023. Dr. Sivasubramanian is the Vice President of Data and AI at AWS. Now more than ever, with the recent proliferation of generative AI services and offerings, this space is ripe for innovation and new service releases. Let’s see what this year has in store!

Swami began his keynote by outlining how over 200 years of technological innovation and progress in the fields of mathematical computation, new architectures and algorithms, and new programming languages have led us to this current inflection point with generative AI. He challenged everyone to look at the opportunities that generative AI presents in terms of intelligence augmentation. By combining data with generative AI, together in a symbiotic relationship with human beings, we can accelerate new innovations and unleash our creativity.

Each of today’s announcements can be viewed through the lens of one or more of the core elements of this symbiotic relationship between data, generative AI, and humans. To that end, Swami provided a list of the following essentials for building a generative AI application:

- Access to a variety of foundation models

- Private environment to leverage your data

- Easy-to-use tools to build and deploy applications

- Purpose-built ML infrastructure

In this post, I will be highlighting the main announcements from Swami’s keynote, including:

- Support for Anthropic’s Claude 2.1 foundation model in Amazon Bedrock

- Amazon Titan Multimodal Embeddings, Text models, and Image Generator now available in Amazon Bedrock

- Amazon SageMaker HyperPod

- Vector engine for Amazon OpenSearch Serverless

- Vector search for Amazon DocumentDB (with MongoDB compatibility) and Amazon MemoryDB for Redis

- Amazon Neptune Analytics

- Amazon OpenSearch Service zero-ETL integration with Amazon S3

- AWS Clean Rooms ML

- New AI capabilities in Amazon Redshift

- Amazon Q generative SQL in Amazon Redshift

- Amazon Q data integration in AWS Glue

- Model Evaluation on Amazon Bedrock

Let’s begin by discussing some of the new foundation models now available in Amazon Bedrock!

Anthropic Claude 2.1

Just last week, Anthropic announced the release of its latest model, Claude 2.1. Today, this model is available within Amazon Bedrock. It offers significant benefits over prior versions of Claude, including:

- A 200,000 token context window

- A 2x reduction in the model hallucination rate

- A 25% reduction in the cost of prompts and completions on Bedrock

These enhancements help to boost the reliability and trustworthiness of generative AI applications built on Bedrock. Swami also noted how having access to a variety of foundation models (FMs) is vital and that “no one model will rule them all.” To that end, Bedrock offers support for a broad range of FMs, including Meta’s Llama 2 70B, which was also announced today.

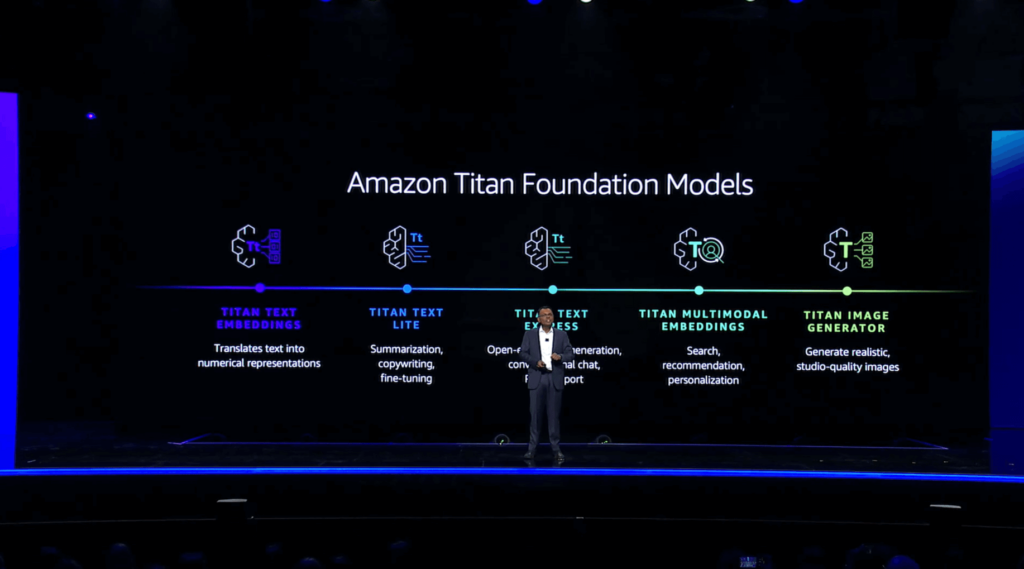

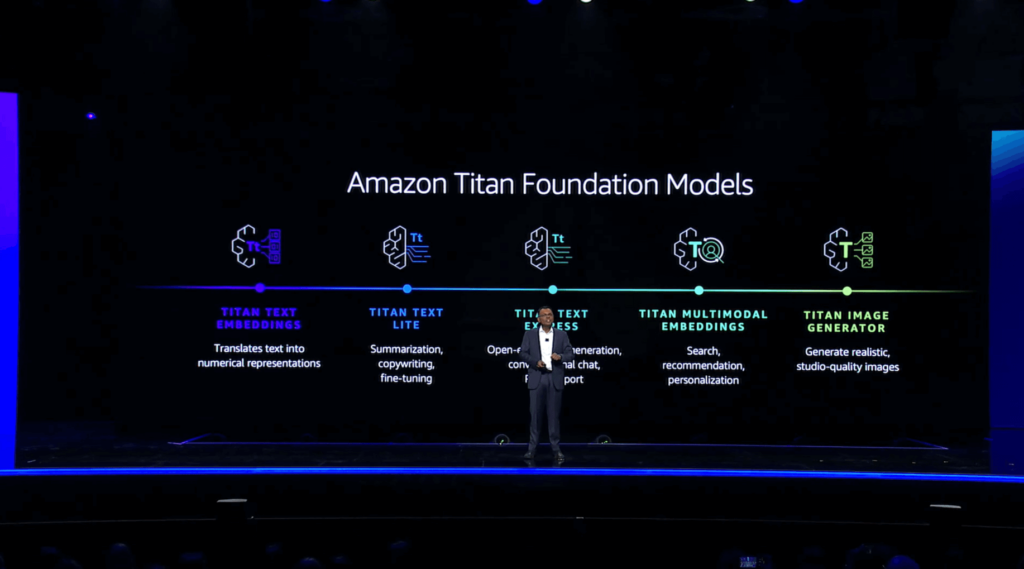

Amazon Titan Multimodal Embeddings, Text models, and Image Generator now available in Amazon Bedrock

Swami introduced the concept of vector embeddings, which are numerical representations of text. These embeddings are critical when customizing and enhancing generative AI applications with things like multimodal search, which could involve a text-based query along with uploaded images, video, or audio. To that end, he introduced Amazon Titan Multimodal Embeddings, which can accept text, images, or a combination of both to provide search, recommendation, and personalization capabilities within generative AI applications. He then demonstrated an example application that leverages multimodal search to assist customers in finding the necessary tools and resources to complete a household remodeling project based on a user’s text input and image-based design choices.
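
To make the idea of embedding-based search more concrete, here is a minimal sketch in Python. It ranks a few catalog items by cosine similarity to a query vector. The three-dimensional vectors and item names are invented for illustration; real embeddings have far more dimensions, and this sketch does not call Bedrock or any Titan model.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query, catalog):
    """Sort catalog item names from most to least similar to the query."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in catalog.items()]
    return [name for _, name in sorted(scored, reverse=True)]


# Hypothetical embeddings: toy values, not output from any real model.
catalog = {
    "cordless drill": [0.9, 0.1, 0.0],
    "paint roller": [0.1, 0.9, 0.2],
    "tile cutter": [0.7, 0.2, 0.6],
}
query = [0.8, 0.1, 0.1]  # stands in for an embedded user request

ranking = rank_by_similarity(query, catalog)
```

With these toy values, the item whose vector points in nearly the same direction as the query ranks first. A multimodal model would place text and images in the same vector space, so the same comparison could apply across both.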

He also announced the general availability of Amazon Titan Text Lite and Amazon Titan Text Express. Titan Text Lite is useful for performing tasks like summarizing text and copywriting, while Titan Text Express can be used for open-ended text generation and conversational chat. Titan Text Express also supports retrieval-augmented generation, or RAG, which is useful when you want responses grounded in your organization’s data.

He then introduced Titan Image Generator and showed how it can be used to both generate new images from scratch and edit existing images based on natural language prompts. Titan Image Generator also supports the responsible use of AI by embedding an invisible watermark within every image it generates, indicating that the image was generated by AI.

Amazon SageMaker HyperPod

Swami then moved on to a discussion about the complexities and challenges faced by organizations when training their own FMs. These include needing to break up large datasets into chunks that are then spread across nodes within a training cluster. It’s also necessary to implement checkpoints along the way to guard against data loss from a node failure, adding further delays to an already time- and resource-intensive process. SageMaker HyperPod addresses these challenges by allowing you to split your training data and model across resilient nodes, so you can train FMs for months at a time while taking full advantage of your cluster’s compute and network infrastructure. This can reduce the time required to train models by up to 40%.
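
The checkpointing idea can be illustrated with a small Python sketch. It is a toy loop, not how SageMaker HyperPod works internally: the `train` function, the JSON checkpoint file, and the simulated failure are all assumptions made for the example.

```python
import json
import os
import tempfile


def train(total_steps, checkpoint_path, checkpoint_every=10, fail_at=None):
    """Run a toy training loop that resumes from the last checkpoint if one exists."""
    state = {"step": 0, "weight": 0.0}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            state = json.load(f)  # resume instead of starting over

    while state["step"] < total_steps:
        if fail_at is not None and state["step"] == fail_at:
            raise RuntimeError("simulated node failure")
        state["weight"] += 0.5  # stand-in for one real optimization step
        state["step"] += 1
        if state["step"] % checkpoint_every == 0:
            with open(checkpoint_path, "w") as f:
                json.dump(state, f)
    return state


checkpoint_path = os.path.join(tempfile.mkdtemp(), "checkpoint.json")

try:
    train(total_steps=50, checkpoint_path=checkpoint_path, fail_at=25)
except RuntimeError:
    pass  # the "node" failed at step 25; the last checkpoint was written at step 20

# The second run picks up from step 20 rather than step 0.
final_state = train(total_steps=50, checkpoint_path=checkpoint_path)
```

The work done between the last checkpoint and the failure (steps 21 to 25 here) is lost and repeated, which is the overhead the paragraph above describes.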

Vector engine for Amazon OpenSearch Serverless

Returning to the subject of vectors, Swami explained the need for a strong data foundation that’s comprehensive, integrated, and governed when building generative AI applications. In support of this effort, AWS has developed a set of services for your organization’s data foundation that includes investments in storing vectors and data together in an integrated fashion. This allows you to use familiar tools, avoid additional licensing and management requirements, provide a faster experience to end users, and reduce the need for data movement and synchronization. AWS is investing heavily in enabling vector search across all of its services. The first announcement related to this investment is the general availability of the vector engine for Amazon OpenSearch Serverless, which allows you to store and query embeddings directly alongside your business data, enabling more relevant similarity searches while also providing a 20x improvement in queries per second, all without needing to worry about maintaining a separate underlying vector database.

Vector search for Amazon DocumentDB (with MongoDB compatibility) and Amazon MemoryDB for Redis

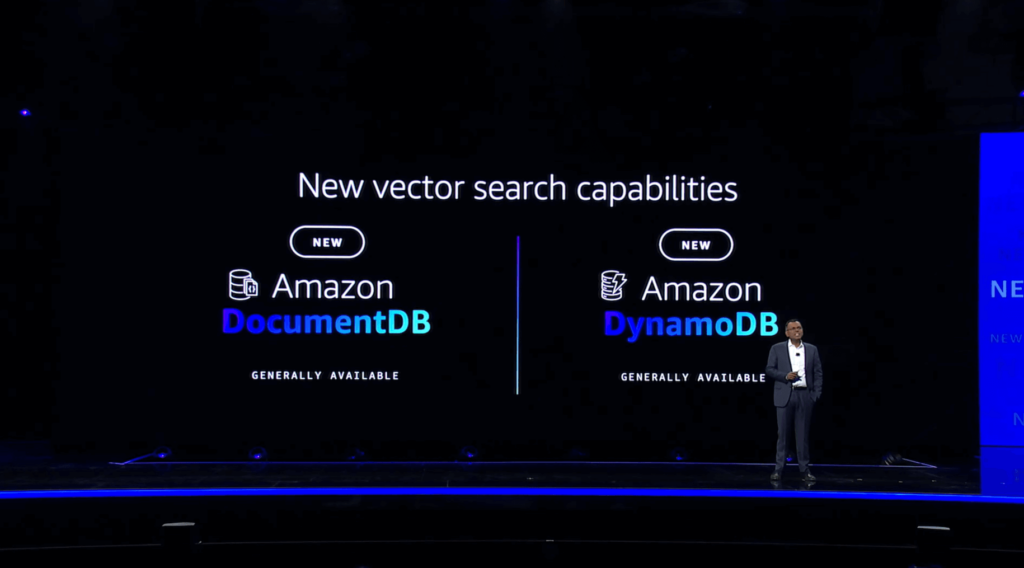

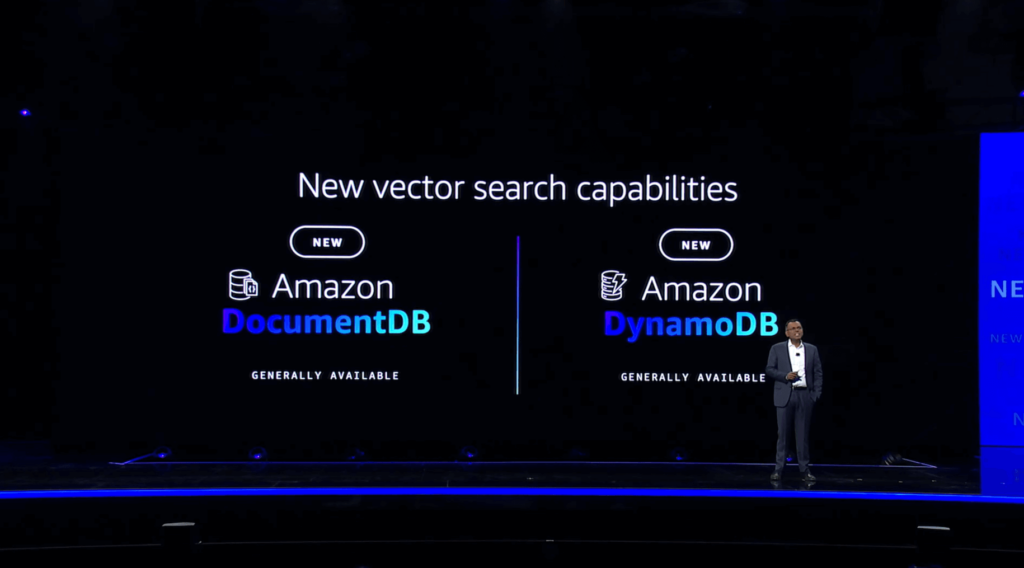

Vector search capabilities were also announced for Amazon DocumentDB (with MongoDB compatibility) and Amazon MemoryDB for Redis, joining the existing offering of vector search within DynamoDB. These vector search offerings all provide support for both high throughput and high recall, with millisecond response times even at concurrency rates of tens of thousands of queries per second. This level of performance is especially important within applications involving fraud detection or interactive chatbots, where any degree of delay may be costly.

Amazon Neptune Analytics

Staying within the realm of AWS database services, the next announcement centered around Amazon Neptune, a graph database that allows you to represent relationships and connections between data entities. Today’s announcement of the general availability of Amazon Neptune Analytics makes it faster and easier for data scientists to analyze large volumes of data stored within Neptune. Much like the other vector search capabilities mentioned above, Neptune Analytics enables faster vector searching by storing your graph and vector data together. This allows you to find and unlock insights within your graph data up to 80x faster than with existing AWS solutions by analyzing tens of billions of connections within seconds using built-in graph algorithms.

Amazon OpenSearch Service zero-ETL integration with Amazon S3

In addition to enabling vector search across AWS database services, Swami also outlined AWS’ commitment to a “zero-ETL” future, without the need for complicated and expensive extract, transform, and load (ETL) pipeline development. AWS has already announced a number of new zero-ETL integrations this week, including Amazon DynamoDB zero-ETL integration with Amazon OpenSearch Service and various zero-ETL integrations with Amazon Redshift. Today, Swami announced another new zero-ETL integration, this time between Amazon OpenSearch Service and Amazon S3. Now available in preview, this integration allows you to seamlessly search, analyze, and visualize your operational data stored in S3, such as VPC Flow Logs and Elastic Load Balancing logs, as well as S3-based data lakes. You’ll also be able to leverage OpenSearch’s out-of-the-box dashboards and visualizations.

AWS Clean Rooms ML

Swami went on to discuss AWS Clean Rooms, which was introduced earlier this year and allows AWS customers to securely collaborate with partners in “clean rooms” that don’t require you to copy or share any of your underlying raw data. Today, AWS announced a preview release of AWS Clean Rooms ML, extending the clean rooms paradigm to include collaboration on machine learning models through the use of AWS-managed lookalike models. This allows you to train your own custom models and work with partners without needing to share any of your own raw data. AWS also plans to release a healthcare model for use within Clean Rooms ML within the next few months.

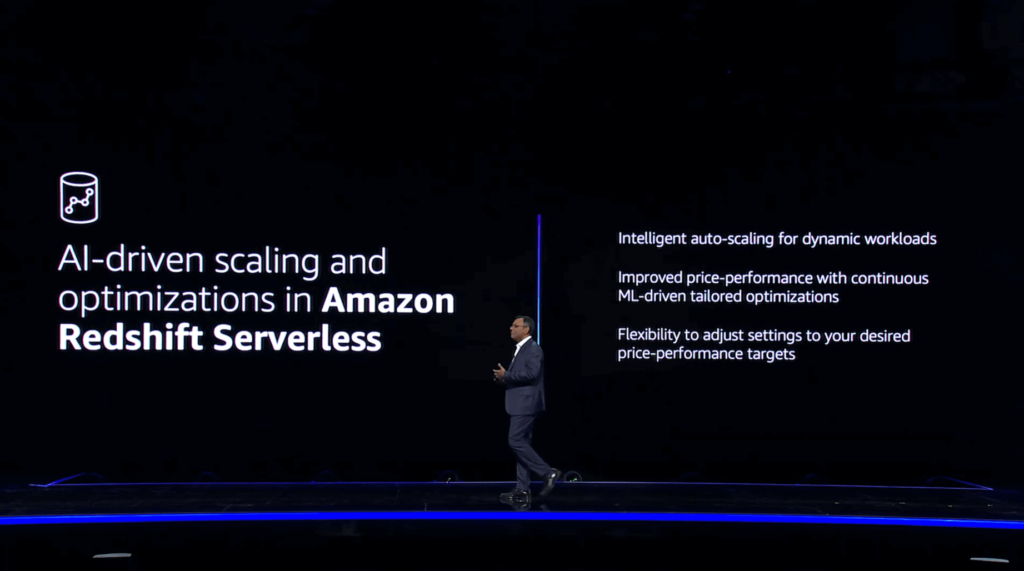

New AI capabilities in Amazon Redshift

The next two announcements both involve Amazon Redshift, beginning with some AI-driven scaling and optimizations in Amazon Redshift Serverless. These enhancements include intelligent auto-scaling for dynamic workloads, which offers proactive scaling based on usage patterns that include the complexity and frequency of your queries along with the size of your data sets. This allows you to focus on deriving important insights from your data rather than worrying about performance tuning your data warehouse. You can set price-performance targets and take advantage of ML-driven tailored optimizations that can do everything from adjusting your compute to modifying the underlying schema of your database, allowing you to optimize for cost, performance, or a balance between the two based on your requirements.

Amazon Q generative SQL in Amazon Redshift

The next Redshift announcement is definitely one of my favorites. Following yesterday’s announcements about Amazon Q, Amazon’s new generative AI-powered assistant that can be tailored to your specific business needs and data, today we learned about Amazon Q generative SQL in Amazon Redshift. Much like the “natural language to code” capabilities of Amazon Q that were unveiled yesterday with Amazon Q Code Transformation, Amazon Q generative SQL in Amazon Redshift allows you to write natural language queries against data that’s stored in Redshift. Amazon Q uses contextual information about your database, its schema, and any query history against your database to generate the necessary SQL queries based on your request. You can even configure Amazon Q to leverage the query history of other users within your AWS account when generating SQL. You can also ask questions of your data, such as “what was the top selling item in October” or “show me the 5 highest rated products in our catalog,” without needing to understand your underlying table structure, schema, or any complicated SQL syntax.
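
As a rough illustration of what a natural language question might translate to, the sketch below runs a hand-written SQL query for “what was the top selling item in October.” The `sales` table, its columns, and its rows are hypothetical, the query is not actual Amazon Q output, and it runs against an in-memory SQLite database through Python’s standard library rather than against Redshift.

```python
import sqlite3

# Hypothetical schema and rows; a real Redshift table will look different.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (item TEXT, sale_date TEXT, quantity INTEGER)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("widget", "2023-10-03", 40),
        ("gadget", "2023-10-12", 75),
        ("widget", "2023-10-20", 50),
        ("gadget", "2023-11-02", 90),  # outside October, so excluded
    ],
)

# The kind of SQL a request such as
# "what was the top selling item in October" could translate to.
top_item_sql = """
    SELECT item, SUM(quantity) AS units_sold
    FROM sales
    WHERE sale_date >= '2023-10-01' AND sale_date < '2023-11-01'
    GROUP BY item
    ORDER BY units_sold DESC
    LIMIT 1
"""
top_item = conn.execute(top_item_sql).fetchone()
```

Even this small query requires knowing the table name, the date column, and how to aggregate, which is the knowledge the feature aims to spare you from needing.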

Amazon Q data integration in AWS Glue

One additional Amazon Q-related announcement involved an upcoming data integration in AWS Glue. This promising feature will simplify the process of constructing custom ETL pipelines in scenarios where AWS doesn’t yet offer a zero-ETL integration, leveraging agents for Amazon Bedrock to break down a natural language prompt into a series of tasks. For instance, you could ask Amazon Q to “write a Glue ETL job that reads data from S3, removes all null records, and loads the data into Redshift” and it will handle the rest for you automatically.
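
The “removes all null records” step in that prompt can be sketched in plain Python. This is not Glue code and not what Amazon Q would generate: the `drop_null_records` helper and the sample CSV are hypothetical, and the read from S3 and the load into Redshift are represented only by an in-memory string and a comment.

```python
import csv
import io


def drop_null_records(rows):
    """Keep only rows in which every field has a non-empty value."""
    return [row for row in rows if all(value not in (None, "") for value in row.values())]


# Hypothetical input standing in for a CSV object read from S3.
raw_csv = "order_id,customer,total\n1,alice,30.00\n2,,12.50\n3,carol,\n4,dave,8.25\n"

rows = list(csv.DictReader(io.StringIO(raw_csv)))
clean_rows = drop_null_records(rows)
# In a real job, clean_rows would be written to the target warehouse here.
```

Here a record counts as null if any field is empty. A real pipeline would need to decide whether that rule, or a per-column rule, matches the intent of the prompt.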

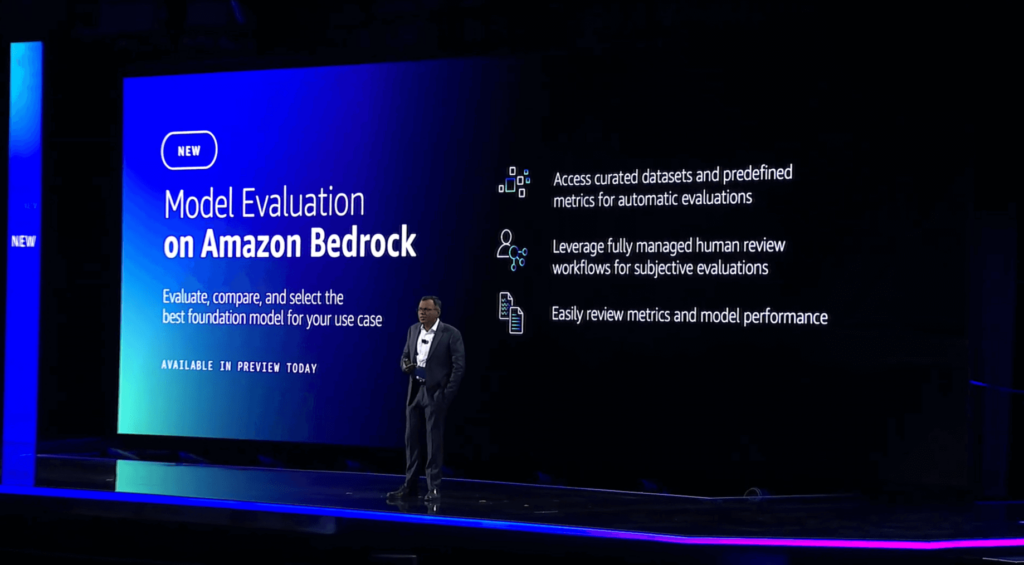

Model Evaluation on Amazon Bedrock

Swami’s final announcement circled back to the variety of foundation models that are available within Amazon Bedrock and his earlier assertion that “no one model will rule them all.” Because of this, model evaluations are an important tool that should be performed frequently by generative AI application developers. Today’s preview release of Model Evaluation on Amazon Bedrock allows you to evaluate, compare, and select the best FM for your use case. You can choose to use automated evaluation based on metrics such as accuracy and toxicity, or human evaluation for things like style and appropriate “brand voice.” Once an evaluation job is complete, Model Evaluation will produce a model evaluation report that contains a summary of metrics detailing the model’s performance.
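
A simplified view of automated evaluation is sketched below in Python: score each candidate model’s answers against reference answers and pick the highest scorer. The model names, the data, and the exact-match accuracy metric are assumptions for illustration and do not reflect the Bedrock feature’s API or report format.

```python
def accuracy(predictions, references):
    """Fraction of predictions that exactly match the reference answers."""
    matches = sum(p == r for p, r in zip(predictions, references))
    return matches / len(references)


# Hypothetical evaluation set and model outputs; the model names are placeholders.
references = ["paris", "4", "blue", "h2o"]
model_outputs = {
    "model-a": ["paris", "4", "green", "h2o"],
    "model-b": ["paris", "5", "green", "h2o"],
}

# A tiny "report": one accuracy score per candidate model.
report = {name: accuracy(outputs, references) for name, outputs in model_outputs.items()}
best_model = max(report, key=report.get)
```

Exact-match accuracy only suits tasks with a single correct answer. Qualities such as style or brand voice are where the human evaluation option described above comes in.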

Swami concluded his keynote by addressing the human element of generative AI and reaffirming his belief that generative AI applications will accelerate human productivity. After all, it’s humans who must provide the essential inputs necessary for generative AI applications to be useful and relevant. The symbiotic relationship between data, generative AI, and humans creates longevity, with collaboration strengthening each element over time. He closed by asserting that humans can leverage data and generative AI to “create a flywheel of success.” With the coming generative AI revolution, human soft skills such as creativity, ethics, and adaptability will be more important than ever. According to a World Economic Forum survey, nearly 75% of companies will adopt generative AI by the year 2027. While generative AI may eliminate the need for some roles, countless new roles and opportunities will no doubt emerge in the years to come.

I entered today’s keynote full of excitement and anticipation, and as usual, Swami didn’t disappoint. I’ve been thoroughly impressed by the breadth and depth of announcements and new feature releases already this week, and it’s only Wednesday! Keep an eye on our blog for more exciting keynote announcements from re:Invent 2023!