Cloudera DataFlow for the Public Cloud (CDF-PC) is a complete self-service streaming data capture and movement platform based on Apache NiFi. It allows developers to interactively design data flows in a drag-and-drop designer. Those flows can be deployed either as continuously running, auto-scaling flow deployments or as event-driven serverless functions. CDF-PC comes with an out-of-the-box monitoring dashboard for data flow health and performance. Key performance indicators (KPIs) and associated alerts help customers monitor what matters for their use cases.

Many organizations have invested in central monitoring and observability tools such as Prometheus and Grafana. They are looking for ways to integrate key data flow metrics into their existing architecture.

In this blog we will dive into how CDF-PC's support for NiFi reporting tasks can be used to monitor key metrics in Prometheus and Grafana.

Target architecture: connecting the pieces

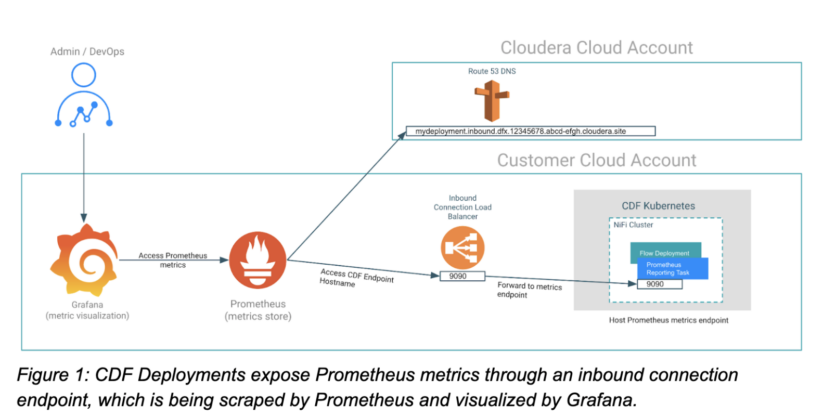

The key to insightful Grafana dashboards is access to the relevant application metrics. In our case, these are the NiFi metrics of our flow deployment. Before we can build dashboards in Grafana, we therefore need to expose NiFi metrics so that Prometheus can scrape them. CDF-PC's support for Prometheus reporting tasks and inbound connections allows Prometheus to scrape metrics in real time. Once the metrics are in Prometheus, it is straightforward to query them and build dashboards on top of them in Grafana. So let's take a closer look at how to implement the target architecture shown in Figure 1. This takes us from having metrics in our flow deployment to a fully featured Grafana dashboard.

Configuring a CDF deployment to be scraped by Prometheus

Starting with CDF-PC 2.6.1, you can programmatically create NiFi reporting tasks that make relevant metrics available to various third-party monitoring systems. The Prometheus reporting task we'll use in this example creates an HTTP(S) metrics endpoint. Prometheus agents or servers can scrape that endpoint. To use this reporting task in a CDF-PC deployment, we have to complete the following steps:

- Make sure that the HTTP(S) metrics endpoint is reachable from Prometheus. Do this by configuring an inbound connections endpoint when creating the deployment.

- Create and configure the Prometheus reporting task using the CDP CLI once the deployment has been created successfully.

Creating a deployment with an inbound connections endpoint

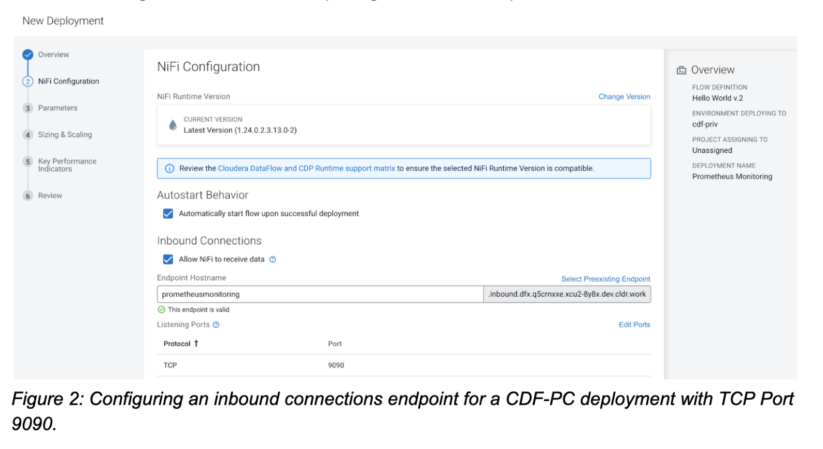

When creating a deployment, CDF-PC gives you the option to let NiFi receive data by configuring an inbound connections endpoint. When the option is checked, CDF-PC suggests an endpoint hostname that you can customize as needed.

The inbound connections endpoint lets external applications send data to a deployment. In our case, it lets Prometheus connect to the deployment to scrape its metrics. In addition to the endpoint hostname, we also have to provide at least one port that we want to expose. In our case we're using port 9090 and exposing it with the TCP protocol.
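Before wiring up Prometheus, it can help to confirm that the exposed port is reachable from the machine Prometheus runs on. The following is a minimal Python sketch that uses only the standard library. The `is_port_reachable` helper and the hostname in the usage note are illustrative assumptions. A successful TCP connect only shows that something accepts connections on the port, not that metrics are being served, because the reporting task is only created in a later step.

```python
import socket


def is_port_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened within timeout seconds."""
    try:
        # create_connection resolves the hostname and tries each resolved address in turn.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS resolution failures, refused connections, and timeouts.
        return False
```

For example, `is_port_reachable("my-deployment.inbound.example.com", 9090)` checks the port configured above. The hostname is hypothetical, so copy your own from the deployment manager.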

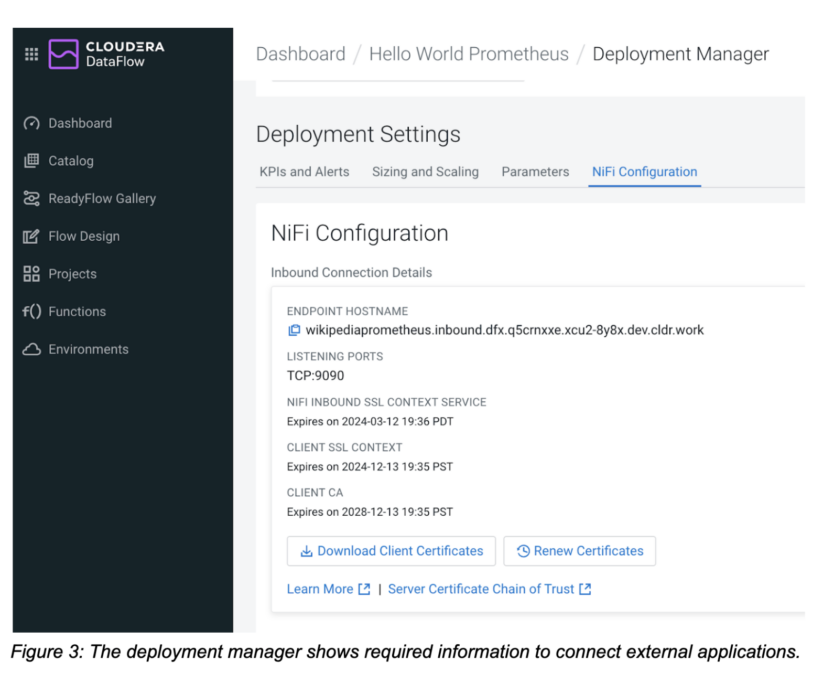

After you have created your deployment with an inbound connections endpoint, navigate to the NiFi Configuration tab in the Deployment Manager. There you will see all the information needed to connect external applications to the deployment. With the deployment in place, we can move on to the next step: creating the reporting task.

Creating and configuring the NiFi Prometheus reporting task

We can now use the CDP CLI to create and configure the Prometheus reporting task. Download and configure the CDP CLI. Run `cdp --version` to make sure you are on version 0.9.101 or later.
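If a CI/CD pipeline runs this setup, it can fail early on an outdated CLI by comparing the reported version against 0.9.101. The helper below is hypothetical. It assumes that `cdp --version` prints a dotted version number somewhere in its output, so adjust the parsing if your CLI output looks different. The versions are compared numerically, because a plain string comparison would wrongly rank `0.9.99` above `0.9.101`.

```python
import re
import subprocess

MIN_CDP_CLI_VERSION = (0, 9, 101)


def parse_version(text: str) -> tuple[int, ...]:
    """Extract the first dotted version number (e.g. '0.9.101') from text as a tuple of ints."""
    match = re.search(r"\d+(?:\.\d+)+", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def cli_is_recent_enough(version_output: str) -> bool:
    """Tuple comparison is numeric per component, so (0, 9, 101) > (0, 9, 99)."""
    return parse_version(version_output) >= MIN_CDP_CLI_VERSION


def installed_cli_version_output() -> str:
    """Run `cdp --version`; stdout and stderr are combined in case the version goes to stderr."""
    result = subprocess.run(["cdp", "--version"], capture_output=True, text=True, check=True)
    return result.stdout + result.stderr
```

In a pipeline step this becomes `assert cli_is_recent_enough(installed_cli_version_output())`.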

The command we're going to use is `cdp dfworkload create-reporting-task`. It requires three inputs: the deployment CRN, the environment CRN, and a JSON definition of the reporting task that we want to create. Copy the deployment CRN from the Deployment Manager and get the environment CRN for the relevant CDP environment. Then start constructing the command:

```shell
cdp dfworkload create-reporting-task \
  --deployment-crn crn:cdp:df:us-west-1:9d74eee4-1cad-45d7-b645-7ccf9edbb73d:deployment:eb2717f3-1bdf-4150-bd33-5b15d715bc7d/5cdc4d43-2991-4d4c-99fc-c400cd15853d \
  --environment-crn crn:cdp:environments:us-west-1:9d74eee4-1cad-45d7-b645-7ccf9edbb73d:environment:bf58748f-7ef4-477a-9c63-448b51e5c98f
```

The missing piece is the definition of the reporting task we want to create. Every supported reporting task can be passed in as a JSON configuration file. Here's the JSON configuration for our Prometheus reporting task. It starts with a Prometheus-specific properties section. That section is followed by generic reporting task settings: whether the task should be started, how often it should run, and how it should be scheduled.

```json
{
  "name": "PrometheusReportingTask",
  "type": "org.apache.nifi.reporting.prometheus.PrometheusReportingTask",
  "properties": {
    "prometheus-reporting-task-metrics-endpoint-port": "9090",
    "prometheus-reporting-task-metrics-strategy": "All Components",
    "prometheus-reporting-task-instance-id": "${hostname(true)}",
    "prometheus-reporting-task-client-auth": "No Authentication",
    "prometheus-reporting-task-metrics-send-jvm": "false"
  },
  "propertyDescriptors": {},
  "scheduledState": "RUNNING",
  "schedulingPeriod": "60 sec",
  "schedulingStrategy": "TIMER_DRIVEN",
  "componentType": "REPORTING_TASK"
}
```

| Configuration property | Description |
|---|---|
| prometheus-reporting-task-metrics-endpoint-port | The port that this reporting task uses to expose metrics. It must match the port you specified earlier when configuring the inbound connections endpoint. |
| prometheus-reporting-task-metrics-strategy | The granularity at which metrics are reported. Supported values are "All Components," "Root Process Group," and "All Process Groups." Use this to limit metrics as needed. |
| prometheus-reporting-task-instance-id | The ID that is sent along with the metrics. You can use this property to identify your deployments in Prometheus. |
| prometheus-reporting-task-client-auth | Whether the endpoint requires client authentication. Supported values are "No Authentication," "Want Authentication," and "Need Authentication." |
| prometheus-reporting-task-metrics-send-jvm | Whether JVM metrics are also exposed. Supported values are "true" and "false." |

Table 1: Prometheus configuration properties of the NiFi Prometheus reporting task.

You can either pass the JSON content directly to the CLI command or reference a file. Let's assume we save the JSON content above in a file called prometheus_reporting_task.json.
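If you generate this file in a pipeline rather than by hand, a small script can build the same JSON. It can also reject property values that are not listed in Table 1. This Python sketch reproduces the definition above. The function names, and the choice of which fields to parameterize, are illustrative assumptions and not part of the CDP CLI.

```python
import json
from pathlib import Path

# Allowed values for the enumerated properties, as listed in Table 1.
ALLOWED_VALUES = {
    "prometheus-reporting-task-metrics-strategy": {
        "All Components", "Root Process Group", "All Process Groups",
    },
    "prometheus-reporting-task-client-auth": {
        "No Authentication", "Want Authentication", "Need Authentication",
    },
    "prometheus-reporting-task-metrics-send-jvm": {"true", "false"},
}


def build_reporting_task(port: int = 9090,
                         strategy: str = "All Components",
                         client_auth: str = "No Authentication",
                         send_jvm: bool = False,
                         scheduling_period: str = "60 sec") -> dict:
    """Build the Prometheus reporting task definition and validate it against Table 1."""
    if not 1 <= port <= 65535:
        raise ValueError(f"invalid port: {port}")
    properties = {
        # Must match the port exposed by the inbound connections endpoint.
        "prometheus-reporting-task-metrics-endpoint-port": str(port),
        "prometheus-reporting-task-metrics-strategy": strategy,
        # A literal NiFi Expression Language string; NiFi evaluates it, not Python.
        "prometheus-reporting-task-instance-id": "${hostname(true)}",
        "prometheus-reporting-task-client-auth": client_auth,
        "prometheus-reporting-task-metrics-send-jvm": "true" if send_jvm else "false",
    }
    for key, allowed in ALLOWED_VALUES.items():
        if properties[key] not in allowed:
            raise ValueError(f"{key}={properties[key]!r} is not one of {sorted(allowed)}")
    return {
        "name": "PrometheusReportingTask",
        "type": "org.apache.nifi.reporting.prometheus.PrometheusReportingTask",
        "properties": properties,
        "propertyDescriptors": {},
        "scheduledState": "RUNNING",
        "schedulingPeriod": scheduling_period,
        "schedulingStrategy": "TIMER_DRIVEN",
        "componentType": "REPORTING_TASK",
    }


def write_reporting_task(path: str = "prometheus_reporting_task.json", **kwargs) -> Path:
    """Write the validated definition to the file referenced by --file-path."""
    out = Path(path)
    out.write_text(json.dumps(build_reporting_task(**kwargs), indent=2))
    return out
```

Calling `write_reporting_task()` with no arguments produces the same definition shown above.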

Now we can assemble the final CLI command that creates the reporting task:

```shell
cdp dfworkload create-reporting-task \
  --deployment-crn crn:cdp:df:us-west-1:9d74eee4-1cad-45d7-b645-7ccf9edbb73d:deployment:eb2717f3-1bdf-4150-bd33-5b15d715bc7d/5cdc4d43-2991-4d4c-99fc-c400cd15853d \
  --environment-crn crn:cdp:environments:us-west-1:9d74eee4-1cad-45d7-b645-7ccf9edbb73d:environment:bf58748f-7ef4-477a-9c63-448b51e5c98f \
  --file-path prometheus_reporting_task.json
```
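To script this step, for example as part of a CI/CD pipeline, you can invoke the same command from Python. The sketch below uses only the three flags shown above. The helper names are hypothetical, and the code assumes that `cdp` is on the PATH and already configured with credentials.

```python
import subprocess


def create_reporting_task_cmd(deployment_crn: str, environment_crn: str,
                              file_path: str = "prometheus_reporting_task.json") -> list[str]:
    """Return the argv for the create-reporting-task command shown above."""
    return [
        "cdp", "dfworkload", "create-reporting-task",
        "--deployment-crn", deployment_crn,
        "--environment-crn", environment_crn,
        "--file-path", file_path,
    ]


def create_reporting_task(deployment_crn: str, environment_crn: str,
                          file_path: str = "prometheus_reporting_task.json") -> str:
    """Run the CLI and return its raw stdout, which holds the JSON response."""
    # Passing an argument list (no shell) avoids quoting problems with the long CRNs.
    result = subprocess.run(
        create_reporting_task_cmd(deployment_crn, environment_crn, file_path),
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

Because `check=True` is set, a non-zero exit code from the CLI raises `subprocess.CalledProcessError`, which makes the pipeline step fail.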

After you run the command, you should get back a response that contains the reporting task CRN:

```json
{ "crn": "crn:cdp:df:us-west-1:9d74eee4-1cad-45d7-b645-7ccf9edbb73d:reportingTask:eb2717f3-1bdf-4150-bd33-5b15d715bc7d/66a746af-018c-1000-0000-00005212b3ea" }
```
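An automated pipeline may want to record which reporting task it created. The hypothetical helper below splits the returned CRN into its parts. The CRN layout is inferred from the sample response above rather than from a published specification. In that sample, the first segment of the resource ID (`eb2717f3-…`) matches the deployment CRN used in the command.

```python
import json


def parse_reporting_task_crn(response_json: str) -> dict:
    """Split the CRN returned by create-reporting-task into its components.

    Assumed shape, based on the sample response:
    crn:cdp:<service>:<region>:<account-id>:reportingTask:<deployment-id>/<reporting-task-id>
    """
    crn = json.loads(response_json)["crn"]
    _, _, service, region, account_id, resource = crn.split(":", 5)
    resource_type, resource_id = resource.split(":", 1)
    deployment_id, task_id = resource_id.split("/", 1)
    return {
        "crn": crn,
        "service": service,
        "region": region,
        "account_id": account_id,
        "resource_type": resource_type,
        "deployment_id": deployment_id,
        "reporting_task_id": task_id,
    }
```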

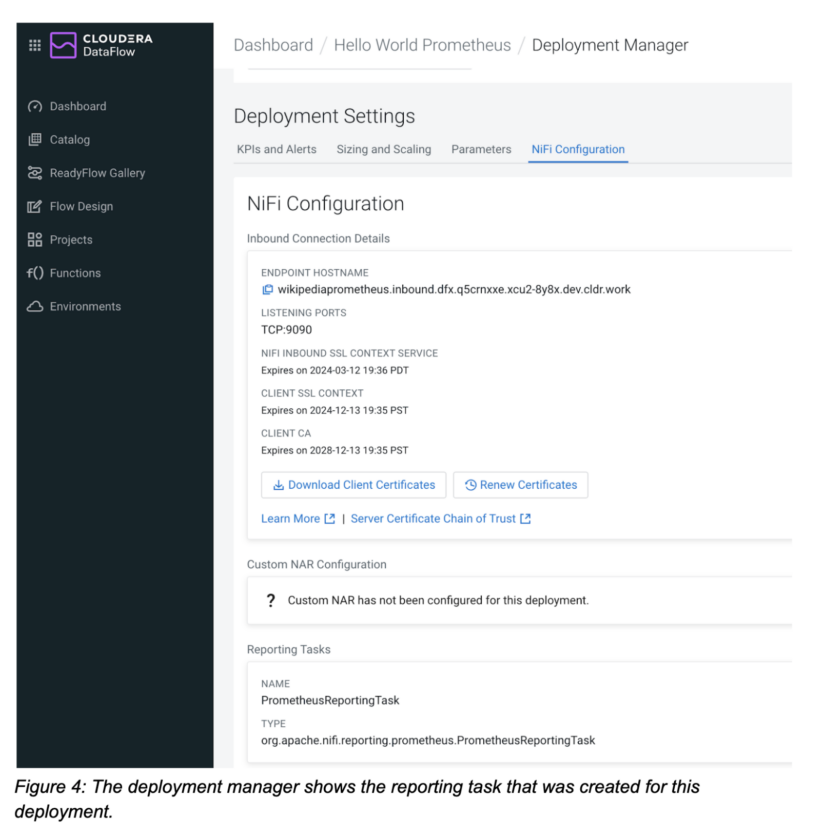

To confirm that the reporting task was created, navigate to the NiFi Configuration tab in the Deployment Manager. Verify that the Reporting Tasks section lists the reporting tasks you created with the CLI.

Now that our flow deployment and reporting task have been created, we can move on to the next step: configuring the Prometheus server to scrape this deployment.

Configuring Prometheus to monitor a CDF deployment

To define a new scrape target for Prometheus, we need to edit the Prometheus configuration file. Open the configuration file (prometheus.yml in a default installation) to add the CDF deployment as a target.

Create a new job, for example one named "CDF Deployment". Next, copy the endpoint hostname of your CDF deployment from the Deployment Manager and add it as a new target.

```yaml
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: "CDF Deployment"
    # metrics_path defaults to '/metrics'
    # scheme defaults to 'http'.
    static_configs:
      - targets: ["wikipediaprometheus.inbound.dfx.q5crnxxe.xcu2-8y8x.dev.cldr.work:9090"]
```
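When every new deployment should be monitored automatically, you can generate this block instead of editing it by hand. This Python sketch renders the same structure from a list of endpoint hostnames. The function name is illustrative. It uses JSON-style quoting, which is also valid YAML, to avoid depending on a third-party YAML library.

```python
import json


def scrape_config_yaml(job_name: str, hostnames: list[str], port: int = 9090) -> str:
    """Render a scrape_configs block like the one above for one or more deployments.

    json.dumps yields double-quoted strings and a [...] flow sequence,
    both of which are valid YAML.
    """
    targets = json.dumps([f"{host}:{port}" for host in hostnames])
    lines = [
        "scrape_configs:",
        f"  - job_name: {json.dumps(job_name)}",
        "    # metrics_path defaults to '/metrics'; scheme defaults to 'http'.",
        "    static_configs:",
        f"      - targets: {targets}",
    ]
    return "\n".join(lines) + "\n"
```

Prometheus only picks up edits to its configuration file after a reload. To trigger one, send the process a SIGHUP, or POST to `/-/reload` if the server was started with `--web.enable-lifecycle`. For target lists that change often, Prometheus's file-based service discovery (`file_sd_configs`) is an alternative to static targets.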

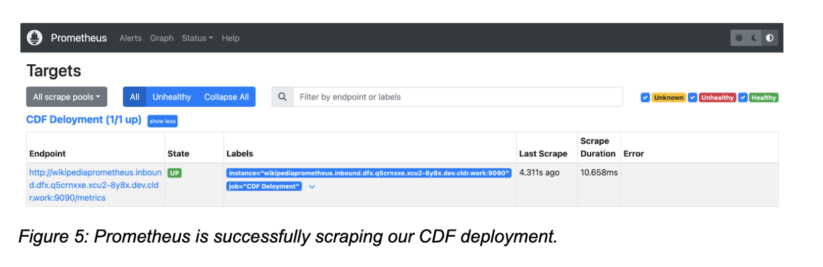

Apply the configuration changes and open the Prometheus web console to confirm that our CDF deployment is being scraped. Go to Status → Targets and verify that your CDF Deployment is "Up."
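Prometheus also reports this target status through its HTTP API at `/api/v1/targets`. That makes it possible to check, inside an automated pipeline, that a freshly deployed flow is actually being scraped. The sketch below assumes an unauthenticated Prometheus server at a URL you supply, and a job named "CDF Deployment" as in the configuration above. The helper names are illustrative.

```python
import json
import urllib.request


def fetch_targets(prometheus_url: str, timeout: float = 10.0) -> dict:
    """GET /api/v1/targets from a Prometheus server and return the decoded JSON."""
    url = f"{prometheus_url.rstrip('/')}/api/v1/targets"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def job_health(targets_response: dict, job_name: str) -> list[tuple[str, str]]:
    """Return (instance, health) pairs for the active targets of one scrape job.

    health is "up", "down" or "unknown", as on the Status -> Targets page.
    """
    return [
        (target["labels"].get("instance", ""), target["health"])
        for target in targets_response["data"]["activeTargets"]
        if target["labels"].get("job") == job_name
    ]
```

For example, `all(h == "up" for _, h in job_health(fetch_targets("http://localhost:9090"), "CDF Deployment"))` returns True once every target of the job is healthy.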

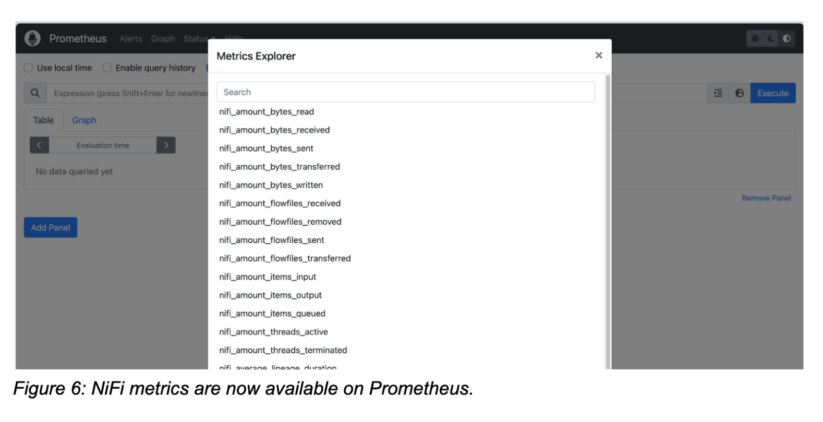

Once Prometheus has started scraping, you can find all NiFi metrics in the metrics explorer and start building your Prometheus queries.
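While exploring, it can be useful to list the metric names the reporting task exposes, reading them straight from its endpoint without going through Prometheus. A minimal Python sketch follows. It assumes the endpoint serves the Prometheus text format under `/metrics`, the path Prometheus scrapes by default, and that client authentication is disabled as in our example. The helper names are hypothetical.

```python
import re
import urllib.request


def fetch_metrics_text(endpoint: str, timeout: float = 10.0) -> str:
    """Read the raw text exposition from the reporting task's metrics endpoint."""
    with urllib.request.urlopen(f"{endpoint.rstrip('/')}/metrics", timeout=timeout) as resp:
        return resp.read().decode("utf-8")


def metric_names(exposition_text: str, prefix: str = "nifi_") -> set[str]:
    """Collect the distinct metric names that start with prefix."""
    names = set()
    for line in exposition_text.splitlines():
        line = line.strip()
        # Skip blank lines and '# HELP' / '# TYPE' comment lines.
        if not line or line.startswith("#"):
            continue
        # A sample line looks like 'name{labels} value [timestamp]', so the
        # metric name ends at the first '{' or whitespace character.
        name = re.split(r"[{\s]", line, maxsplit=1)[0]
        if name.startswith(prefix):
            names.add(name)
    return names
```

Usage: `sorted(metric_names(fetch_metrics_text("http://<endpoint-hostname>:9090")))`.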

Sample Grafana dashboard

Grafana is a popular choice for visualizing Prometheus metrics, and it makes it easy to monitor the key NiFi metrics of our deployment.

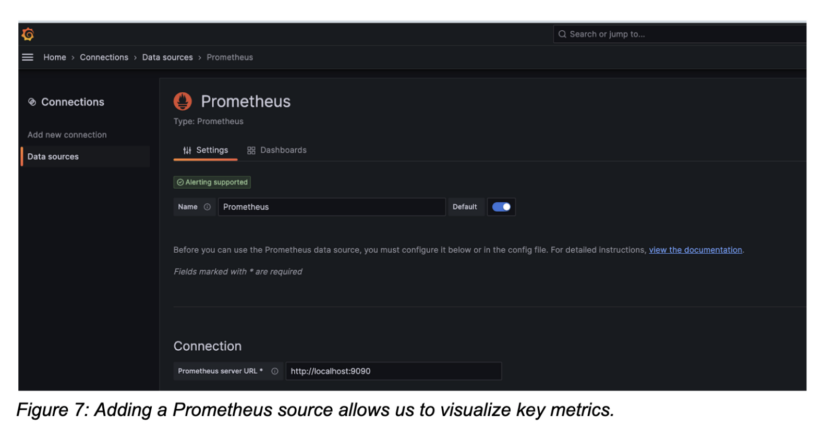

Create a Prometheus data source connection in Grafana to make all metrics and queries available there.
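If you manage Grafana as code, you can create the same data source through Grafana's HTTP API (`POST /api/datasources`). The sketch below only builds the request. It assumes a Grafana service account token that has permission to create data sources. The URLs, the token, and the data source name are placeholders.

```python
import json
import urllib.request


def prometheus_datasource_request(grafana_url: str, api_token: str,
                                  prometheus_url: str,
                                  name: str = "Prometheus") -> urllib.request.Request:
    """Build a POST /api/datasources request that registers Prometheus in Grafana."""
    body = {
        "name": name,
        "type": "prometheus",
        "url": prometheus_url,
        # "proxy": the Grafana backend queries Prometheus on behalf of the browser.
        "access": "proxy",
    }
    return urllib.request.Request(
        f"{grafana_url.rstrip('/')}/api/datasources",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_token}"},
        method="POST",
    )
```

To send the request, pass it to `urllib.request.urlopen(...)`. Grafana rejects a second data source with the same name, so a repeatable pipeline should check for an existing one first.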

Now that Grafana is connected to Prometheus, you can create a new dashboard and add visualizations.

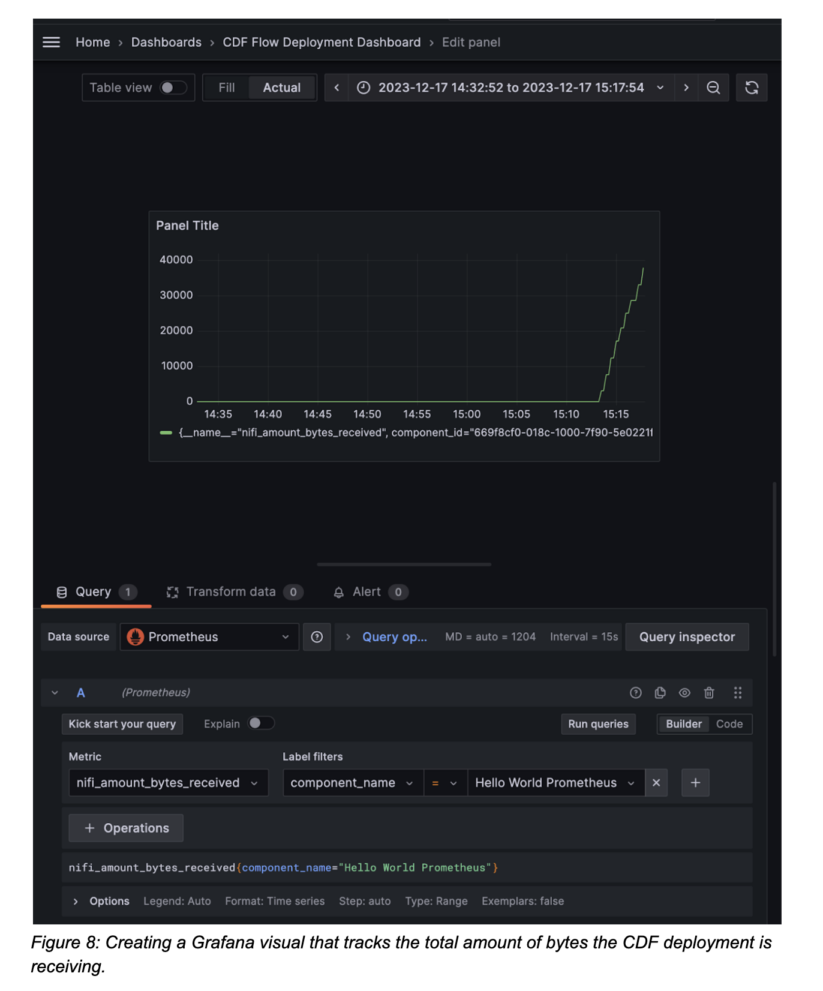

Let's say we want to create a graph showing the data this deployment has received from external sources. Select "Add visualization" on your dashboard and make sure your Prometheus connection is selected as the data source.

Select the nifi_amount_bytes_received metric. Use the label filters to narrow the query down to a specific component in the flow. By filtering on component_name = "Hello World Prometheus", we monitor the bytes received aggregated across the entire process group, and therefore across the whole flow. Alternatively, you can leave the filter out to monitor all components, or filter on individual processors.
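The visualization above corresponds to the PromQL selector `nifi_amount_bytes_received{component_name="Hello World Prometheus"}`. For scripted checks, you can run the same query against the Prometheus HTTP API's `/api/v1/query` endpoint. This is a minimal sketch with illustrative helper names. It assumes an unauthenticated Prometheus server, and "Hello World Prometheus" is simply the process group name used in this example.

```python
import json
import urllib.parse
import urllib.request


def selector(metric: str, **labels: str) -> str:
    """Build a PromQL instant-vector selector such as metric{label="value"}."""
    if not labels:
        return metric
    # json.dumps produces a double-quoted string with quotes and backslashes escaped.
    matchers = ",".join(f"{key}={json.dumps(value)}" for key, value in labels.items())
    return f"{metric}{{{matchers}}}"


def query_url(prometheus_url: str, promql: str) -> str:
    """URL for an instant query against the Prometheus HTTP API."""
    return f"{prometheus_url.rstrip('/')}/api/v1/query?" + urllib.parse.urlencode({"query": promql})


def instant_query(prometheus_url: str, promql: str, timeout: float = 10.0) -> list:
    """Run the query and return the list of result series."""
    with urllib.request.urlopen(query_url(prometheus_url, promql), timeout=timeout) as resp:
        return json.load(resp)["data"]["result"]
```

For example, `instant_query("http://localhost:9090", selector("nifi_amount_bytes_received", component_name="Hello World Prometheus"))` returns one entry per matching series, each with its labels and latest value.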

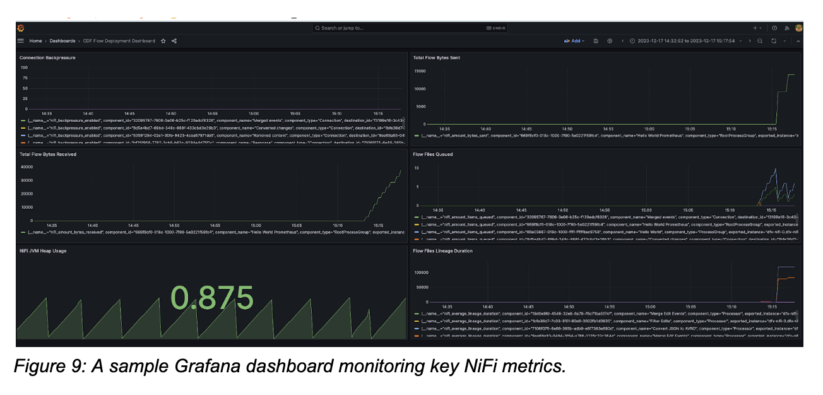

With all NiFi metrics available in Grafana, we can now build a full dashboard that tracks every relevant metric. The example dashboard tracks:

- total bytes received and sent
- the number of FlowFiles queued across all components
- the average lineage duration
- the current NiFi JVM heap usage

Together, these tell us how our flows are doing.
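Each of the panels described above maps to one PromQL expression. In this Python sketch, only `nifi_amount_bytes_received` comes from the text. The other metric names (`nifi_amount_bytes_sent`, `nifi_amount_items_queued`, `nifi_average_lineage_duration`, `nifi_jvm_heap_usage`) are assumptions based on NiFi's Prometheus metric naming, so confirm them in the Prometheus metrics explorer before using them. Per Table 1, JVM metrics are only exposed when `prometheus-reporting-task-metrics-send-jvm` is set to "true". The example configuration sets it to "false".

```python
import json


def dashboard_queries(component_name: str) -> dict[str, str]:
    """Map panel titles to PromQL expressions for one process group.

    Metric names other than nifi_amount_bytes_received are assumptions;
    verify them in the Prometheus metrics explorer.
    """
    # Label filter shared by the per-process-group panels.
    flt = f"{{component_name={json.dumps(component_name)}}}"
    return {
        "Bytes received": f"nifi_amount_bytes_received{flt}",
        "Bytes sent": f"nifi_amount_bytes_sent{flt}",
        # Queued FlowFiles for the process group, which covers all of its components.
        "FlowFiles queued": f"nifi_amount_items_queued{flt}",
        "Average lineage duration": f"nifi_average_lineage_duration{flt}",
        # Only present if prometheus-reporting-task-metrics-send-jvm is "true".
        "JVM heap usage": "nifi_jvm_heap_usage",
    }
```

You can paste each expression into a Grafana panel's query editor, or pass it to a provisioning script if you manage dashboards as code.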

Conclusion


The NiFi Prometheus reporting task, together with CDF inbound connections, makes it easy to monitor key metrics in Prometheus and create Grafana dashboards. The recently added create-reporting-task CDF CLI command lets customers automate Prometheus monitoring setup for every new deployment. This fits into their standard CI/CD pipeline.

Try out CDF-PC using the public five-day trial, and check out the Prometheus monitoring demo video for a step-by-step tutorial.