Today, we're excited to announce Databricks LakeFlow, a new solution that contains everything you need to build and operate production data pipelines. It includes new native, highly scalable connectors for databases including MySQL, Postgres, SQL Server and Oracle and enterprise applications like Salesforce, Microsoft Dynamics, NetSuite, Workday, ServiceNow and Google Analytics. Users can transform data in batch and streaming using standard SQL and Python. We're also announcing Real Time Mode for Apache Spark, which allows stream processing at latencies orders of magnitude lower than microbatch. Finally, you can orchestrate and monitor workflows and deploy to production using CI/CD. Databricks LakeFlow is native to the Data Intelligence Platform, providing serverless compute and unified governance with Unity Catalog.

In this blog post we discuss why we believe LakeFlow will help data teams meet the growing demand for reliable data and AI, and we walk through LakeFlow's key capabilities, which are integrated into a single product experience.

Challenges in building and operating reliable data pipelines

Data engineering (collecting and preparing fresh, high-quality and reliable data) is a necessary ingredient for democratizing data and AI in your business. Yet achieving this remains full of complexity and requires stitching together many different tools.

First, data teams need to ingest data from multiple systems, each with its own formats and access methods. This requires building and maintaining in-house connectors for databases and enterprise applications. Just keeping up with enterprise applications' API changes can be a full-time job for an entire data team. Data then needs to be prepared in both batch and streaming, which requires writing and maintaining complex logic for triggering and incremental processing. When latency spikes or a failure occurs, it means getting paged, a set of unhappy data consumers and even disruptions to the business that affect the bottom line. Finally, data teams need to deploy these pipelines using CI/CD and monitor the quality and lineage of data assets. This normally requires deploying, learning and managing another entirely new tool like Prometheus or Grafana.

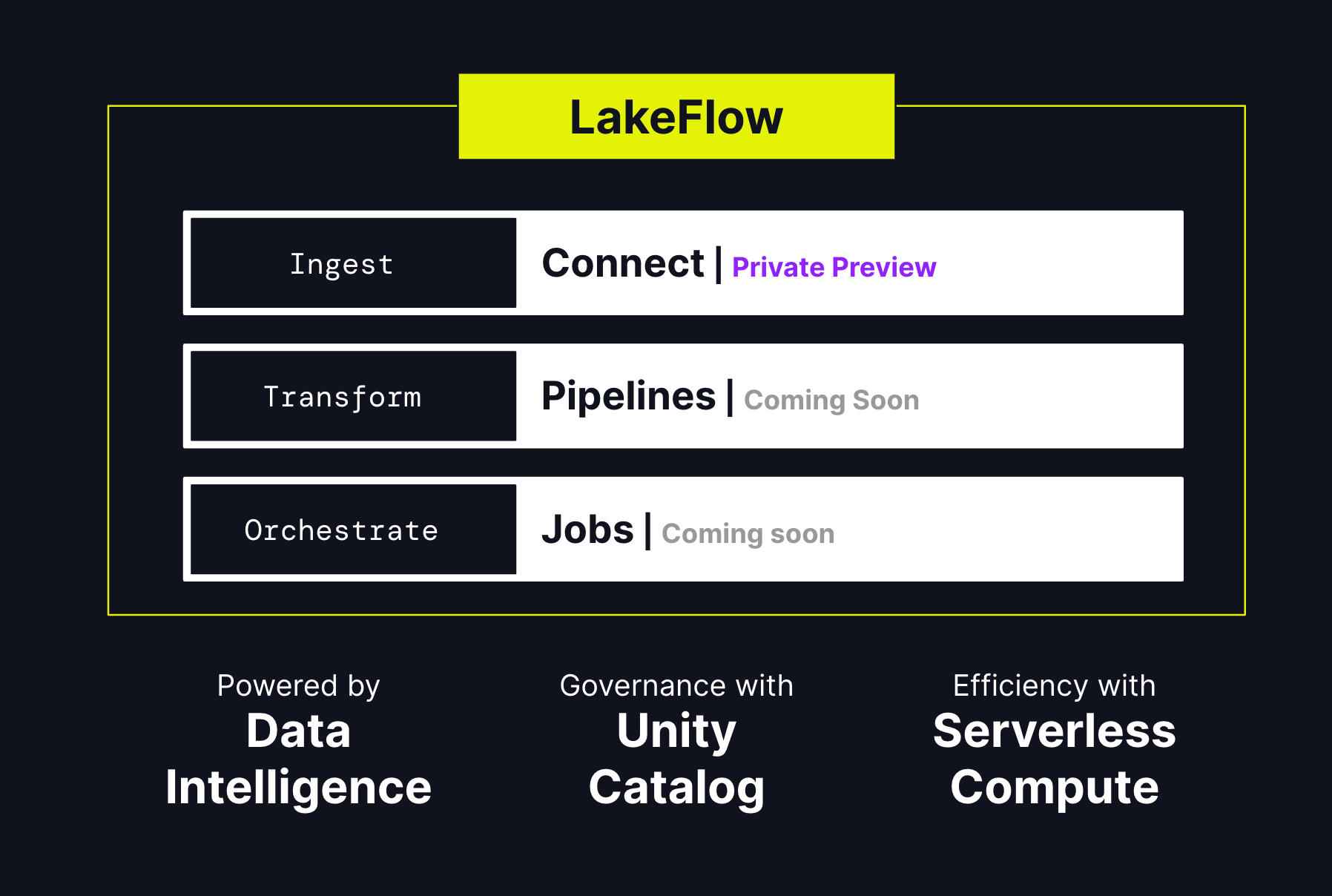

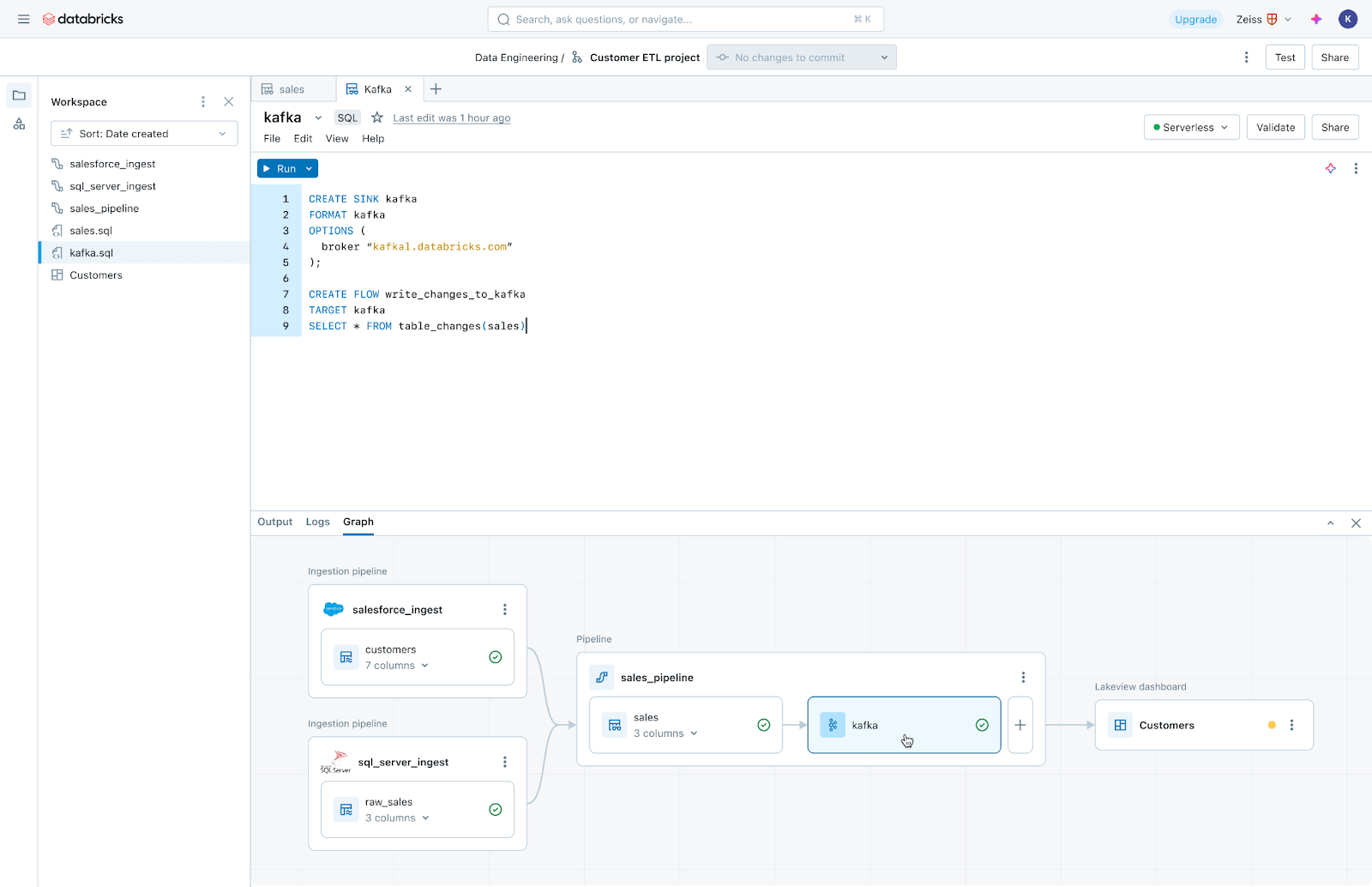

This is why we decided to build LakeFlow, a unified solution for data ingestion, transformation, and orchestration powered by data intelligence. Its three key components are: LakeFlow Connect, LakeFlow Pipelines and LakeFlow Jobs.

LakeFlow Connect: Simple and scalable data ingestion

LakeFlow Connect provides point-and-click data ingestion from databases such as MySQL, Postgres, SQL Server and Oracle and enterprise applications like Salesforce, Microsoft Dynamics, NetSuite, Workday, ServiceNow and Google Analytics. LakeFlow Connect can also ingest unstructured data such as PDFs and Excel spreadsheets from sources like SharePoint.

It extends our popular native connectors for cloud storage (e.g. S3, ADLS Gen2 and GCS) and queues (e.g. Kafka, Kinesis, Event Hub and Pub/Sub), as well as partner solutions such as Fivetran, Qlik and Informatica.

We're particularly excited about database connectors, which are powered by our acquisition of Arcion. An incredible amount of valuable data is locked away in operational databases. Instead of naive approaches to loading this data, which hit operational and scaling issues, LakeFlow uses change data capture (CDC) technology to make it simple, reliable and operationally efficient to bring this data to your lakehouse.
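To make the idea behind CDC concrete, here is a minimal sketch in plain Python. It is not LakeFlow Connect's implementation or API: the `ChangeEvent` shape, the `apply_changes` helper and the dictionary standing in for a lakehouse table are all assumptions made for illustration. The point it shows is that only row-level changes are shipped and replayed in log order, instead of reloading the whole source table.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One row-level change read from a source change log (hypothetical shape)."""
    op: str                                # "insert", "update" or "delete"
    key: int                               # primary key of the affected row
    row: Optional[Dict[str, Any]] = None   # new column values; None for deletes
    seq: int = 0                           # position in the change log


def apply_changes(target: Dict[int, Dict[str, Any]],
                  events: Iterable[ChangeEvent]) -> int:
    """Replay events against `target` in log order; return the last sequence applied."""
    last_seq = 0
    for event in sorted(events, key=lambda e: e.seq):
        if event.op == "delete":
            target.pop(event.key, None)
        elif event.op in ("insert", "update"):
            # Upsert: merge the new values over whatever is already stored.
            target[event.key] = {**target.get(event.key, {}), **(event.row or {})}
        else:
            raise ValueError(f"unknown operation: {event.op}")
        last_seq = event.seq
    return last_seq


# Target table, modelled as a dict keyed by primary key (an assumption).
customers = {1: {"name": "Ada", "tier": "free"}}

# Only the changes since the last sync arrive, possibly out of order.
log = [
    ChangeEvent("update", 1, {"tier": "pro"}, seq=2),
    ChangeEvent("insert", 2, {"name": "Grace", "tier": "free"}, seq=1),
    ChangeEvent("delete", 2, seq=3),
    ChangeEvent("insert", 3, {"name": "Linus", "tier": "free"}, seq=4),
]
checkpoint = apply_changes(customers, log)
print(customers)
print(checkpoint)
```

The returned sequence number acts as a checkpoint: a later sync would only need events with a higher `seq`, which is what keeps the load on the operational database small.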

Databricks customers who are using LakeFlow Connect find that a simple ingestion solution improves productivity and lets them move faster from data to insights. Insulet, the manufacturer of the Omnipod wearable insulin management system, uses the Salesforce ingestion connector to ingest customer feedback data into its data solution, which is built on Databricks. This data is made available for analysis through Databricks SQL to gain insights into quality issues and track customer complaints. The team found significant value in using the new capabilities of LakeFlow Connect.

“With the new Salesforce ingestion connector from Databricks, we've significantly streamlined our data integration process by eliminating fragile and problematic middleware. This improvement allows Databricks SQL to directly analyze Salesforce data within Databricks. As a result, our data practitioners can now deliver updated insights in near-real time, reducing latency from days to minutes.”

– Bill Whiteley, Senior Director of AI, Analytics, and Advanced Algorithms, Insulet

LakeFlow Pipelines: Efficient declarative data pipelines

LakeFlow Pipelines lowers the complexity of building and managing efficient batch and streaming data pipelines. Built on the declarative Delta Live Tables framework, it frees you up to write business logic in SQL and Python while Databricks automates data orchestration, incremental processing and compute infrastructure autoscaling on your behalf. Moreover, LakeFlow Pipelines offers built-in data quality monitoring, and its Real Time Mode lets you enable consistently low-latency delivery of time-sensitive datasets without any code changes.
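As a rough illustration of what "declarative" means here, the sketch below uses plain Python rather than the Delta Live Tables API. The `table` decorator, the `run_pipeline` function and the sample tables are hypothetical names invented for this example. Each table only states which upstream tables it reads; the small framework then derives the execution order from those declarations.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Tuple

# Registry of declared tables: name -> (upstream table names, transform function).
_TABLES: Dict[str, Tuple[Tuple[str, ...], Callable[..., List[dict]]]] = {}


def table(*depends_on: str):
    """Declare a table and the upstream tables it reads (hypothetical decorator)."""
    def register(fn):
        _TABLES[fn.__name__] = (depends_on, fn)
        return fn
    return register


def run_pipeline() -> Dict[str, List[dict]]:
    """Derive the execution order from declared dependencies, then build each table."""
    graph = {name: set(deps) for name, (deps, _) in _TABLES.items()}
    results: Dict[str, List[dict]] = {}
    for name in TopologicalSorter(graph).static_order():
        deps, fn = _TABLES[name]
        results[name] = fn(*(results[d] for d in deps))
    return results


# Tables are declared in arbitrary order; the framework decides what runs first.
@table("clean_orders")
def revenue_by_country(clean_orders):
    totals: Dict[str, float] = {}
    for order in clean_orders:
        totals[order["country"]] = totals.get(order["country"], 0.0) + order["amount"]
    return [{"country": c, "revenue": r} for c, r in sorted(totals.items())]


@table("raw_orders")
def clean_orders(raw_orders):
    # A simple data quality rule: drop rows with a non-positive amount.
    return [o for o in raw_orders if o["amount"] > 0]


@table()
def raw_orders():
    return [
        {"country": "DE", "amount": 10.0},
        {"country": "US", "amount": 25.0},
        {"country": "DE", "amount": -1.0},
        {"country": "US", "amount": 5.0},
    ]


tables = run_pipeline()
print(tables["revenue_by_country"])
```

The author of each table writes only the business logic and its inputs. Ordering, and in a real system incremental processing and scaling, are left to the engine, which is the division of labour the paragraph above describes.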

LakeFlow Jobs: Reliable orchestration for every workload

LakeFlow Jobs reliably orchestrates and monitors production workloads. Built on the advanced capabilities of Databricks Workflows, it orchestrates any workload, including ingestion, pipelines, notebooks, SQL queries, machine learning training, model deployment and inference. Data teams can also use triggers, branching and looping to meet complex data delivery use cases.

LakeFlow Jobs also automates and simplifies the process of understanding and tracking data health and delivery. It takes a data-first view of health, giving data teams full lineage, including relationships between ingestion, transformations, tables and dashboards. Additionally, it tracks data freshness and quality, and lets data teams add monitors via Lakehouse Monitoring with the click of a button.

Built on the Data Intelligence Platform

Databricks LakeFlow is natively integrated with our Data Intelligence Platform, which brings these capabilities:

- Data intelligence: AI-powered intelligence is not just a feature of LakeFlow; it is a foundational capability that touches every aspect of the product. Databricks Assistant powers the discovery, authoring and monitoring of data pipelines, so you can spend more time building reliable data.

- Unified governance: LakeFlow is also deeply integrated with Unity Catalog, which powers lineage and data quality.

- Serverless compute: Build and orchestrate pipelines at scale and help your team focus on their work without having to worry about infrastructure.

The future of data engineering is simple, unified and intelligent

We believe that LakeFlow will enable our customers to deliver fresher, more complete and higher-quality data to their businesses. LakeFlow will enter preview soon, starting with LakeFlow Connect. If you would like to request access, sign up here. Over the coming months, look for more LakeFlow announcements as additional capabilities become available.