The Databricks Knowledge Intelligence Platform affords unparalleled flexibility, permitting customers to entry practically immediate, horizontally scalable compute assets. This ease of creation can result in unchecked cloud prices if not correctly managed.

Implement Observability to Observe & Chargeback Value

Learn how to successfully use observability to trace & cost again prices in Databricks

When working with complicated technical ecosystems, proactively understanding the unknowns is essential to sustaining platform stability and controlling prices. Observability offers a technique to analyze and optimize techniques primarily based on the info they generate. That is completely different from monitoring, which focuses on figuring out new patterns fairly than monitoring recognized points.

Key options for price monitoring in Databricks

Tagging: Use tags to categorize assets and prices. This enables for extra granular price allocation.

System Tables: Leverage system tables for automated price monitoring and chargeback. Cloud-native price monitoring instruments: Make the most of these instruments for insights into prices throughout all assets.

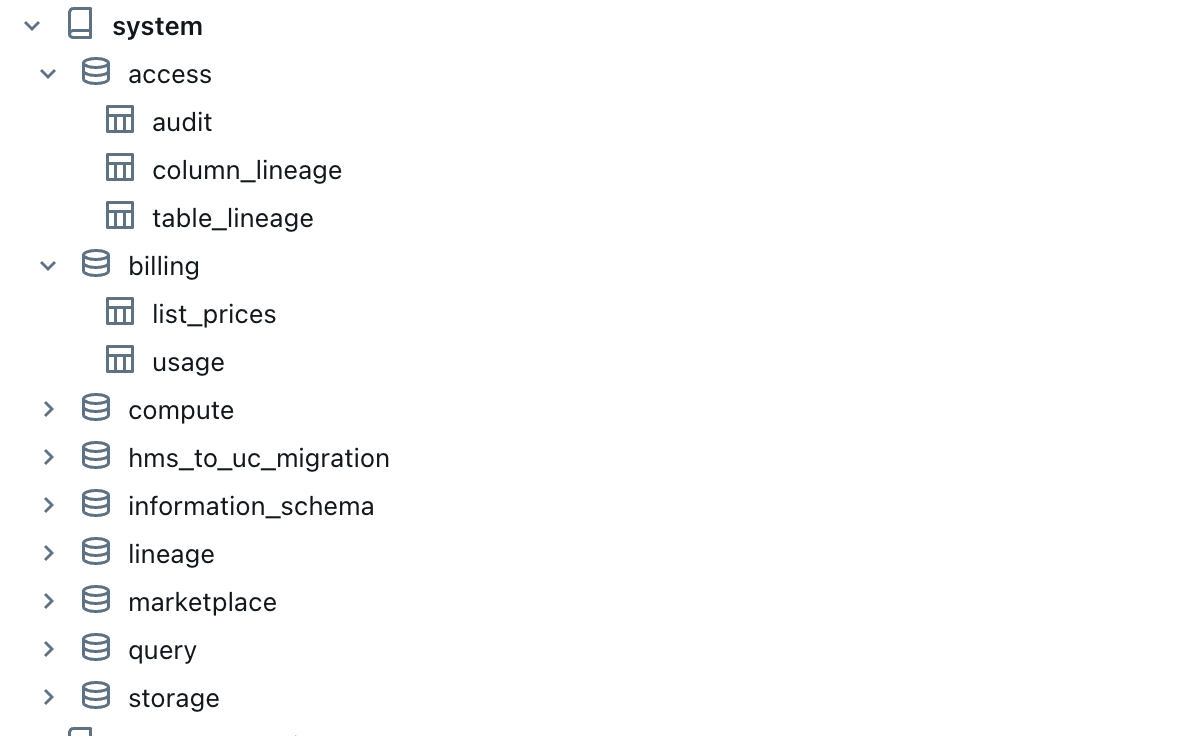

What are System Tables & how one can use them

Databricks present nice observability capabilities utilizing System tables are Databricks-hosted analytical shops of a buyer account’s operational information discovered within the system catalog. They supply historic observability throughout the account and embody user-friendly tabular info on platform telemetry. .Key insights like Billing utilization information can be found in system tables (this at present solely contains DBU’s Checklist Value), with every utilization report representing an hourly combination of a useful resource’s billable utilization.

Learn how to allow system tables

System tables are managed by Unity Catalog and require a Unity Catalog-enabled workspace to entry. They embody information from all workspaces however can solely be queried from enabled workspaces. Enabling system tables occurs on the schema degree – enabling a schema permits all its tables. Admins should manually allow new schemas utilizing the API.

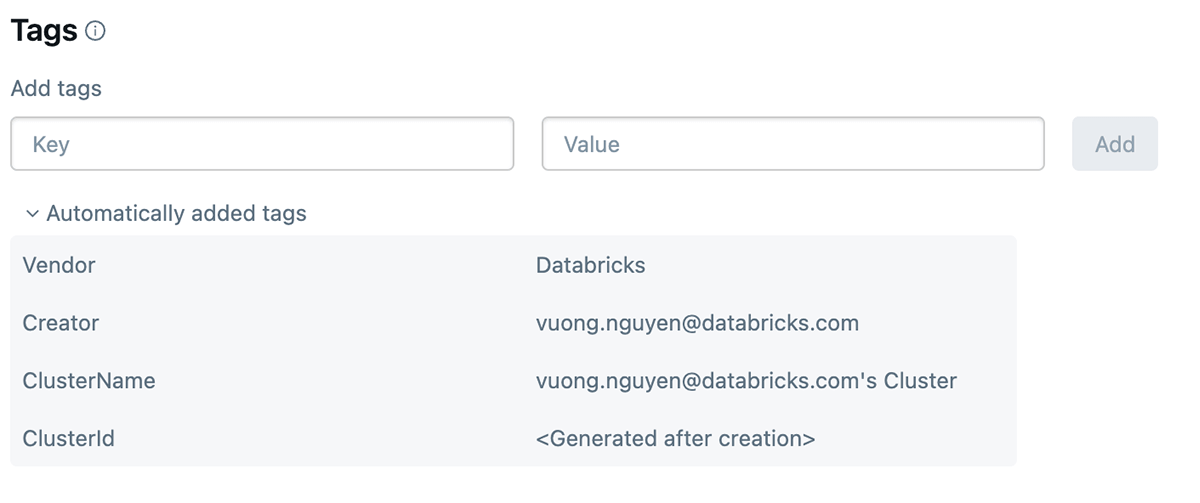

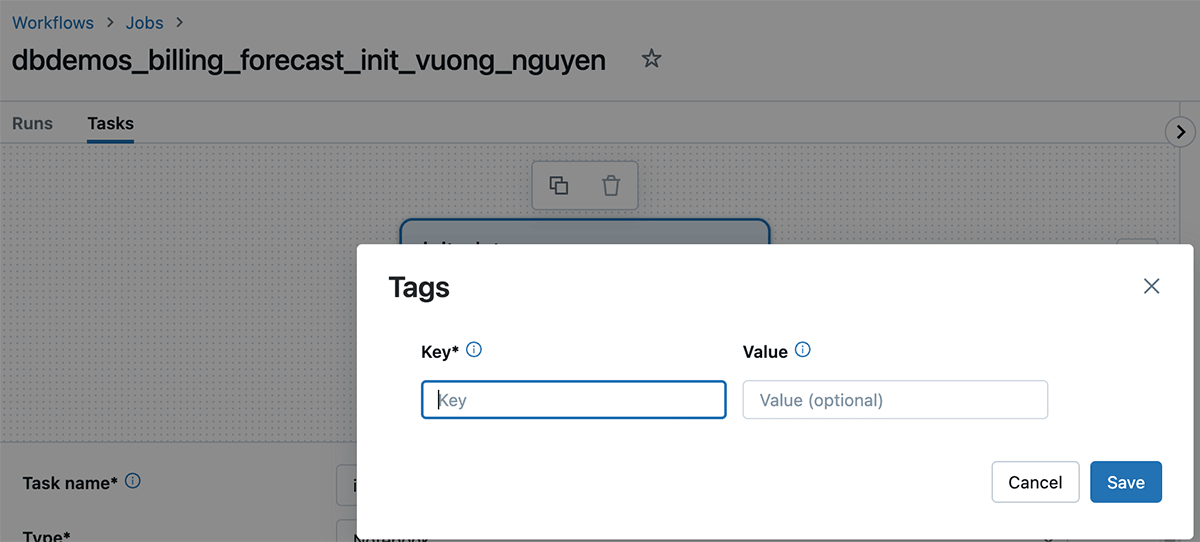

What are Databricks tags & how one can use them

Databricks tagging permits you to apply attributes (key-value pairs) to assets for higher group, search, and administration. For monitoring price and cost again groups can tag their databricks jobs and compute (Clusters, SQL warehouse), which can assist them monitor utilization, prices, and attribute them to particular groups or items.

Learn how to apply tags

Tags may be utilized to the next databricks assets for monitoring utilization and value:

- Databricks Compute

- Databricks Jobs

As soon as these tags are utilized, detailed price evaluation may be carried out utilizing the billable utilization system tables.

Learn how to establish price utilizing cloud native instruments

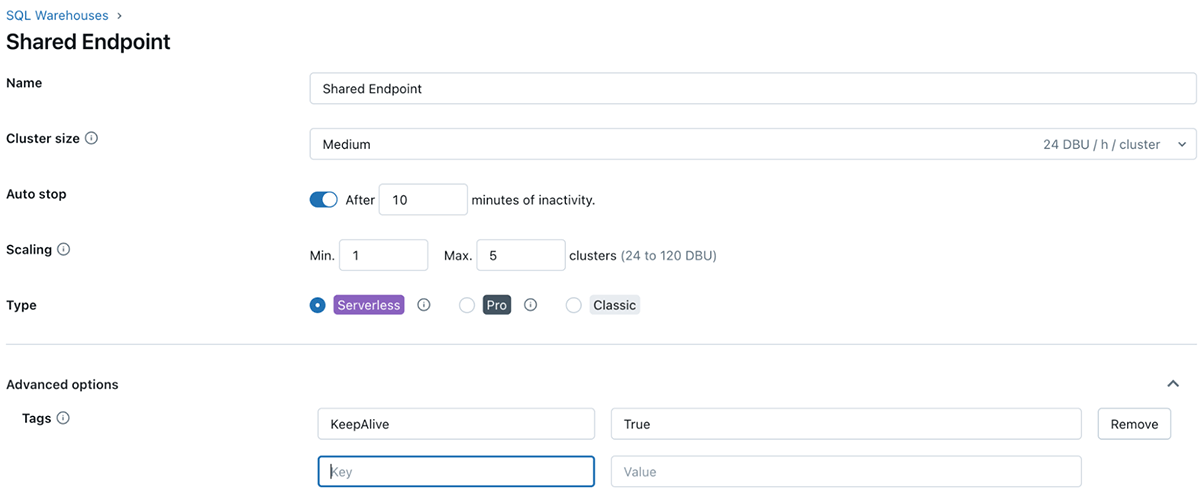

To watch price and precisely attribute Databricks utilization to your group’s enterprise items and groups (for chargebacks, for instance), you’ll be able to tag workspaces (and the related managed useful resource teams) in addition to compute assets.

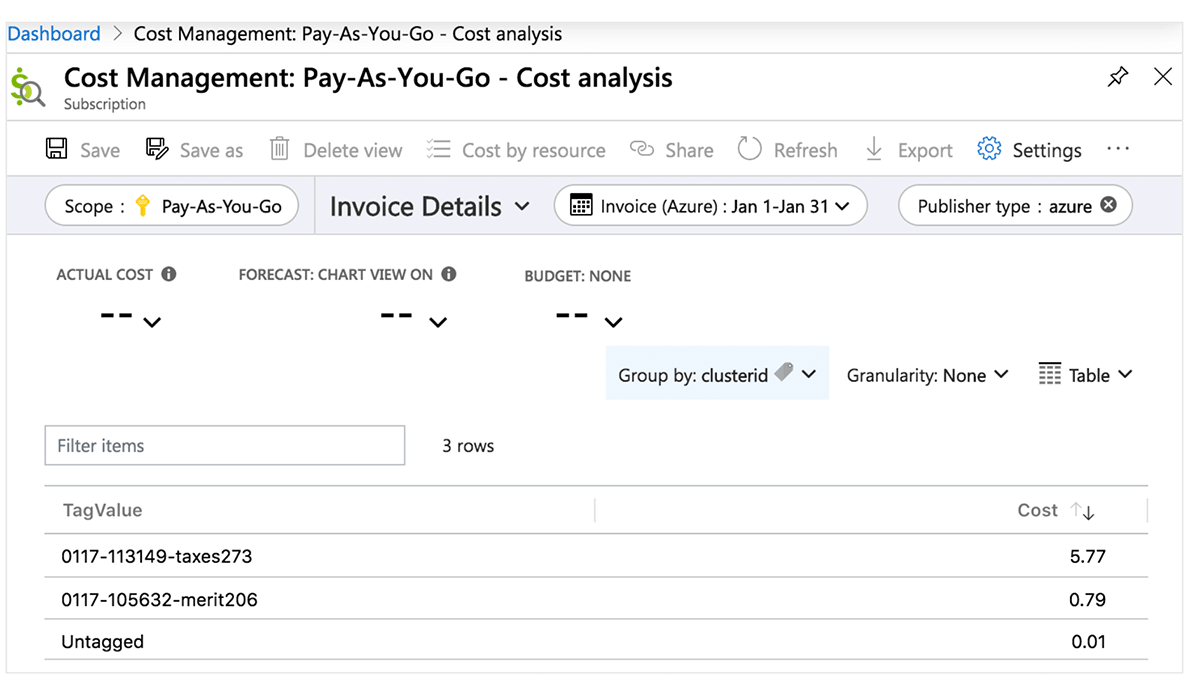

Azure Value Heart

The next desk elaborates Azure Databricks objects the place tags may be utilized. These tags can propagate to detailed price evaluation stories you can entry within the portal and to the billable utilization system desk. Discover extra particulars on tag propagation and limitations in Azure.

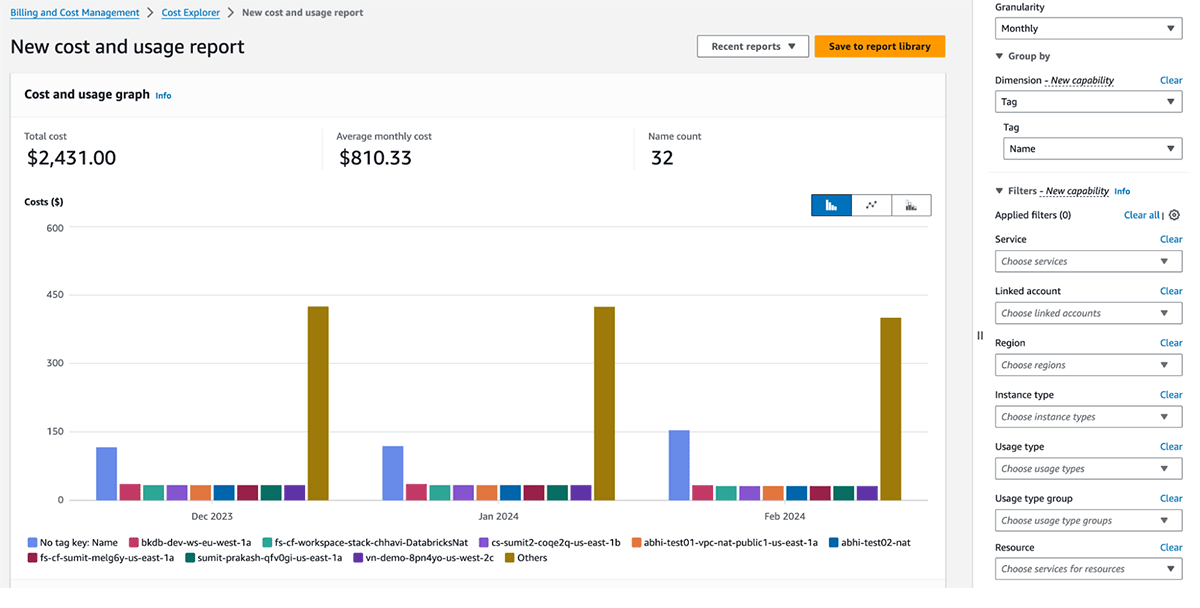

AWS Value Explorer

The next desk elaborates AWS Databricks Objects the place tags may be utilized.These tags can propagate each to utilization logs and to AWS EC2 and AWS EBS cases for price evaluation. Databricks recommends utilizing system tables (Public Preview) to view billable utilization information. Discover extra particulars on tags propagation and limitations in AWS.

| AWS Databricks Object | Tagging Interface (UI) | Tagging Interface (API) |

|---|---|---|

| Workspace | N/A | Account API |

| Pool | Swimming pools UI within the Databricks workspace | Occasion Pool API |

| All-purpose & Job compute | Compute UI within the Databricks workspace | Clusters API |

| SQL Warehouse | SQL warehouse UI within the Databricks workspace | Warehouse API |

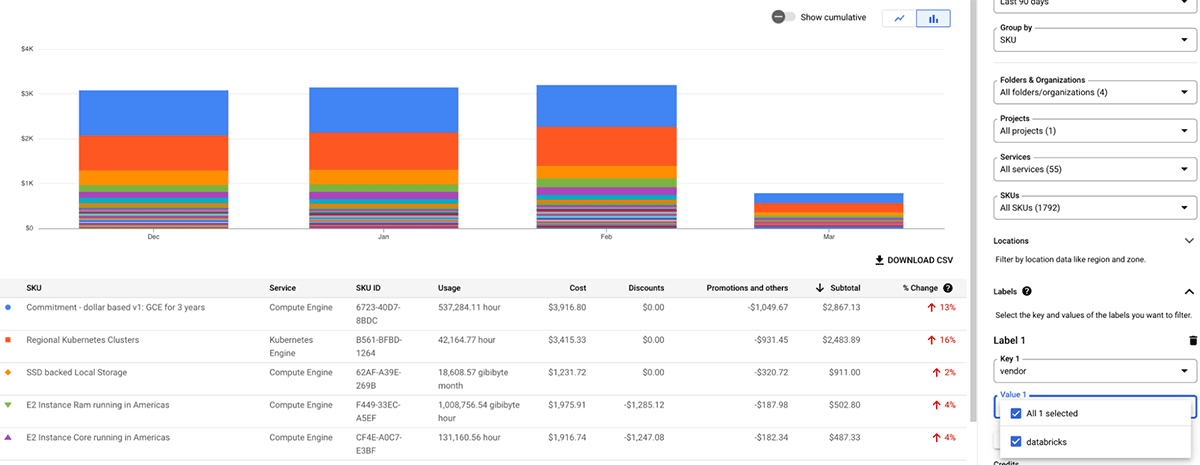

GCP Value administration and billing

The next desk elaborates GCP databricks objects the place tags may be utilized. These tags/labels may be utilized to compute assets. Discover extra particulars on tags/labels propagation and limitations in GCP.

The Databricks billable utilization graphs within the account console can combination utilization by particular person tags. The billable utilization CSV stories downloaded from the identical web page additionally embody default and customized tags. Tags additionally propagate to GKE and GCE labels.

| GCP Databricks Object | Tagging Interface (UI) | Tagging Interface (API) |

|---|---|---|

| Pool | Swimming pools UI within the Databricks workspace | Occasion Pool API |

| All-purpose & Job compute | Compute UI within the Databricks workspace | Clusters API |

| SQL Warehouse | SQL warehouse UI within the Databricks workspace | Warehouse API |

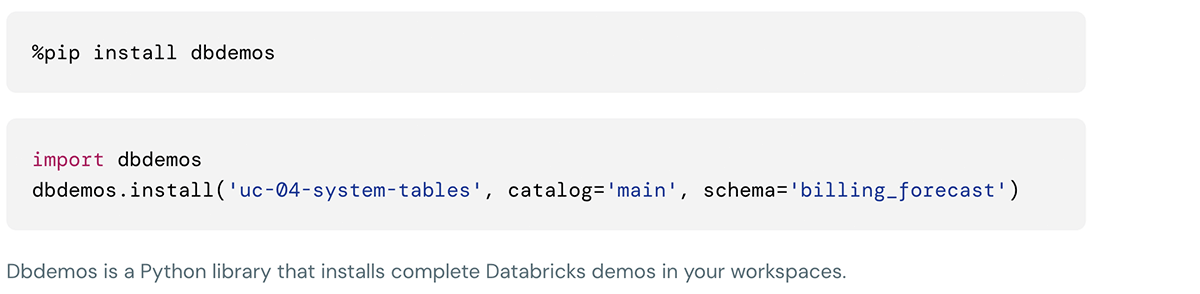

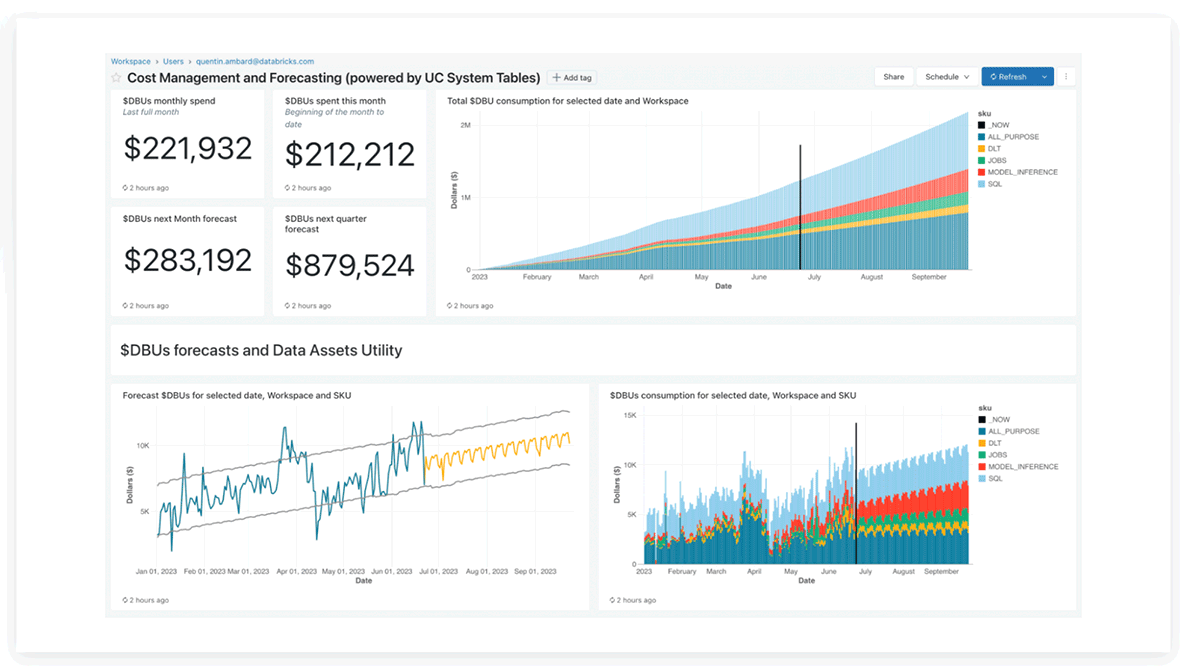

Databricks System tables Lakeview dashboard

The Databricks product crew has supplied precreated lakeview dashboards for price evaluation and forecasting utilizing system tables, which prospects can customise as nicely.

This demo may be put in utilizing following instructions within the databricks notebooks cell:

Greatest Practices to Maximize Worth

When operating workloads on Databricks, selecting the best compute configuration will considerably enhance the associated fee/efficiency metrics. Beneath are some sensible price optimizations strategies:

Utilizing the appropriate compute kind for the appropriate job

For interactive SQL workloads, SQL warehouse is essentially the most cost-efficient engine. Much more environment friendly might be Serverless compute, which comes with a really quick beginning time for SQL warehouses and permits for shorter auto-termination time.

For non-interactive workloads, Jobs clusters price considerably lower than an all-purpose clusters. Multitask workflows can reuse compute assets for all duties, bringing prices down even additional

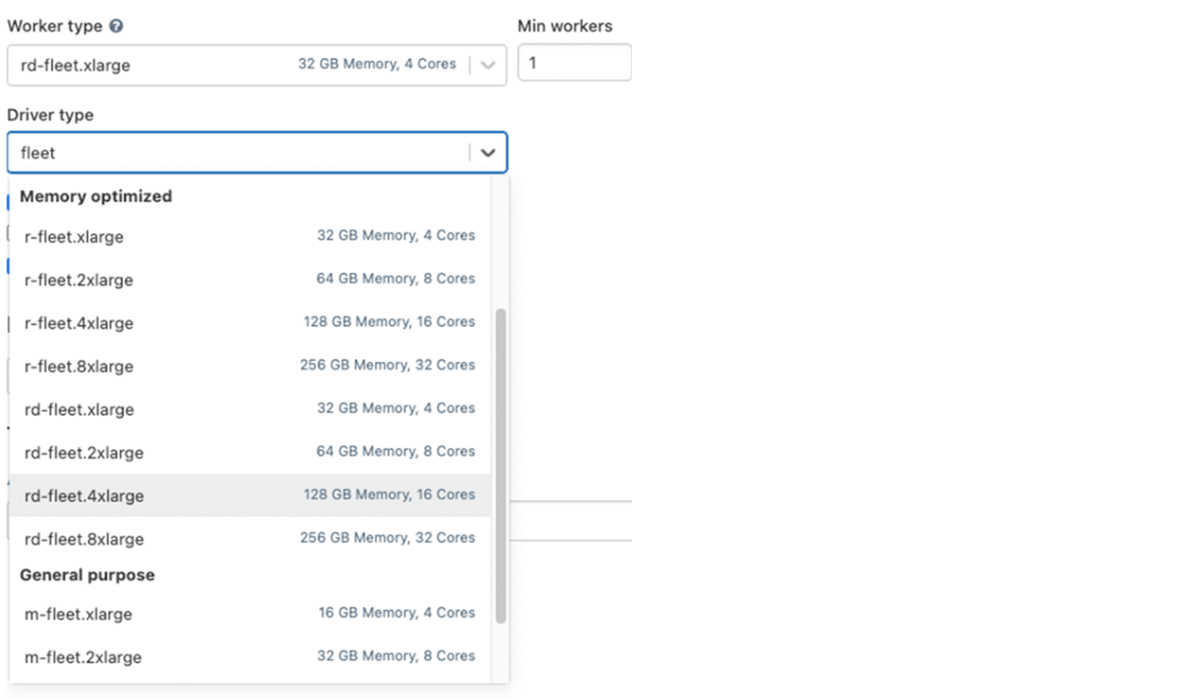

Selecting the correct occasion kind

Utilizing the newest technology of cloud occasion sorts will virtually at all times carry efficiency advantages, as they arrive with the most effective efficiency and newest options. On AWS, Graviton2-based Amazon EC2 cases can ship as much as 3x higher price-performance than comparable Amazon EC2 cases.

Based mostly in your workloads, additionally it is essential to select the appropriate occasion household. Some easy guidelines of thumb are:

- Reminiscence optimized for ML, heavy shuffle & spill workloads

- Compute optimized for Structured Streaming workloads, upkeep jobs (e.g. Optimize & Vacuum)

- Storage optimized for workloads that profit from caching, e.g. ad-hoc & interactive information evaluation

- GPU optimized for particular ML & DL workloads

- Normal goal in absence of particular necessities

Selecting the Proper Runtime

The newest Databricks Runtime (DBR) normally comes with improved efficiency and can virtually at all times be sooner than the one earlier than it.

Photon is a high-performance Databricks-native vectorized question engine that runs your SQL workloads and DataFrame API calls sooner to cut back your whole price per workload. For these workloads, enabling Photon may carry vital price financial savings.

Leveraging Autoscaling in Databricks Compute

Databricks offers a singular function of cluster autoscaling making it simpler to realize excessive cluster utilization since you don’t must provision the cluster to match a workload. That is notably helpful for interactive workloads or batch workloads with various information load. Nonetheless, basic Autoscaling doesn’t work with Structured Streaming workloads, which is why we’ve got developed Enhanced Autoscaling in Delta Dwell Tables to deal with streaming workloads that are spiky and unpredictable.

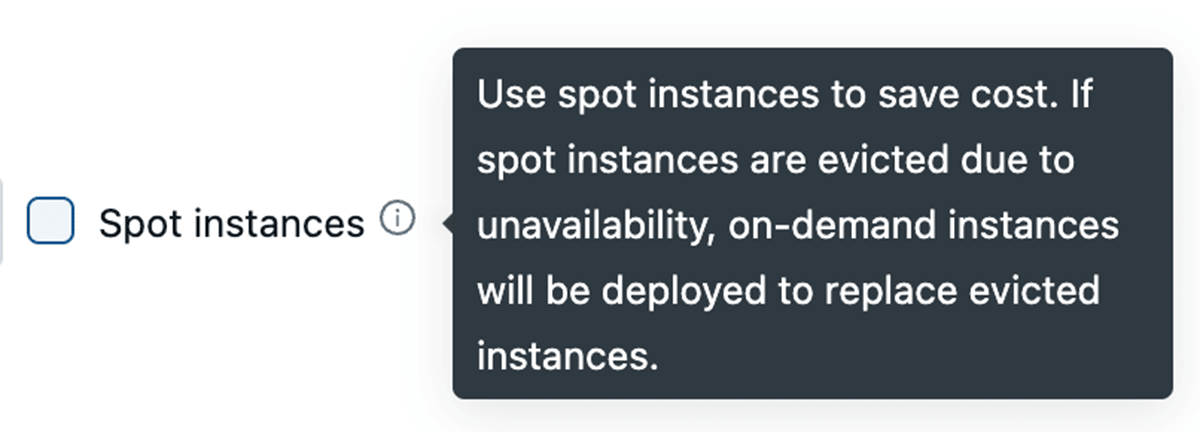

Leveraging Spot Cases

All main cloud suppliers provide spot cases which let you entry unused capability of their information facilities for as much as 90% lower than common On-Demand cases. Databricks permits you to leverage these spot cases, with the flexibility to fallback to On-Demand cases robotically in case of termination to attenuate disruption. For cluster stability, we advocate utilizing On-Demand driver nodes.

Leveraging Fleet occasion kind (on AWS)

Underneath the hood, when a cluster makes use of one among these fleet occasion sorts, Databricks will choose the matching bodily AWS occasion sorts with the most effective value and availability to make use of in your cluster.

Cluster Coverage

Efficient use of cluster insurance policies permits directors to implement price particular restrictions for finish customers:

- Allow cluster auto termination with an inexpensive worth (for instance, 1 hour) to keep away from paying for idle occasions.

- Make sure that solely cost-efficient VM cases may be chosen

- Implement necessary tags for price chargeback

- Management total price profile by limiting per-cluster most price, e.g. max DBUs per hour or max compute assets per person

AI-powered Value Optimisation

The Databricks Knowledge Intelligence Platform integrates superior AI options which optimizes efficiency, reduces prices, improves governance, and simplifies enterprise AI utility improvement. Predictive I/O and Liquid Clustering improve question speeds and useful resource utilization, whereas clever workload administration optimizes autoscaling for price effectivity. General, Databricks’ platform affords a complete suite of AI instruments to drive productiveness and value financial savings whereas enabling progressive options for industry-specific use instances.

Liquid clustering

Delta Lake liquid clustering replaces desk partitioning and ZORDER to simplify information structure choices and optimize question efficiency. Liquid clustering offers flexibility to redefine clustering keys with out rewriting present information, permitting information structure to evolve alongside analytical wants over time.

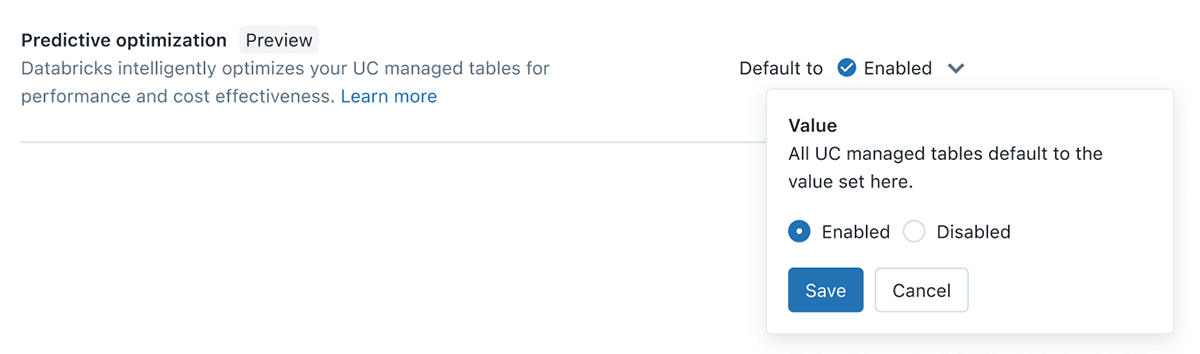

Predictive Optimization

Knowledge engineers on the lakehouse shall be aware of the necessity to usually OPTIMIZE & VACUUM their tables, nevertheless this creates ongoing challenges to determine the appropriate tables, the suitable schedule and the appropriate compute dimension for these duties to run. With Predictive Optimization, we leverage Unity Catalog and Lakehouse AI to find out the most effective optimizations to carry out in your information, after which run these operations on purpose-built serverless infrastructure. This all occurs robotically, guaranteeing the most effective efficiency with no wasted compute or guide tuning effort.

Materialized View with Incremental Refresh

In Databricks, Materialized Views (MVs) are Unity Catalog managed tables that permit customers to precompute outcomes primarily based on the newest model of knowledge in supply tables. Constructed on prime of Delta Dwell Tables & serverless, MVs cut back question latency by pre-computing in any other case gradual queries and incessantly used computations. When attainable, outcomes are up to date incrementally, however outcomes are an identical to those who can be delivered by full recomputation. This reduces computational price and avoids the necessity to preserve separate clusters

Serverless options for Mannequin Serving & Gen AI use instances

To higher help mannequin serving and Gen AI use instances, Databricks have launched a number of capabilities on prime of our serverless infrastructure that robotically scales to your workflows with out the necessity to configure cases and server sorts.

- Vector Search: Vector index that may be synchronized from any Delta Desk with 1-click – no want for complicated, customized constructed information ingestion/sync pipelines.

- On-line Tables: Absolutely serverless tables that auto-scale throughput capability with the request load and supply low latency and excessive throughput entry to information of any scale

- Mannequin Serving: extremely obtainable and low-latency service for deploying fashions. The service robotically scales up or down to satisfy demand adjustments, saving infrastructure prices whereas optimizing latency efficiency

Predictive I/O for updates and Deletes

With these AI powered options Databricks SQL now can analyze historic learn and write patterns to intelligently construct indexes and optimize workloads. Predictive I/O is a group of Databricks optimizations that enhance efficiency for information interactions. Predictive I/O capabilities are grouped into the next classes:

- Accelerated reads cut back the time it takes to scan and skim information. It makes use of deep studying strategies to realize this. Extra particulars may be discovered on this documentation

- Accelerated updates cut back the quantity of knowledge that must be rewritten throughout updates, deletes, and merges.Predictive I/O leverages deletion vectors to speed up updates by decreasing the frequency of full file rewrites throughout information modification on Delta tables. Predictive I/O optimizes

DELETE,MERGE, andUPDATEoperations.Extra particulars may be discovered on this documentation

Predictive I/O is unique to the Photon engine on Databricks.

Clever workload administration (IWM)

One of many main ache factors of technical platform admins is to handle completely different warehouses for small and huge workloads and ensure code is optimized and superb tuned to run optimally and leverage the total capability of the compute infrastructure. IWM is a collection of options that helps with above challenges and helps run these workloads sooner whereas holding the associated fee down. It achieves this by analyzing actual time patterns and guaranteeing that the workloads have the optimum quantity of compute to execute the incoming SQL statements with out disrupting already-running queries.

The precise FinOps basis – by tagging, insurance policies, and reporting – is essential for transparency and ROI on your Knowledge Intelligence Platform. It helps you notice enterprise worth sooner and construct a extra profitable firm.

Use serverless and DatabricksIQ for speedy setup, cost-efficiency, and computerized optimizations that adapt to your workload patterns. This results in decrease TCO, higher reliability, and less complicated, more cost effective operations.