Availability is an important function

— Mike Fisher, former CTO of Etsy

“I get knocked down, however I rise up once more…”

— Tubthumping, Chumbawumba

Each group pays consideration to resilience. The large query is

when.

Startups are likely to solely handle resilience when their programs are already

down, typically taking a really reactive strategy. For a scaleup, extreme system

downtime represents a big bottleneck to the group, each from

the hassle expended on restoring perform and likewise from the impression of buyer

dissatisfaction.

To maneuver previous this, resilience must be constructed into the enterprise

aims, which is able to affect the structure, design, product

administration, and even governance of enterprise programs. On this article, we’ll

discover the Resilience and Observability Bottleneck: how one can acknowledge

it coming, the way you would possibly notice it has already arrived, and what you are able to do

to outlive the bottleneck.

How did you get into the bottleneck?

One of many first objectives of a startup is getting an preliminary product out

to market. Getting it in entrance of as many customers as potential and receiving

suggestions from them is often the very best precedence. If prospects use

your product and see the distinctive worth it delivers, your startup will carve

out market share and have a reliable income stream. Nonetheless, getting

there typically comes at a price to the resilience of your product.

A startup might resolve to skip automating restoration processes, as a result of at

a small scale, the group believes it could present resilience by means of

the builders that know the system effectively. Incidents are dealt with in a

reactive nature, and resolutions come by hand. Potential options is likely to be

spinning up one other occasion to deal with elevated load, or restarting a

service when it’s failing. Your first prospects would possibly even pay attention to

your lack of true resilience as they expertise system outages.

At one in all our scaleup engagements, to get the system out to manufacturing

shortly, the consumer deprioritized well being test mechanisms within the

cluster. The builders managed the startup course of efficiently for the

few instances when it was vital. For an vital demo, it was determined to

spin up a brand new cluster in order that there could be no externalities impacting

the system efficiency. Sadly, actively managing the standing of all

the providers working within the cluster was neglected. The demo began

earlier than the system was totally operational and an vital element of the

system failed in entrance of potential prospects.

Essentially, your group has made an express trade-off

prioritizing user-facing performance over automating resilience,

playing that the group can recuperate from downtime by means of handbook

intervention. The trade-off is probably going acceptable as a startup whereas it’s

at a manageable scale. Nonetheless, as you expertise excessive progress charges and

rework from a

startup to a scaleup, the shortage of resilience proves to be a scaling

bottleneck, manifesting as an rising incidence of service

interruptions translating into extra work on the Ops facet of the DevOps

staff’s tasks, decreasing the productiveness of groups. The impression

appears to look all of a sudden, as a result of the impact tends to be non-linear

relative to the expansion of the client base. What was just lately manageable

is all of a sudden extraordinarily impactful. Ultimately, the size of the system

creates handbook work past the capability of your staff, which bubbles as much as

have an effect on the client experiences. The mixture of diminished productiveness

and buyer dissatisfaction results in a bottleneck that’s arduous to

survive.

The query then is, how do I do know if my product is about to hit a

scaling bottleneck? And additional, if I learn about these indicators, how can I

keep away from or preserve tempo with my scale? That’s what we’ll look to reply as we

describe frequent challenges we’ve skilled with our purchasers and the

options we’ve seen to be only.

Indicators you’re approaching a scaling bottleneck

It is all the time troublesome to function in an setting through which the size

of the enterprise is altering quickly. Investing in dealing with excessive site visitors

volumes too early is a waste of sources. Investing too late means your

prospects are already feeling the results of the scaling bottleneck.

To shift your working mannequin from reactive to proactive, you must

be capable of predict future habits with a confidence stage adequate to

assist vital enterprise selections. Making information pushed selections is

all the time the purpose. The secret’s to search out the main indicators which is able to

information you to organize for, and hopefully keep away from the bottleneck, reasonably than

react to a bottleneck that has already occurred. Based mostly on our expertise,

we’ve discovered a set of indicators associated to the frequent preconditions as

you strategy this bottleneck.

Resilience shouldn’t be a firstclass consideration

This can be the least apparent signal, however is arguably an important.

Resilience is considered purely a technical drawback and never a function

of the product. It’s deprioritized for brand spanking new options and enhancements. In

some circumstances, it’s not even a priority to be prioritized.

Right here’s a fast check. Pay attention to the completely different discussions that

happen inside your groups, and be aware the context through which resilience is

mentioned. Chances are you’ll discover that it isn’t included as a part of a standup, however

it does make its approach right into a developer assembly. When the event staff isn’t

chargeable for operations, resilience is successfully siloed away.

In these circumstances, pay shut consideration to how resilience is mentioned.

Proof of insufficient deal with resilience is usually oblique. At one

consumer, we’ve seen it come within the type of technical debt playing cards that not

solely aren’t prioritized, however grow to be a continuing rising record. At one other

consumer, the operations staff had their backlog stuffed purely with

buyer incidents, nearly all of which handled the system both

not being up or being unable to course of requests. When resilience considerations

aren’t a part of a staff’s backlog and roadmap, you’ll have proof that

it isn’t core to the product.

Fixing resilience by hand (reactive handbook resilience)

How your group resolve service outages is usually a key indicator

of whether or not your product can scaleup successfully or not. The traits

we describe listed below are essentially attributable to a

lack of automation, leading to extreme handbook effort. Are service

outages resolved by way of restarts by builders? Underneath excessive load, is there

coordination required to scale compute cases?

Usually, we discover

these approaches don’t comply with sustainable operational practices and are

brittle options for the following system outage. They embody bandaid options

which alleviate a symptom, however by no means really clear up it in a approach that permits

for future resilience.

Possession of programs aren’t effectively outlined

When your group is shifting shortly, creating new providers and

capabilities, very often key items of the service ecosystem, and even

the infrastructure, can grow to be “orphaned” – with out clear accountability

for operations. Consequently, manufacturing points might stay unnoticed till

prospects react. After they do happen, it takes longer to troubleshoot which

causes delays in resolving outages. Decision is delayed whereas ping ponging points

between groups in an effort to search out the accountable occasion, losing

everybody’s time as the difficulty bounces from staff to staff.

This drawback shouldn’t be distinctive to microservice environments. At one

engagement, we witnessed comparable conditions with a monolith structure

missing clear possession for elements of the system. On this case, readability

of possession points stemmed from an absence of clear system boundaries in a

“ball of mud” monolith.

Ignoring the fact of distributed programs

A part of creating efficient programs is having the ability to outline and use

abstractions that allow us to simplify a fancy system to the purpose

that it truly matches within the developer’s head. This permits builders to

make selections in regards to the future modifications essential to ship new worth

and performance to the enterprise. Nonetheless, as in all issues, one can go

too far, not realizing that these simplifications are literally

assumptions hiding vital constraints which impression the system.

Riffing off the fallacies of distributed computing:

- The community shouldn’t be dependable.

- Your system is affected by the pace of sunshine. Latency is rarely zero.

- Bandwidth is finite.

- The community shouldn’t be inherently safe.

- Topology all the time modifications, by design.

- The community and your programs are heterogeneous. Completely different programs behave

in another way beneath load. - Your digital machine will disappear whenever you least anticipate it, at precisely the

incorrect time. - As a result of folks have entry to a keyboard and mouse, errors will

occur. - Your prospects can (and can) take their subsequent motion in <

500ms.

Fairly often, testing environments present excellent world

situations, which avoids violating these assumptions. Methods which

don’t account for (and check for) these real-world properties are

designed for a world through which nothing dangerous ever occurs. Consequently,

your system will exhibit unanticipated and seemingly non-deterministic

habits because the system begins to violate the hidden assumptions. This

interprets into poor efficiency for purchasers, and extremely troublesome

troubleshooting processes.

Not planning for potential site visitors

Estimating future site visitors quantity is troublesome, and we discover that we

are incorrect extra typically than we’re proper. Over-estimating site visitors means

the group is losing effort designing for a actuality that doesn’t

exist. Underneath-estimating site visitors could possibly be much more catastrophic. Surprising

excessive site visitors hundreds may occur for a wide range of causes, and a social media advertising

marketing campaign which unexpectedly goes viral is an efficient instance. Instantly your

system can’t handle the incoming site visitors, elements begin to fall over,

and all the pieces grinds to a halt.

As a startup, you’re all the time trying to entice new prospects and acquire

further market share. How and when that manifests may be extremely

troublesome to foretell. On the scale of the web, something may occur,

and you must assume that it’s going to.

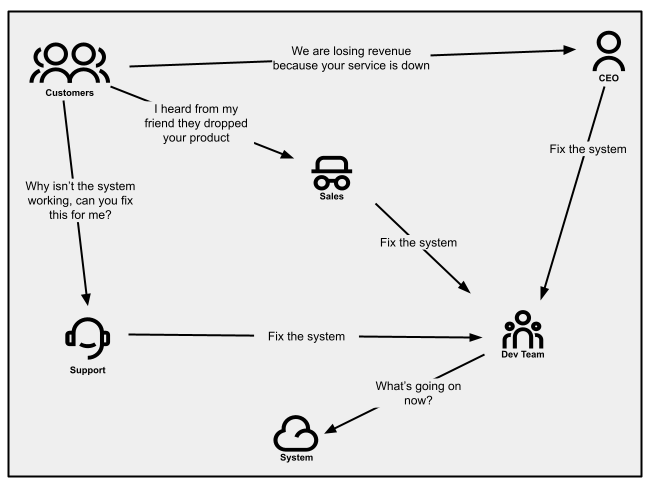

Alerted by way of buyer notifications

When prospects are invested in your product and imagine the difficulty is

resolvable, they could attempt to contact your assist workers for

assist. That could be by means of electronic mail, calling in, or opening a assist

ticket. Service failures trigger spikes in name quantity or electronic mail site visitors.

Your gross sales folks might even be relaying these messages as a result of

(potential) prospects are telling them as effectively. And if service outages

have an effect on strategic prospects, your CEO would possibly inform you straight (this can be

okay early on, however it’s definitely not a state you wish to be in long run).

Buyer communications won’t all the time be clear and easy, however

reasonably might be primarily based on a buyer’s distinctive expertise. If buyer success workers

don’t notice that these are indications of resilience issues,

they may proceed with enterprise as typical and your engineering workers will

not obtain the suggestions. After they aren’t recognized and managed

appropriately, notifications might then flip non-verbal. For instance, you might

all of a sudden discover the speed at which prospects are canceling subscriptions

will increase.

When working with a small buyer base, figuring out about an issue

by means of your prospects is “largely” manageable, as they’re pretty

forgiving (they’re on this journey with you in any case). Nonetheless, as

your buyer base grows, notifications will start to pile up in the direction of

an unmanageable state.

Determine 1:

Communication patterns as seen in a company the place buyer notifications

aren’t managed effectively.

How do you get out of the bottleneck?

After you have an outage, you wish to recuperate as shortly as potential and

perceive intimately why it occurred, so you may enhance your system and

guarantee it by no means occurs once more.

Tackling the resilience of your services and products whereas within the bottleneck

may be troublesome. Tactical options typically imply you find yourself caught in fireplace after fireplace.

Nonetheless if it’s managed strategically, even whereas within the bottleneck, not

solely are you able to relieve the stress in your groups, however you may be taught from previous restoration

efforts to assist handle by means of the hypergrowth stage and past.

The next 5 sections are successfully methods your group can implement.

We imagine they circulate so as and ought to be taken as a complete. Nonetheless, relying

in your group’s maturity, you might resolve to leverage a subset of

methods. Inside every, we lay out a number of options that work in the direction of it is

respective technique.

Guarantee you could have carried out fundamental resilience methods

There are some fundamental methods, starting from structure to

group, that may enhance your resiliency. They preserve your product

in the fitting place, enabling your group to scale successfully.

Use a number of zones inside a area

For extremely vital providers (and their information), configure and allow

them to run throughout a number of zones. This could give a bump to your

system availability, and enhance your resiliency within the case of

disruption (inside a zone).

Specify applicable computing occasion sorts and specs

Enterprise vital providers ought to have computing capability

appropriately assigned to them. If providers are required to run 24/7,

your infrastructure ought to replicate these necessities.

Match funding to vital service tiers

Many organizations handle funding by figuring out vital

service tiers, with the understanding that not all enterprise programs

share the identical significance by way of delivering buyer expertise

and supporting income. Figuring out service tiers and related

resilience outcomes knowledgeable by service stage agreements (SLAs), paired with structure and

design patterns that assist the outcomes, gives useful guardrails

and governance in your product growth groups.

Clearly outline house owners throughout your total system

Every service that exists inside your system ought to have

well-defined house owners. This info can be utilized to assist direct points

to the fitting place, and to individuals who can successfully resolve them.

Implementing a developer portal which gives a software program providers

catalog with clearly outlined staff possession helps with inside

communication patterns.

Automate handbook resilience processes (inside a timebox)

Sure resilience issues which have been solved by hand may be

automated: actions like restarting a service, including new cases or

restoring database backups. Many actions are simply automated or just

require a configuration change inside your cloud service supplier.

Whereas within the bottleneck, implementing these capabilities may give the

staff the reduction it wants, offering a lot wanted respiratory room and

time to unravel the foundation trigger(s).

Ensure that to maintain these implementations at their easiest and

timeboxed (couple of days at max). Keep in mind these began out as

bandaids, and automating them is simply one other (albeit higher) kind of

bandaid. Combine these into your monitoring resolution, permitting you

to stay conscious of how often your system is mechanically recovering and the way lengthy it

takes. On the identical time, these metrics can help you prioritize

shifting away from reliance on these bandaid options and make your

entire system extra sturdy.

Enhance imply time to revive with observability and monitoring

To work your approach out of a bottleneck, it’s essential to perceive your

present state so you can also make efficient selections about the place to speculate.

If you wish to be 5 nines, however haven’t any sense of what number of nines are

truly presently supplied, then it’s arduous to even know what path you

ought to be taking.

To know the place you’re, it’s essential to put money into observability.

Observability means that you can be extra proactive in timing funding in

resilience earlier than it turns into unmanageable.

Centralize your logs to be viewable by means of a single interface

Combination logs from core providers and programs to be accessible

by means of a central interface. This may preserve them accessible to

a number of eyes simply and scale back troubleshooting efforts (doubtlessly

enhancing imply time to restoration).

Outline a transparent structured format for log messages

Anybody who’s needed to parse by means of aggregated log messages can inform

you that when a number of providers comply with differing log buildings it’s

an unbelievable mess to search out something. Each service simply finally ends up

talking its personal language, and solely the unique authors perceive

the logs. Ideally, as soon as these logs are aggregated, anybody from

builders to assist groups ought to be capable of perceive the logs, no

matter their origin.

Construction the log messages utilizing an organization-wide standardized

format. Most logging instruments assist a JSON format as a typical, which

permits the log message construction to include metadata like timestamp,

severity, service and/or correlation-id. And with log administration

providers (by means of an observability platform), one can filter and search throughout these

properties to assist debug bottleneck points. To assist make search extra

environment friendly, choose fewer log messages with extra fields containing

pertinent info over many messages with a small variety of

fields. The precise messages themselves should be distinctive to a

particular service, however the attributes related to the log message

are useful to everybody.

Deal with your log messages as a key piece of knowledge that’s

seen to extra than simply the builders that wrote them. Your assist staff can

grow to be more practical when debugging preliminary buyer queries, as a result of

they’ll perceive the construction they’re viewing. If each service

can communicate the identical language, the barrier to offer assist and

debugging help is eliminated.

Add observability that’s near your buyer expertise

What will get measured will get managed.

— Peter Drucker

Although infrastructure metrics and repair message logs are

helpful, they’re pretty low stage and don’t present any context of

the precise buyer expertise. Alternatively, buyer

notifications are a direct indication of a difficulty, however they’re

often anecdotal and don’t present a lot by way of sample (until

you place within the work to search out one).

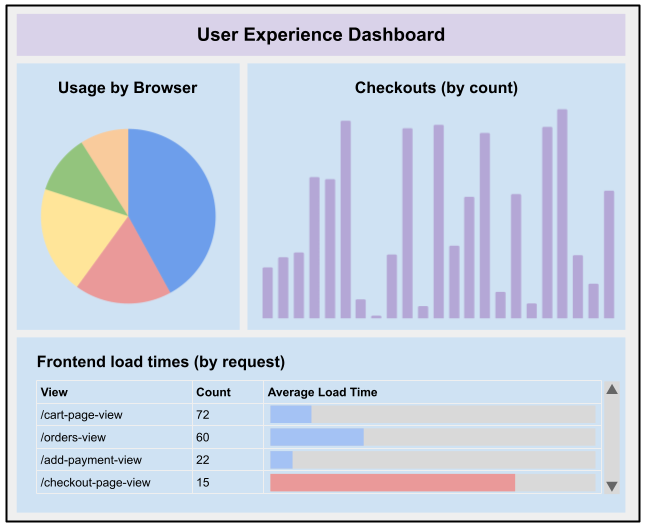

Monitoring core enterprise metrics permits groups to watch a

buyer’s expertise. Sometimes outlined by means of the product’s

necessities and options, they supply excessive stage context round

many buyer experiences. These are metrics like accomplished

transactions, begin and cease charge of a video, API utilization or response

time metrics. Implicit metrics are additionally helpful in measuring a

buyer’s experiences, like frontend load time or search response

time. It is essential to match what’s being noticed straight

to how a buyer is experiencing your product. Additionally

vital to notice, metrics aligned to the client expertise grow to be

much more vital in a B2B setting, the place you won’t have

the quantity of knowledge factors vital to pay attention to buyer points

when solely measuring particular person elements of a system.

At one consumer, providers began to publish area occasions that

had been associated to the product expertise: occasions like added to cart,

failed so as to add to cart, transaction accomplished, cost authorised, and so forth.

These occasions may then be picked up by an observability platform (like

Splunk, ELK or Datadog) and displayed on a dashboard, categorized and

analyzed even additional. Errors could possibly be captured and categorized, permitting

higher drawback fixing on errors associated to surprising buyer

expertise.

Determine 2:

Instance of what a dashboard specializing in the consumer expertise may appear to be

Information gathered by means of core enterprise metrics may also help you perceive

not solely what is likely to be failing, however the place your system thresholds are and

the way it manages when it’s outdoors of that. This provides additional perception into

the way you would possibly get by means of the bottleneck.

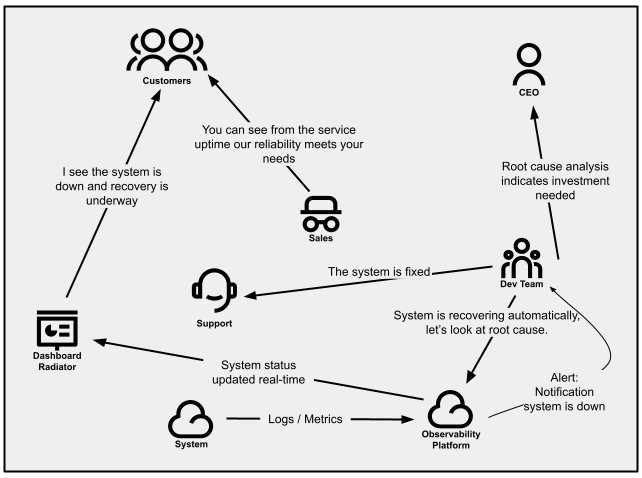

Present product standing perception to prospects utilizing standing indicators

It may be troublesome to handle incoming buyer inquiries of

completely different points they’re going through, with assist providers shortly discovering

they’re combating fireplace after fireplace. Managing difficulty quantity may be essential

to a startup’s success, however throughout the bottleneck, it’s essential to search for

systemic methods of decreasing that site visitors. The power to divert name

site visitors away from assist will give some respiratory room and a greater probability to

clear up the fitting drawback.

Service standing indicators can present prospects the data they’re

searching for with out having to achieve out to assist. This might are available

the type of public dashboards, electronic mail messages, and even tweets. These can

leverage backend service well being and readiness checks, or a mix

of metrics to find out service availability, degradation, and outages.

Throughout instances of incidents, standing indicators can present a approach of updating

many shoppers directly about your product’s standing.

Constructing belief together with your prospects is simply as vital as making a

dependable and resilient service. Offering strategies for purchasers to grasp

the providers’ standing and anticipated decision timeframe helps construct

confidence by means of transparency, whereas additionally giving the assist workers

the house to problem-solve.

Determine 3:

Communication patterns inside a company that proactively manages how prospects are notified.

Shift to express resilience enterprise necessities

As a startup, new options are sometimes thought-about extra helpful

than technical debt, together with any work associated to resilience. And as said

earlier than, this definitely made sense initially. New options and

enhancements assist preserve prospects and herald new ones. The work to

present new capabilities ought to, in concept, result in a rise in

income.

This doesn’t essentially maintain true as your group

grows and discovers new challenges to rising income. Failures of

resilience are one supply of such challenges. To maneuver past this, there

must be a shift in the way you worth the resilience of your product.

Perceive the prices of service failure

For a startup, the implications of not hitting a income goal

this ‘quarter’ is likely to be completely different than for a scaleup or a mature

product. However as typically occurs, the preliminary “new options are extra

helpful than technical debt” determination turns into a everlasting fixture within the

organizational tradition – whether or not the precise income impression is provable

or not; and even calculated. A side of the maturity wanted when

shifting from startup to scaleup is within the data-driven ingredient of

decision-making. Is the group monitoring the worth of each new

function shipped? And is the group analyzing the operational

investments as contributing to new income reasonably than only a

cost-center? And are the prices of an outage or recurring outages recognized

each by way of wasted inside labor hours in addition to misplaced income?

As a startup, in most of those regards, you have obtained nothing to lose.

However this isn’t true as you develop.

Subsequently, it’s vital to begin analyzing the prices of service

failures as a part of your general product administration and income

recognition worth stream. Understanding your income “velocity” will

present a simple approach to quantify the direct cost-per-minute of

downtime. Monitoring the prices to the staff for everybody concerned in an

outage incident, from buyer assist calls to builders to administration

to public relations/advertising and even to gross sales, may be an eye-opening expertise.

Add on the chance prices of coping with an outage reasonably than

increasing buyer outreach or delivering new options and the true

scope and impression of failures in resilience grow to be obvious.

Handle resilience as a function

Begin treating resilience as greater than only a technical

expectation. It’s a core function that prospects will come to anticipate.

And since they anticipate it, it ought to grow to be a firstclass

consideration amongst different options. A part of this evolution is about shifting the place the

accountability lies. As a substitute of it being purely a accountability for

tech, it’s one for product and the enterprise. A number of layers inside

the group might want to contemplate resilience a precedence. This

demonstrates that resilience will get the identical quantity of consideration that

another function would get.

Shut collaboration between

the product and know-how is significant to be sure to’re capable of

set the right expectations throughout story definition, implementation

and communication to different elements of the group. Resilience,

although a core function, remains to be invisible to the client (in contrast to new

options like additions to a UI or API). These two teams have to

collaborate to make sure resilience is prioritized appropriately and

carried out successfully.

The target right here is shifting resilience from being a reactionary

concern to a proactive one. And in case your groups are capable of be

proactive, you can even react extra appropriately when one thing

important is going on to what you are promoting.

Necessities ought to replicate sensible expectations

Realizing sensible expectations for resilience relative to

necessities and buyer expectations is vital to maintaining your

engineering efforts price efficient. Completely different ranges of resilience, as

measured by uptime and availability, have vastly completely different prices. The

price distinction between “three nines” and “4 nines” of availability

(99.9% vs 99.99%) could also be an element of 10x.

It’s vital to grasp your buyer necessities for every

enterprise functionality. Do you and your prospects anticipate a 24x7x365

expertise? The place are your prospects

primarily based? Are they native to a selected area or are they world?

Are they primarily consuming your service by way of cell units, or are

your prospects built-in by way of your public API? For instance, it’s an

ineffective use of capital to offer 99.999% uptime on a service delivered by way of

cell units which solely take pleasure in 99.9% uptime on account of mobile phone

reliability limits.

These are vital inquiries to ask

when fascinated with resilience, since you don’t wish to pay for the

implementation of a stage of resiliency that has no perceived buyer

worth. Additionally they assist to set and handle

expectations for the product being constructed, the staff constructing and

sustaining it, the oldsters in your group promoting it and the

prospects utilizing it.

Really feel out your issues first and keep away from overengineering

If you happen to’re fixing resiliency issues by hand, your first intuition

is likely to be to simply automate it. Why not, proper? Although it could assist, it is most

efficient when the implementation is time-boxed to a really brief interval

(a few days at max). Spending extra time will doubtless result in

overengineering in an space that was truly only a symptom.

A considerable amount of time, power and cash might be invested into one thing that’s

simply one other bandaid and almost definitely shouldn’t be sustainable, and even worse,

causes its personal set of second-order challenges.

As a substitute of going straight to a tactical resolution, that is an

alternative to essentially really feel out your drawback: The place do the fault strains

exist, what’s your observability attempting to inform you, and what design

selections correlate to those failures. You might be able to uncover these

fault strains by means of stress, chaos or exploratory testing. Use this

alternative to your benefit to find different system stress factors

and decide the place you will get the most important worth in your funding.

As what you are promoting grows and scales, it’s vital to re-evaluate

previous selections. What made sense in the course of the startup section might not get

you thru the hypergrowth phases.

Leverage a number of methods when gathering necessities

Gathering necessities for technically oriented options

may be troublesome. Product managers or enterprise analysts who aren’t

versed within the nomenclature of resilience can discover it arduous to

perceive. This typically interprets into obscure necessities like “Make x service

extra resilient” or “100% uptime is our purpose”. The necessities you outline are as

vital because the ensuing implementations. There are numerous methods

that may assist us collect these necessities.

Strive working a pre-mortem earlier than writing necessities. On this

light-weight exercise, people in numerous roles give their

views about what they assume may fail, or what’s failing. A

pre-mortem gives helpful insights into how people understand

potential causes of failure, and the associated prices. The following

dialogue helps prioritize issues that should be made resilient,

earlier than any failure happens. At a minimal, you may create new check

situations to additional validate system resilience.

Another choice is to jot down necessities alongside tech leads and

structure SMEs. The accountability to create an efficient resilient system

is now shared amongst leaders on the staff, and every can communicate to

completely different elements of the design.

These two methods present that necessities gathering for

resilience options isn’t a single accountability. It ought to be shared

throughout completely different roles inside a staff. All through each method you

strive, bear in mind who ought to be concerned and the views they convey.

Evolve your structure and infrastructure to satisfy resiliency wants

For a startup, the design of the structure is dictated by the

pace at which you will get to market. That usually means the design that

labored at first can grow to be a bottleneck in your transition to scaleup.

Your product’s resilience will in the end come right down to the know-how

selections you make. It could imply analyzing your general design and

structure of the system and evolving it to satisfy the product

resilience wants. A lot of what we spoke to earlier may also help offer you

information factors and slack throughout the bottleneck. Inside that house, you may

evolve the structure and incorporate patterns that allow a very

resilient product.

Broadly have a look at your structure and decide applicable trade-offs

Both implicitly or explicitly, when the preliminary structure was

created, trade-offs had been made. Throughout the experimentation and gaining

traction phases of a startup, there’s a excessive diploma of deal with

getting one thing to market shortly, maintaining growth prices low,

and having the ability to simply modify or pivot product path. The

trade-off is sacrificing the advantages of resilience

that may come out of your preferrred structure.

Take an API backed by Capabilities as a Service (FaaS). This strategy is a good way to

create one thing with little to no administration of the infrastructure it

runs on, doubtlessly ticking all three packing containers of our focus space. On the

different hand, it is restricted primarily based on the infrastructure it’s allowed to

run on, timing constraints of the service and the potential

communication complexity between many various capabilities. Although not

unachievable, the constraints of the structure might make it

troublesome or complicated to realize the resilience your product wants.

Because the product and group grows and matures, its constraints

additionally evolve. It’s vital to acknowledge that early design selections

might not be applicable to the present working setting, and

consequently new architectures and applied sciences should be launched.

If not addressed, the trade-offs made early on will solely amplify the

bottleneck throughout the hypergrowth section.

Improve resilience with efficient error restoration methods

Information gathered from displays can present the place excessive failure

charges are coming from, be it third-party integrations, backed-up queues,

backoffs or others. This information can drive selections on what are

applicable restoration methods to implement.

Use caching the place applicable

When retrieving info, caching methods may also help in two

methods. Primarily, they can be utilized to cut back the load on the service by

offering cached outcomes for a similar queries. Caching can be

used because the fallback response when a backend service fails to return

efficiently.

The trade-off is doubtlessly serving stale information to prospects, so

be certain that your use case shouldn’t be delicate to stale information. For instance,

you wouldn’t wish to use cached outcomes for real-time inventory worth

queries.

Use default responses the place applicable

As an alternative choice to caching, which gives the final recognized

response for a question, it’s potential to offer a static default worth

when the backend service fails to return efficiently. For instance,

offering retail pricing because the fallback response for a pricing

low cost service will do no hurt whether it is higher to danger dropping a sale

reasonably than danger dropping cash on a transaction.

Use retry methods for mutation requests

The place a consumer is looking a service to impact a change within the information,

the use case might require a profitable request earlier than continuing. In

this case, retrying the decision could also be applicable so as to decrease

how typically error administration processes should be employed.

There are some vital trade-offs to contemplate. Retries with out

delays danger inflicting a storm of requests which carry the entire system

down beneath the load. Utilizing an exponential backoff delay mitigates the

danger of site visitors load, however as a substitute ties up connection sockets ready

for a long-running request, which causes a unique set of

failures.

Use idempotency to simplify error restoration

Shoppers implementing any kind of retry technique will doubtlessly

generate a number of equivalent requests. Make sure the service can deal with

a number of equivalent mutation requests, and also can deal with resuming a

multi-step workflow from the purpose of failure.

Design enterprise applicable failure modes

In a system, failure is a given and your purpose is to guard the tip

consumer expertise as a lot as potential. Particularly in circumstances which can be

supported by downstream providers, you might be able to anticipate

failures (by means of observability) and supply an alternate circulate. Your

underlying providers that leverage these integrations may be designed

with enterprise applicable failure modes.

Contemplate an ecommerce system supported by a microservice

structure. Ought to downstream providers supporting the ordering

perform grow to be overwhelmed, it could be extra applicable to

quickly disable the order button and current a restricted error

message to a buyer. Whereas this gives clear suggestions to the consumer,

Product Managers involved with gross sales conversions would possibly as a substitute permit

for orders to be captured and alert the client to a delay so as

affirmation.

Failure modes ought to be embedded into upstream programs, in order to make sure

enterprise continuity and buyer satisfaction. Relying in your

structure, this would possibly contain your CDN or API gateway returning

cached responses if requests are overloading your subsystems. Or as

described above, your system would possibly present for an alternate path to

eventual consistency for particular failure modes. It is a much more

efficient and buyer targeted strategy than the presentation of a

generic error web page that conveys ‘one thing has gone incorrect’.

Resolve single factors of failure

A single service can simply go from managing a single

accountability of the product to a number of. For a startup, appending to

an present service is usually the best strategy, because the

infrastructure and deployment path is already solved. Nonetheless,

providers can simply bloat and grow to be a monolith, creating some extent of

failure that may carry down many or all elements of the product. In circumstances

like this, you may want to grasp methods to separate up the structure,

whereas additionally maintaining the product as a complete practical.

At a fintech consumer, throughout a hyper-growth interval, load

on their monolithic system would spike wildly. As a result of monolithic

nature, the entire capabilities had been introduced down concurrently,

leading to misplaced income and sad prospects. The long-term

resolution was to begin splitting the monolith into a number of separate

providers that could possibly be scaled horizontally. As well as, they

launched occasion queues, so transactions had been by no means misplaced.

Implementing a microservice strategy shouldn’t be a easy and easy

activity, and does take effort and time. Begin by defining a site that

requires a resiliency enhance, and extract it is capabilities piece by piece.

Roll out the brand new service, regulate infrastructure configuration as wanted (enhance

provisioned capability, implement auto scaling, and so forth) and monitor it.

Be certain that the consumer journey hasn’t been affected, and resilience as

a complete has improved. As soon as stability is achieved, proceed to iterate over

every functionality within the area. As famous within the consumer instance, that is

additionally a possibility to introduce architectural parts that assist enhance

the overall resilience of your system. Occasion queues, circuit breakers, bulkheads and

anti-corruption layers are all helpful architectural elements that

enhance the general reliability of the system.

Regularly optimize your resilience

It is one factor to get by means of the bottleneck, it is one other to remain

out of it. As you develop, your system resiliency might be frequently

examined. New options lead to new pathways for elevated system load.

Architectural modifications introduces unknown system stability. Your

group might want to keep forward of what’s going to ultimately come. Because it

matures and grows, so ought to your funding into resilience.

Recurrently chaos check to validate system resilience

Chaos engineering is the bedrock of really resilient merchandise. The

core worth is the flexibility to generate failure in ways in which you would possibly

by no means consider. And whereas that chaos is creating failures, working

by means of consumer situations on the identical time helps to grasp the consumer

expertise. This will present confidence that your system can face up to

surprising chaos. On the identical time, it identifies which consumer

experiences are impacted by system failures, giving context on what to

enhance subsequent.

Although you might really feel extra snug testing towards a dev or QA

setting, the worth of chaos testing comes from manufacturing or

production-like environments. The purpose is to grasp how resilient

the system is within the face of chaos. Early environments are (often)

not provisioned with the identical configurations present in manufacturing, thus

won’t present the arrogance wanted. Working a check like

this in manufacturing may be daunting, so be sure to have faith in

your capacity to revive service. This implies your complete system may be

spun again up and information may be restored if wanted, all by means of automation.

Begin with small comprehensible situations that may give helpful information.

As you acquire expertise and confidence, think about using your load/efficiency

checks to simulate customers whilst you execute your chaos testing. Guarantee groups and

stakeholders are conscious that an experiment is about to be run, in order that they

are ready to watch (in case issues go incorrect). Frameworks like

Litmus or Gremlin can present construction to chaos engineering. As

confidence and maturity in your resilience grows, you can begin to run

experiments the place groups aren’t alerted beforehand.

Recruit specialists with information of resilience at scale

Hiring generalists when constructing and delivering an preliminary product

is sensible. Money and time are extremely helpful, so having

generalists gives the pliability to make sure you will get out to

market shortly and never eat away on the preliminary funding. Nonetheless,

the groups have taken on greater than they’ll deal with and as your product

scales, what was as soon as ok is not the case. A barely

unstable system that made it to market will proceed to get extra

unstable as you scale, as a result of the abilities required to handle it have

overtaken the abilities of the present staff. In the identical vein as

technical

debt,

this is usually a slippery slope and if not addressed, the issue will

proceed to compound.

To maintain the resilience of your product, you’ll have to recruit

for that experience to deal with that functionality. Consultants herald a

contemporary view on the system in place, together with their capacity to

establish gaps and areas for enchancment. Their previous experiences can

have a two-fold impact on the staff, offering a lot wanted steering in

areas that sorely want it, and an additional funding within the progress of

your workers.

All the time keep or enhance your reliability

In 2021, the State of Devops report expanded the fifth key metric from availability to reliability.

Underneath operational efficiency, it asserts a product’s capacity to

retain its guarantees. Resilience ties straight into this, because it’s a

key enterprise functionality that may guarantee your reliability.

With many organizations pushing extra often to manufacturing,

there must be assurances that reliability stays the identical or will get higher.

Together with your observability and monitoring in place, guarantee what it

tells you matches what your service stage aims (SLOs) state. With each deployment to

manufacturing, the displays shouldn’t deviate from what your SLAs

assure. Sure deployment buildings, like blue/inexperienced or canary

(to some extent), may also help to validate the modifications earlier than being

launched to a large viewers. Working checks successfully in manufacturing

can enhance confidence that your agreements haven’t swayed and

resilience has remained the identical or higher.

Resilience and observability as your group grows

Section 1

Experimenting

Prototype options, with hyper deal with getting a product to market shortly

Section 2

Getting Traction

Resilience and observability are manually carried out by way of developer intervention

Prioritization for fixing resilience primarily comes from technical debt

Dashboards replicate low stage providers statistics like CPU and RAM

Majority of assist points are available by way of calls or textual content messages from prospects

Section 3

(Hyper) Progress

Resilience is a core function delivered to prospects, prioritized in the identical vein as options

Observability is ready to replicate the general buyer expertise, mirrored by means of dashboards and monitoring

Re-architect or recreate problematic providers, enhancing the resilience within the course of

Section 4

Optimizing

Platforms evolve from inside going through providers, productizing observability and compute environments

Run periodic chaos engineering workout routines, with little to no discover

Increase groups with engineers which can be versed in resilience at scale

Abstract

As a scaleup, what determines your capacity to successfully navigate the

hyper(progress) section is partially tied to the resilience of your

product. The excessive progress charge begins to place stress on a system that was

developed in the course of the startup section, and failure to handle the resilience of

that system typically leads to a bottleneck.

To attenuate danger, resilience must be handled as a first-class citizen.

The main points might differ in keeping with your context, however at a excessive stage the

following issues may be efficient:

- Resilience is a key function of your product. It’s not only a

technical element, however a key element that your prospects will come to anticipate,

shifting the corporate in the direction of a proactive strategy. - Construct buyer standing indicators to assist divert some assist requests,

permitting respiratory room in your staff to unravel the vital issues. - The shopper expertise ought to be mirrored inside your observability stack.

Monitor core enterprise metrics that replicate experiences your prospects have. - Perceive what your dashboards and displays are telling you, to get a way

of what are essentially the most vital areas to unravel. - Evolve your structure to satisfy your resiliency objectives as you establish

particular challenges. Preliminary designs may go at small scale however grow to be

more and more limiting as you transition to a scaleup. - When architecting failure modes, discover methods to fail which can be pleasant to the

shopper, serving to to make sure continuity and buyer satisfaction. - Outline sensible resilience expectations in your product, and perceive the

limitations with which it’s being served. Use this data to offer your

prospects with efficient SLAs and cheap SLOs. - Optimize your resilience whenever you’re by means of the bottleneck. Make chaos

engineering a part of an everyday observe or recruiting specialists.

Efficiently incorporating these practices leads to a future group

the place resilience is constructed into enterprise aims, throughout all dimensions of

folks, course of, and know-how.