Picture by Creator

Diffusers is a Python library developed and maintained by HuggingFace. It simplifies the event and inference of Diffusion fashions for producing pictures from user-defined prompts. The code is brazenly accessible on GitHub with 22.4k stars on the repository. HuggingFace additionally maintains all kinds of Steady DIffusion and varied different diffusion fashions could be simply used with their library.

Set up and Setup

It’s good to begin with a contemporary Python surroundings to keep away from clashes between library variations and dependencies.

To arrange a contemporary Python surroundings, run the next instructions:

python3 -m venv venv

supply venv/bin/activate

Putting in the Diffusers library is easy. It’s offered as an official pip bundle and internally makes use of the PyTorch library. As well as, a number of diffusion fashions are primarily based on the Transformers structure so loading a mannequin would require the transformers pip bundle as nicely.

pip set up 'diffusers[torch]' transformers

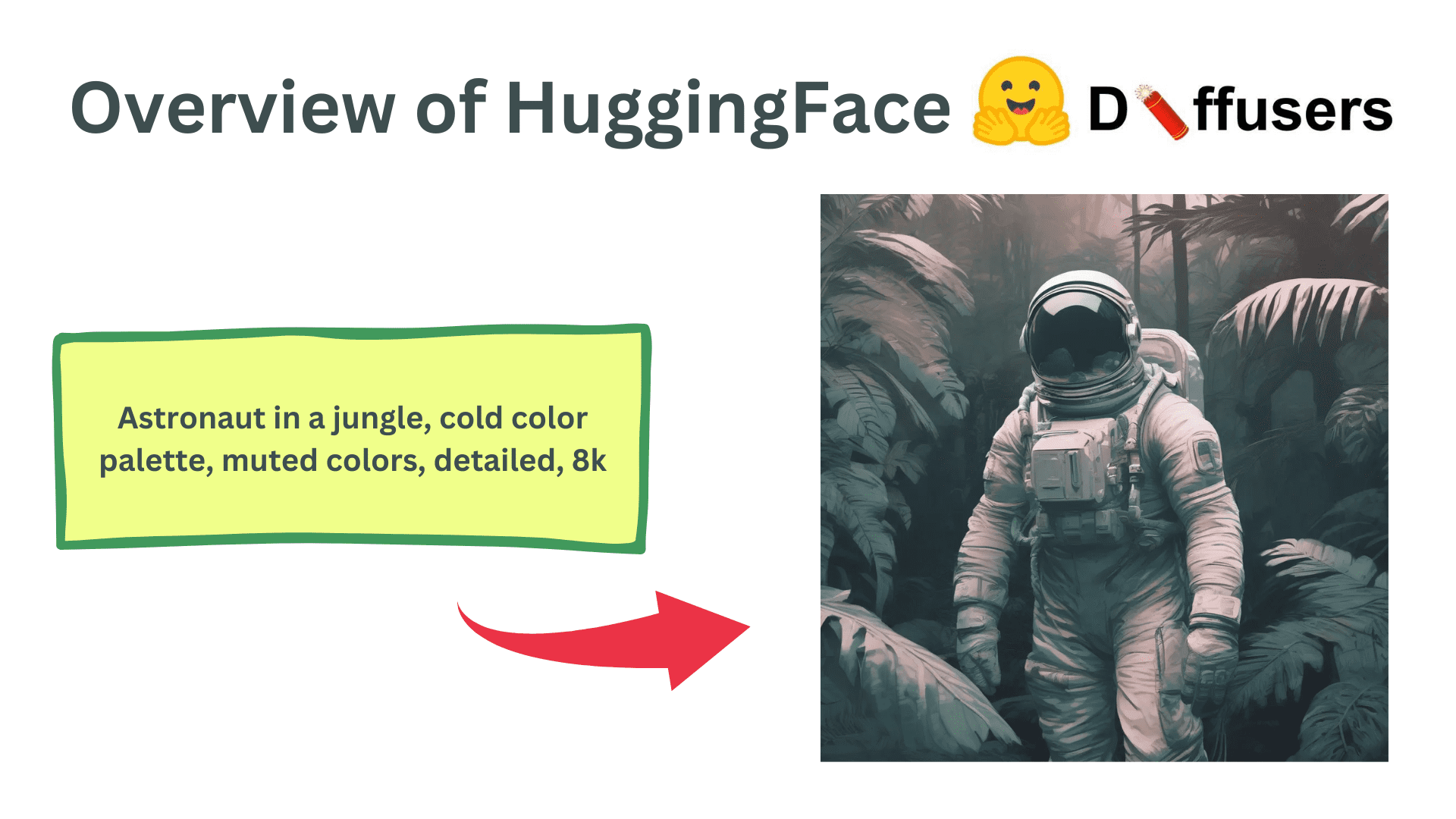

Utilizing Diffusers for AI-Generated Pictures

The diffuser library makes it extraordinarily straightforward to generate pictures from a immediate utilizing steady diffusion fashions. Right here, we’ll undergo a easy code line by line to see totally different components of the Diffusers library.

Imports

import torch

from diffusers import AutoPipelineForText2Image

The torch bundle can be required for the overall setup and configuration of the diffuser pipeline. The AutoPipelineForText2Image is a category that routinely identifies the mannequin that’s being loaded, for instance, StableDiffusion1-5, StableDiffusion2.1, or SDXL, and hundreds the suitable lessons and modules internally. This protects us from the effort of adjusting the pipeline every time we need to load a brand new mannequin.

Loading the Fashions

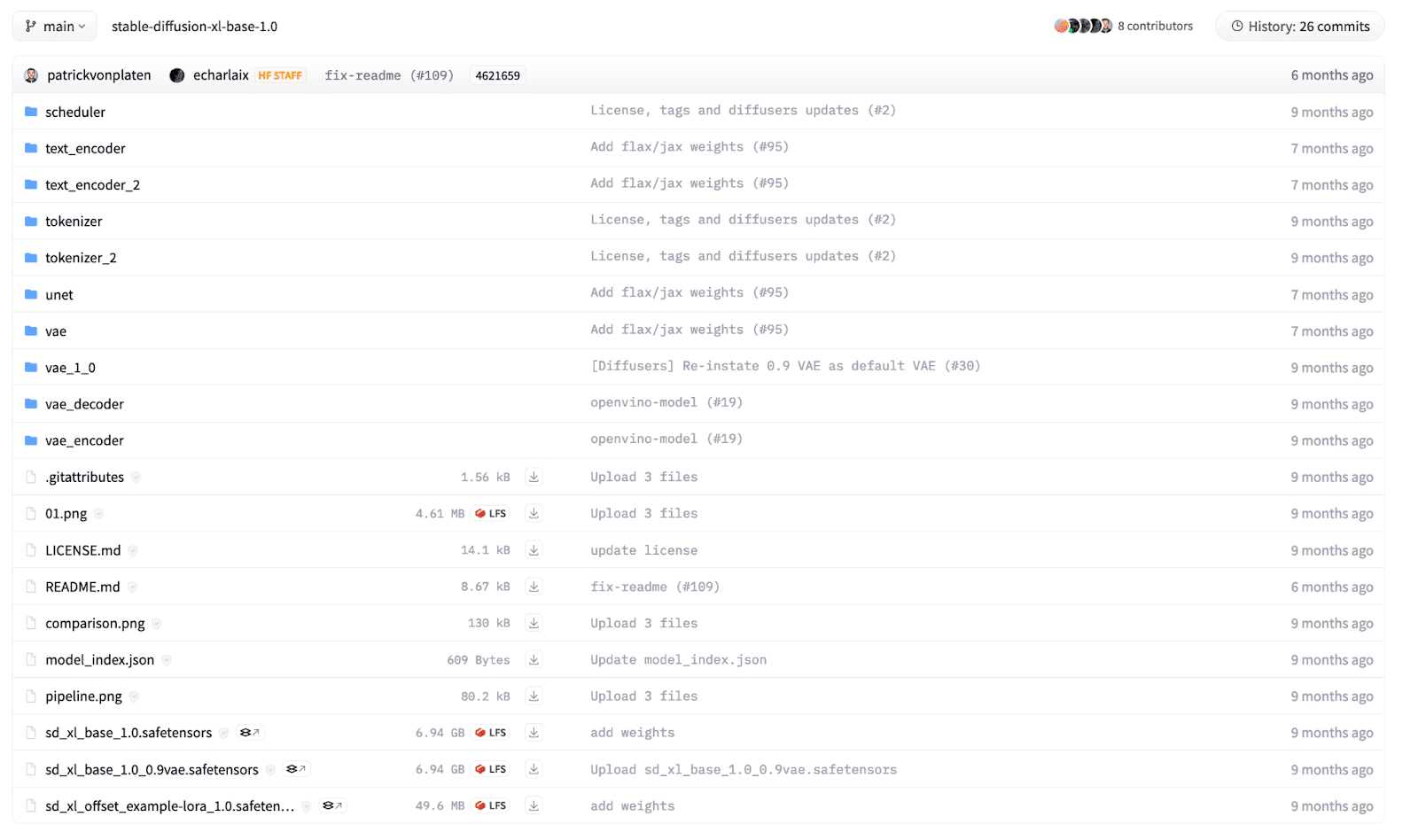

A diffusion mannequin consists of a number of elements, together with however not restricted to Textual content Encoder, UNet, Schedulers, and Variational AutoEncoder. We will individually load the modules however the diffusers library supplies a builder methodology that may load a pre-trained mannequin given a structured checkpoint listing. For a newbie, it could be tough to know which pipeline to make use of, so AutoPipeline makes it simpler to load a mannequin for a particular activity.

On this instance, we’ll load an SDXL mannequin that’s brazenly accessible on HuggingFace, skilled by Stability AI. The recordsdata within the listing are structured in keeping with their names and every listing has its personal safetensors file. The listing construction for the SDXL mannequin appears as beneath:

To load the mannequin in our code, we use the AutoPipelineForText2Image class and name the from_pretrained operate.

pipeline = AutoPipelineForText2Image.from_pretrained(

"stability/stable-diffusion-xl-base-1.0",

torch_dtype=torch.float32 # Float32 for CPU, Float16 for GPU,

)

We offer the mannequin path as the primary argument. It may be the HuggingFace mannequin card title as above or an area listing the place you’ve the mannequin downloaded beforehand. Furthermore, we outline the mannequin weights precisions as a key phrase argument. We usually use 32-bit floating-point precision when we have now to run the mannequin on a CPU. Nevertheless, operating a diffusion mannequin is computationally costly, and operating an inference on a CPU gadget will take hours! For GPU, we both use 16-bit or 32-bit knowledge varieties however 16-bit is preferable because it makes use of decrease GPU reminiscence.

The above command will obtain the mannequin from HuggingFace and it will probably take time relying in your web connection. Mannequin sizes can fluctuate from 1GB to over 10GBs.

As soon as a mannequin is loaded, we might want to transfer the mannequin to the suitable {hardware} gadget. Use the next code to maneuver the mannequin to CPU or GPU. Observe, for Apple Silicon chips, transfer the mannequin to an MPS gadget to leverage the GPU on MacOS gadgets.

# "mps" if on M1/M2 MacOS Machine

DEVICE = "cuda" if torch.cuda.is_available() else "cpu"

pipeline.to(DEVICE)

Inference

Now, we’re able to generate pictures from textual prompts utilizing the loaded diffusion mannequin. We will run an inference utilizing the beneath code:

immediate = "Astronaut in a jungle, chilly coloration palette, muted colours, detailed, 8k"

outcomes = pipeline(

immediate=immediate,

peak=1024,

width=1024,

num_inference_steps=20,

)

We will use the pipeline object and name it with a number of key phrase arguments to regulate the generated pictures. We outline a immediate as a string parameter describing the picture we need to generate. Additionally, we will outline the peak and width of the generated picture however it needs to be in multiples of 8 or 16 because of the underlying transformer structure. As well as, the whole inference steps could be tuned to regulate the ultimate picture high quality. Extra denoising steps end in higher-quality pictures however take longer to generate.

Lastly, the pipeline returns an inventory of generated pictures. We will entry the primary picture from the array and might manipulate it as a Pillow picture to both save or present the picture.

img = outcomes.pictures[0]

img.save('outcome.png')

img # To point out the picture in Jupyter pocket book

Generated Picture

Advance Makes use of

The text-2-image instance is only a fundamental tutorial to focus on the underlying utilization of the Diffusers library. It additionally supplies a number of different functionalities together with Picture-2-image technology, inpainting, outpainting, and control-nets. As well as, they supply advantageous management over every module within the diffusion mannequin. They can be utilized as small constructing blocks that may be seamlessly built-in to create your customized diffusion pipelines. Furthermore, in addition they present further performance to coach diffusion fashions by yourself datasets and use circumstances.

Wrapping Up

On this article, we went over the fundamentals of the Diffusers library and the right way to make a easy inference utilizing a Diffusion mannequin. It is without doubt one of the most used Generative AI pipelines through which options and modifications are made day by day. There are a number of totally different use circumstances and options you’ll be able to attempt to the HuggingFace documentation and GitHub code is the very best place so that you can get began.

Kanwal Mehreen Kanwal is a machine studying engineer and a technical author with a profound ardour for knowledge science and the intersection of AI with medication. She co-authored the book “Maximizing Productiveness with ChatGPT”. As a Google Technology Scholar 2022 for APAC, she champions variety and educational excellence. She’s additionally acknowledged as a Teradata Range in Tech Scholar, Mitacs Globalink Analysis Scholar, and Harvard WeCode Scholar. Kanwal is an ardent advocate for change, having based FEMCodes to empower ladies in STEM fields.