Whatnot is a venture-backed e-commerce startup built for the streaming age. We’ve built a live video marketplace for collectors, fashion enthusiasts, and superfans that allows sellers to go live and sell anything they’d like through our video auction platform. Think eBay meets Twitch.

Coveted collectibles were the first items on our livestream when we launched in 2020. Today, through live shopping videos, sellers offer products in more than 100 categories, from Pokemon and baseball cards to sneakers, antique coins and much more.

Crucial to Whatnot’s success is connecting communities of buyers and sellers through our platform. It gathers signals in real time from our audience: the videos they’re watching, the comments and social interactions they’re leaving, and the products they’re buying. We analyze this data to rank the most popular and relevant videos, which we then present to users on the home screen of Whatnot’s mobile app or website.

However, to maintain and increase our growth, we needed to take our home feed to the next level: ranking our show suggestions to each user based on the most interesting and relevant content in real time.

This would require an increase in the amount and variety of data we would need to ingest and analyze, all of it in real time. To support this, we sought a platform where data science and machine learning professionals could iterate quickly and deploy to production faster while maintaining low-latency, high-concurrency workloads.

High Cost of Running Elasticsearch

On the surface, our legacy data pipeline appeared to be performing well and was built upon the most modern of components. This included AWS-hosted Elasticsearch to do the retrieval and ranking of content using batch features loaded on ingestion. This process returned a single query in tens of milliseconds, with concurrency rates topping out at 50-100 queries per second.

However, we have plans to grow usage 5-10x in the next year. This would come from a combination of expanding into much larger product categories and boosting the intelligence of our recommendation engine.

The bigger pain point was the high operational overhead of Elasticsearch for our small team. This was draining productivity and severely limiting our ability to improve the intelligence of our recommendation engine to keep up with our growth.

Say we wanted to add a new user signal to our analytics pipeline. Using our previous serving infrastructure, the data would have to be sent through Confluent-hosted instances of Apache Kafka and ksqlDB and then denormalized and/or rolled up. Then, a specific Elasticsearch index would have to be manually adjusted or built for that data. Only then could we query the data. The entire process took weeks.

Just maintaining our existing queries was also a huge effort. Our data changes frequently, so we were constantly upserting new data into existing tables. That required a time-consuming update to the relevant Elasticsearch index every time. And after every Elasticsearch index was created or updated, we had to manually test and update every other component in our data pipeline to make sure we had not created bottlenecks, introduced data errors, etc.

Solving for Efficiency, Performance, and Scalability

Our new real-time analytics platform would be core to our growth strategy, so we carefully evaluated many options.

We designed a data pipeline using Airflow to pull data from Snowflake and push it into one of our OLTP databases that serves the Elasticsearch-powered feed, optionally with a cache in front. It was possible to schedule this job to run at 5-, 10-, or 20-minute intervals, but with the additional latency we were unable to meet our SLAs, while the technical complexity reduced our desired developer velocity.

So we evaluated many real-time alternatives to Elasticsearch, including Rockset, Materialize, Apache Druid and Apache Pinot. Every one of these SQL-first platforms met our requirements, but we were looking for a partner that could take on the operational overhead as well.

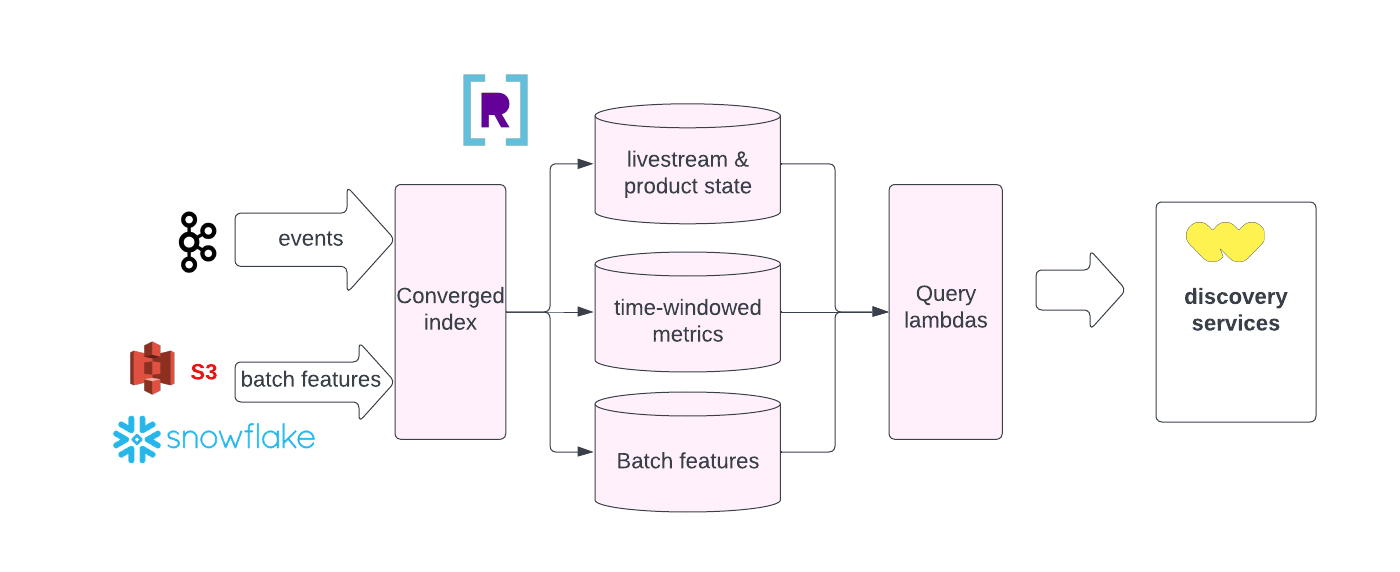

In the end, we deployed Rockset over these other options because it had the best blend of features to underpin our growth: a fully-managed, developer-enhancing platform with real-time ingestion and query speeds, high concurrency and automatic scalability.

Let’s look at our highest priority, developer productivity, which Rockset turbocharges in several ways. With Rockset’s Converged Index™ feature, all fields, including nested ones, are indexed, which ensures that queries are automatically optimized, running fast no matter the type of query or the structure of the data. We no longer have to worry about the time and labor of building and maintaining indexes, as we had to with Elasticsearch. Rockset also makes SQL a first-class citizen, which is great for our data scientists and machine learning engineers. It offers a full menu of SQL commands, including four kinds of joins, searches and aggregations. Such complex analytics were harder to perform using Elasticsearch.

With Rockset, we have a much faster development workflow. When we need to add a new user signal or data source to our ranking engine, we can join this new dataset without having to denormalize it first. If the feature is working as intended and the performance is good, we can finalize it and put it into production within days. If the latency is high, then we can consider denormalizing the data or doing some precalculations in KSQL first. Either way, this slashes our time-to-ship from weeks to days.
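To illustrate the idea of joining a new signal at query time rather than denormalizing it first, here is a minimal in-memory sketch. It does not use Rockset or any real API: the show records, the `follower_counts` signal, and the `follower_weight` scoring weight are all hypothetical, and the loop only stands in for what would be a SQL join between an existing collection and a newly added one.

```python
from typing import Dict, List

# Existing ranking data: one record per live show (hypothetical fields).
shows: List[dict] = [
    {"show_id": "s1", "seller_id": "a", "viewers": 120},
    {"show_id": "s2", "seller_id": "b", "viewers": 300},
    {"show_id": "s3", "seller_id": "c", "viewers": 80},
]

# Newly added signal, kept in its own dataset instead of being
# denormalized into every show record (hypothetical: followers per seller).
follower_counts: Dict[str, int] = {"a": 5000, "b": 100}


def rank_shows(shows: List[dict],
               follower_counts: Dict[str, int],
               follower_weight: float = 0.05) -> List[str]:
    """Join each show with the new signal at query time, then rank."""
    scored = []
    for show in shows:
        # Left join: sellers missing from the new dataset default to 0.
        followers = follower_counts.get(show["seller_id"], 0)
        score = show["viewers"] + follower_weight * followers
        scored.append((score, show["show_id"]))
    # Highest score first; ties are broken by show_id for determinism.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [show_id for _, show_id in scored]


ranked = rank_shows(shows, follower_counts)
```

Because the signal lives in its own dataset, trying it out only means passing a different lookup table to the ranking function; the show records themselves are never rewritten.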

Rockset’s fully-managed SaaS platform is mature and a first mover in the space. Take how Rockset decouples storage from compute. This gives Rockset instant, automatic scalability to handle our growing, albeit spiky, traffic (such as when a popular product or streamer comes online). Upserting data is also a breeze thanks to Rockset’s mutable architecture and Write API, which also makes inserts, updates and deletes simple.
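For readers less familiar with the term, upsert semantics can be sketched with a toy in-memory store. This is only an illustration of insert-or-update behavior on a mutable collection; the `Collection` class, its method names, and the sample listings are hypothetical and are not Rockset's Write API.

```python
from typing import Dict, Optional


class Collection:
    """Toy mutable document store keyed by a document id (hypothetical)."""

    def __init__(self) -> None:
        self._docs: Dict[str, dict] = {}

    def upsert(self, doc_id: str, fields: dict) -> None:
        # Insert the document if it is new; otherwise merge the new
        # fields into the existing document in place.
        self._docs.setdefault(doc_id, {}).update(fields)

    def delete(self, doc_id: str) -> None:
        # Deleting a missing document is a no-op.
        self._docs.pop(doc_id, None)

    def get(self, doc_id: str) -> Optional[dict]:
        return self._docs.get(doc_id)


listings = Collection()
listings.upsert("card-1", {"title": "Holo Charizard", "price": 100})
listings.upsert("card-1", {"price": 120})  # update only the price
listings.upsert("card-2", {"title": "Rookie card", "price": 40})
listings.delete("card-2")
```

The point of the sketch is that the caller never needs to know whether a document already exists: the same call covers both the insert and the update path.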

As for performance, Rockset also delivered true real-time ingestion and queries, with sub-50 millisecond end-to-end latency. That didn’t just match Elasticsearch, but did so at much lower operational effort and cost, while handling a much higher volume and variety of data, and enabling more complex analytics, all in SQL.

It’s not just the Rockset product that’s been great. The Rockset engineering team has been a fantastic partner. Whenever we had an issue, we messaged them in Slack and got an answer quickly. It’s not the typical vendor relationship: they’ve truly been an extension of our team.

A Plethora of Other Real-Time Uses

We’re so happy with Rockset that we plan to expand its usage in many areas. Two slam dunks would be community trust and safety, such as monitoring comments and chat for offensive language, where Rockset is already helping customers.

We also want to use Rockset as a mini-OLAP database to provide real-time reports and dashboards to our sellers. Rockset would serve as a real-time alternative to Snowflake, and it would be even more convenient and easy to use. For instance, new data upserted through the Rockset API is instantly reindexed and ready for queries.

We’re also seriously looking into making Rockset our real-time feature store for machine learning. Rockset would be a perfect part of a machine learning pipeline feeding real-time features such as the count of chats in the last 20 minutes in a stream. Data would stream from Kafka into a Rockset Query Lambda sharing the same logic as our batch dbt transformations on top of Snowflake. Ideally, one day we’d abstract the transformations to be used in Rockset and Snowflake dbt pipelines for composability and repeatability. Data scientists know SQL, which Rockset strongly supports.
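As a rough illustration of the kind of real-time feature mentioned above, the following sketch counts chat messages per stream over a sliding 20-minute window. It is a plain in-memory stand-in, not a Kafka consumer or a Rockset Query Lambda; the `ChatCounter` class, its method names, and the stream ids are hypothetical, and timestamps are assumed to arrive in non-decreasing order per stream.

```python
from collections import deque
from typing import Deque, Dict

WINDOW_SECONDS = 20 * 60  # the 20-minute window from the example above


class ChatCounter:
    """Sliding-window count of chat messages per stream (hypothetical)."""

    def __init__(self, window_seconds: int = WINDOW_SECONDS) -> None:
        self.window = window_seconds
        self._events: Dict[str, Deque[float]] = {}

    def record(self, stream_id: str, ts: float) -> None:
        # Assumes timestamps are non-decreasing within a stream.
        self._events.setdefault(stream_id, deque()).append(ts)

    def count(self, stream_id: str, now: float) -> int:
        events = self._events.get(stream_id)
        if not events:
            return 0
        # Evict messages that fall at or before the start of the window.
        while events and events[0] <= now - self.window:
            events.popleft()
        return len(events)


counter = ChatCounter()
for ts in (0, 600, 1100):            # seconds since the show started
    counter.record("stream-1", ts)
recent = counter.count("stream-1", now=1200)
```

In a SQL-based pipeline the same feature would typically be a filtered `COUNT(*)` over a time range; the deque here just makes the eviction logic explicit.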

Rockset is in our sweet spot now. Of course, in a perfect world that revolved around Whatnot, Rockset would add features specifically for us, such as stream processing, approximate nearest neighbors search, and auto-scaling, to name a few. We still have some use cases where real-time joins aren’t enough, forcing us to do some pre-calculations. If we could get all of that in one platform rather than having to deploy a heterogeneous stack, we would love it.

Learn more about how we build real-time signals in our user Home Feed. And visit the Whatnot career page to see the openings on our engineering team.

Embedded content: https://youtu.be/jxdEi-Ma_J8?si=iadp2XEp3NOmdDlm