Open source PyTorch runs tens of thousands of tests on multiple platforms and compilers to validate every change as our CI (Continuous Integration). We track stats on our CI system to power:

- custom infrastructure, such as dynamically sharding test jobs across different machines

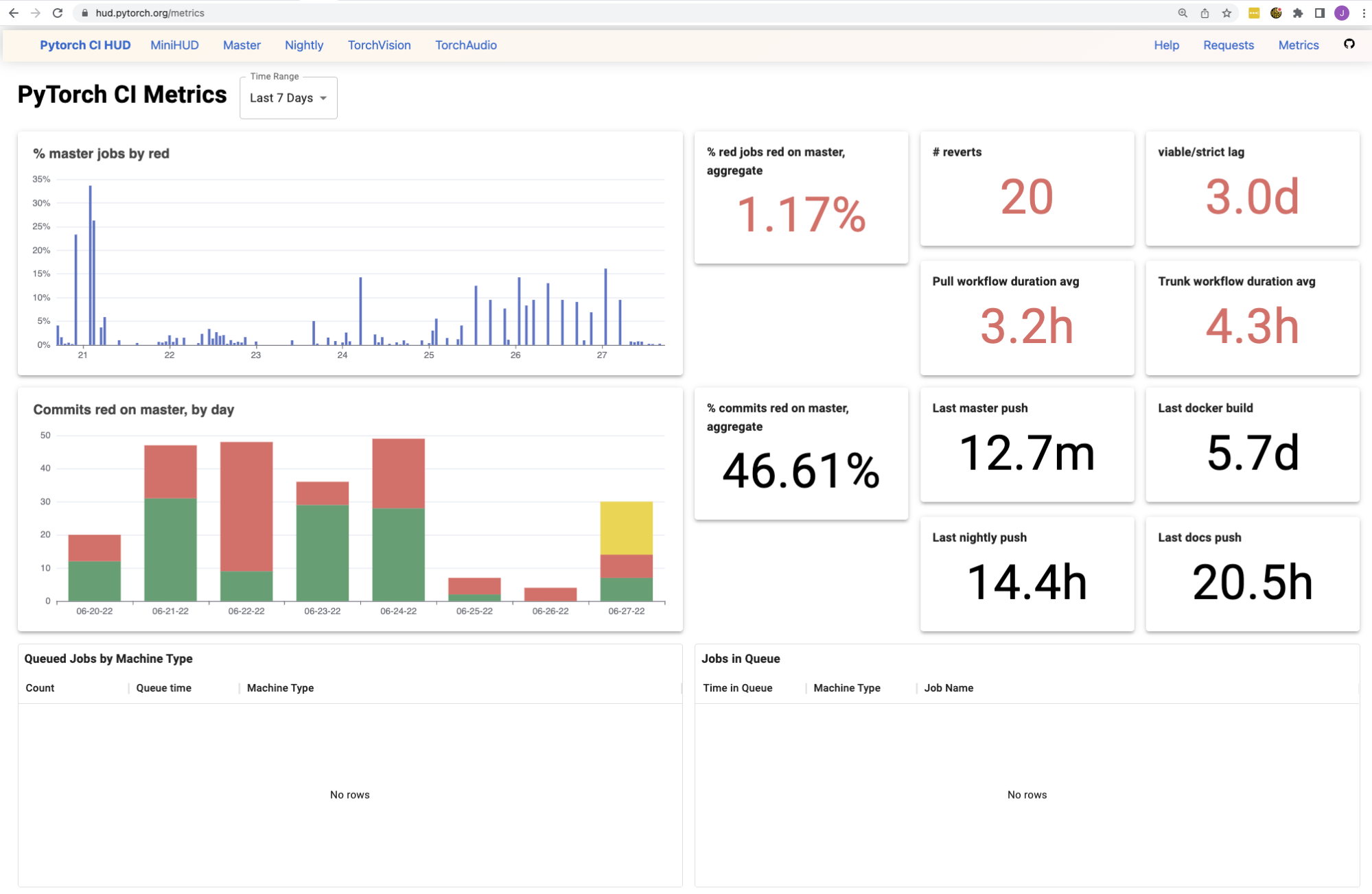

- developer-facing dashboards, see hud.pytorch.org, to track the greenness of every change

- metrics, see hud.pytorch.org/metrics, to track the health of our CI in terms of reliability and time-to-signal

Our requirements for a data backend

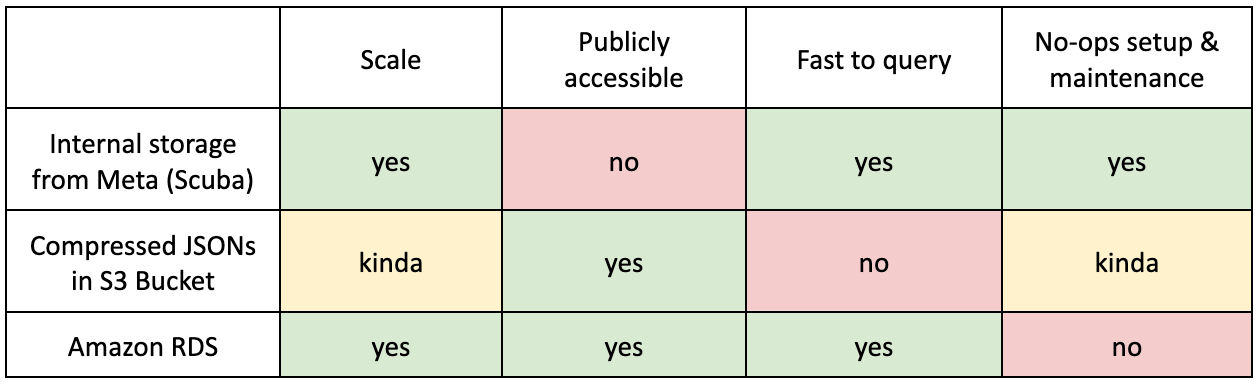

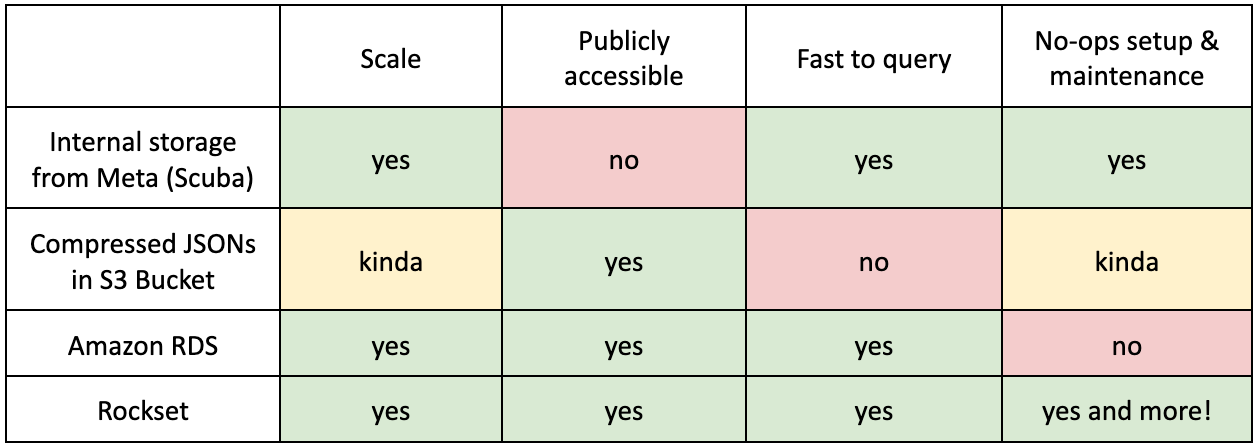

These CI stats and dashboards serve thousands of contributors, from companies such as Google, Microsoft and NVIDIA, providing them valuable information on PyTorch’s very complex test suite. Consequently, we needed a data backend with the following characteristics:

- scalable

- publicly accessible

- fast to query

- no-ops setup and maintenance

What did we use before Rockset?

Internal storage from Meta (Scuba)

TL;DR

- Pros: scalable + fast to query

- Con: not publicly accessible! We couldn’t expose our tools and dashboards to users even though the data we were hosting was not sensitive.

As many of us work at Meta, using an already-built, feature-full data backend was the solution, especially when there weren’t many PyTorch maintainers and definitely no dedicated Dev Infra team. With help from the Open Source team at Meta, we set up data pipelines for our many test cases and all the GitHub webhooks we could care about. Scuba allowed us to store whatever we pleased (since our scale is basically nothing compared to Facebook scale), interactively slice and dice the data in real time (no need to learn SQL!), and required minimal maintenance from us (since some other internal team was fighting its fires).

It sounds like a dream until you remember that PyTorch is an open source library! All the data we were collecting was not sensitive, yet we couldn’t share it with the world because it was hosted internally. Our fine-grained dashboards were visible internally only and the tools we wrote on top of this data couldn’t be externalized.

For example, back in the old days, when we were attempting to track Windows “smoke tests”, or test cases that seem more likely to fail on Windows only (and not on any other platform), we wrote an internal query to characterize the set. The idea was to run this smaller subset of tests on Windows jobs during development on pull requests, since Windows GPUs are expensive and we wanted to avoid running tests that wouldn’t give us as much signal. Since the query was internal but the results were used externally, we came up with the hacky solution of: Jane will just run the internal query every now and then and manually update the results externally. As you can imagine, it was prone to human error and inconsistencies as it was easy to make external changes (like renaming some jobs) and forget to update the internal query that only one engineer was looking at.
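To make the idea of a Windows smoke test set concrete, here is a minimal sketch of the kind of filter such a query performs. The internal query itself is not shown in this post, so the record layout (`test`, `platform`, `status`) and the function name are assumptions for illustration, and the rule is simplified to "failed on Windows and on no other platform".

```python
from collections import defaultdict


def windows_only_failures(results):
    """Return the tests whose recorded failures all happened on Windows.

    `results` is a list of dicts with hypothetical keys:
    "test", "platform", and "status".
    """
    failed_on = defaultdict(set)
    for record in results:
        if record["status"] == "failed":
            failed_on[record["test"]].add(record["platform"])
    # Keep only tests that failed on Windows and nowhere else.
    return sorted(
        test for test, platforms in failed_on.items() if platforms == {"windows"}
    )


# Made-up sample data, not real PyTorch test results.
results = [
    {"test": "test_alpha", "platform": "windows", "status": "failed"},
    {"test": "test_alpha", "platform": "linux", "status": "passed"},
    {"test": "test_beta", "platform": "windows", "status": "failed"},
    {"test": "test_beta", "platform": "linux", "status": "failed"},
    {"test": "test_gamma", "platform": "windows", "status": "passed"},
]
smoke_tests = windows_only_failures(results)
print(smoke_tests)
```

A filter like this depends on job and platform names staying consistent, which is exactly what broke when external jobs were renamed without updating the internal query.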

Compressed JSONs in an S3 bucket

TL;DR

- Pros: kind of scalable + publicly accessible

- Con: awful to query + not actually scalable!

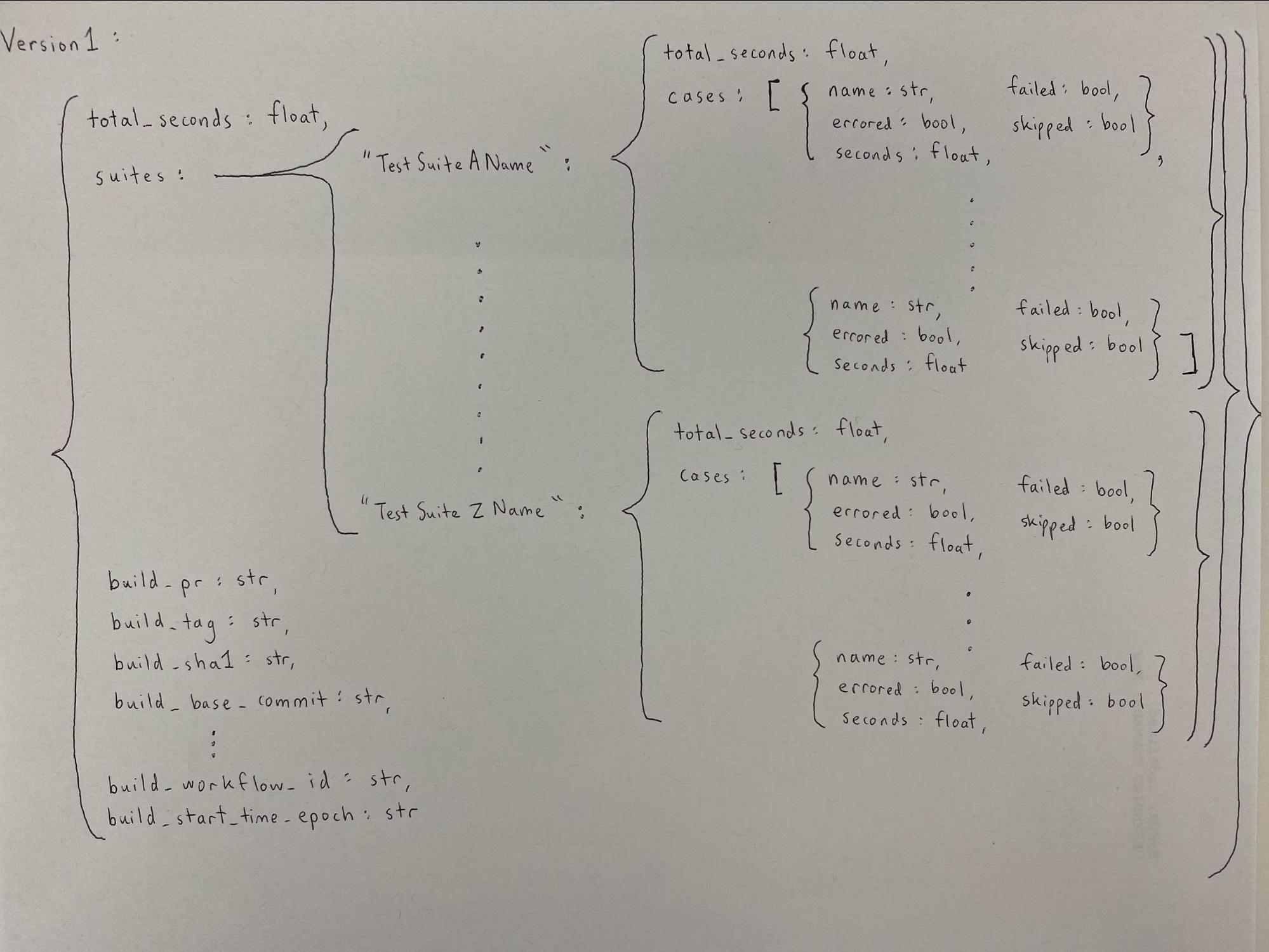

Sometime in 2020, we decided that we were going to publicly report our test times for the purpose of tracking test history, reporting test time regressions, and automatic sharding. We went with S3, as it was fairly lightweight to write to and read from, but more importantly, it was publicly accessible!

We dealt with the scalability problem early on. Since writing 10000 documents to S3 wasn’t (and still isn’t) an ideal option (it would be super slow), we aggregated test stats into a JSON, then compressed the JSON, then submitted it to S3. When we needed to read the stats, we’d go in the reverse order and potentially do different aggregations for our various tools.
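The aggregate, compress, and reverse steps can be sketched with the Python standard library. This is an illustration under assumptions, not the actual PyTorch tooling: the record fields (`suite`, `name`, `seconds`, `status`) and function names are hypothetical, and the S3 upload and download steps are left out so the example stays self-contained.

```python
import gzip
import json
from collections import defaultdict


def pack_report(test_cases):
    """Aggregate per-test records into one JSON document and gzip it."""
    report = defaultdict(dict)
    for case in test_cases:
        report[case["suite"]][case["name"]] = {
            "seconds": case["seconds"],
            "status": case["status"],
        }
    # One compressed blob replaces thousands of small documents.
    return gzip.compress(json.dumps(report, sort_keys=True).encode("utf-8"))


def unpack_report(blob):
    """Reverse order: decompress, then parse the JSON."""
    return json.loads(gzip.decompress(blob).decode("utf-8"))


def total_seconds_per_suite(report):
    """One possible re-aggregation a downstream tool might need."""
    return {
        suite: sum(case["seconds"] for case in cases.values())
        for suite, cases in report.items()
    }


# Made-up sample data.
cases = [
    {"suite": "suite_a", "name": "test_one", "seconds": 1.5, "status": "passed"},
    {"suite": "suite_a", "name": "test_two", "seconds": 2.0, "status": "failed"},
    {"suite": "suite_b", "name": "test_three", "seconds": 0.25, "status": "passed"},
]
blob = pack_report(cases)          # this is what would be uploaded
restored = unpack_report(blob)     # this is what a reader would do after download
suite_totals = total_seconds_per_suite(restored)
```

Every reader has to repeat the decompress and re-aggregate steps per blob, which is cheap for one report but adds up quickly when a question spans many of them.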

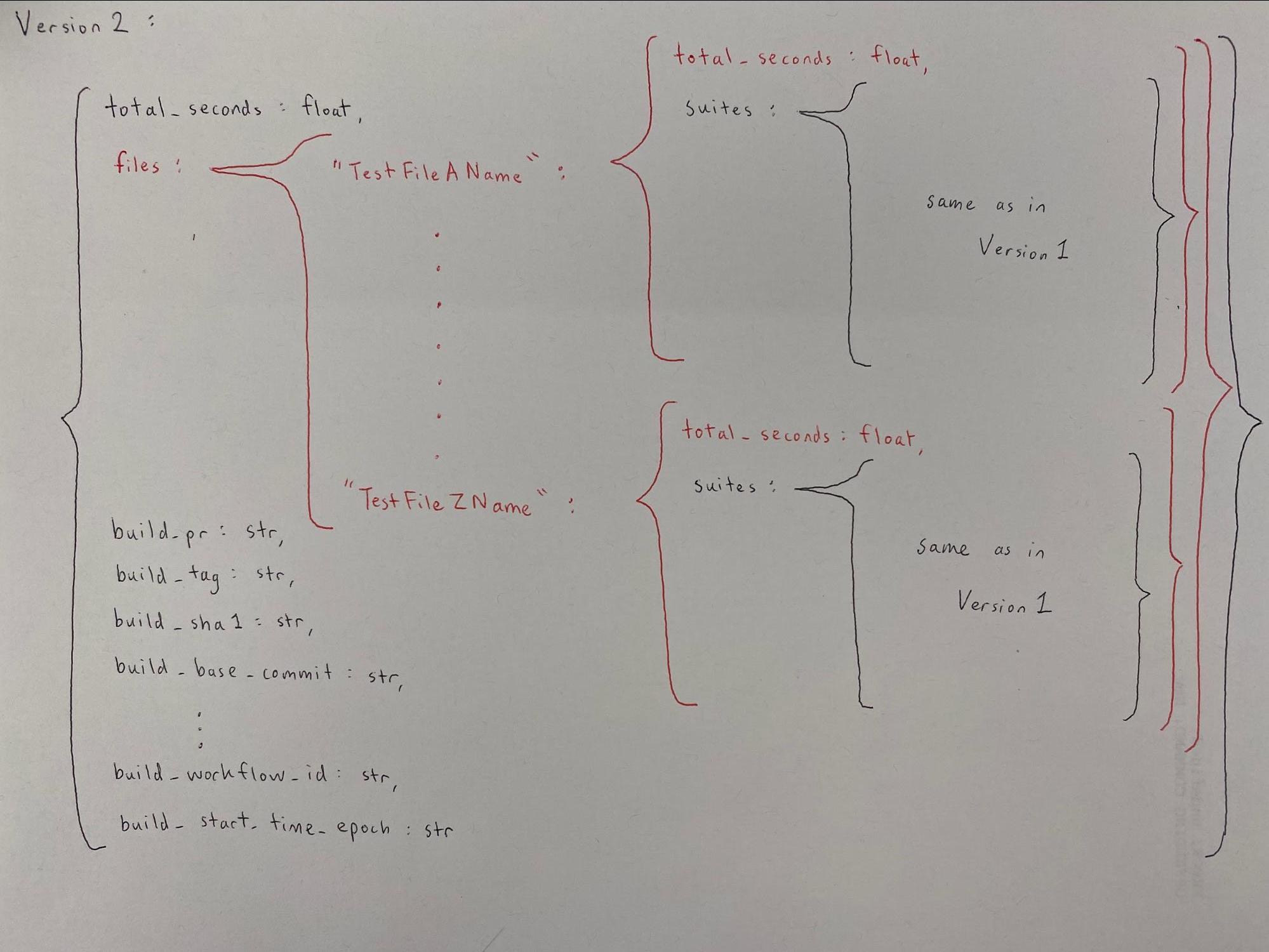

In fact, since sharding was a use case that only came up later in the layout of this data, we realized a few months after stats had already been piling up that we should have been tracking test filename information. We rewrote our entire JSON logic to accommodate sharding by test file. If you want to see how messy that was, check out the class definitions in this file.
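To show why per-file timing matters for sharding, here is a small sketch that balances test files across shards with a greedy longest-first heuristic. This is a generic technique chosen for illustration and is not necessarily the algorithm PyTorch CI uses; the file names, durations, and the `shard_by_file` function are hypothetical.

```python
import heapq


def shard_by_file(file_seconds, num_shards):
    """Assign test files to shards, longest file first, always to the
    shard with the smallest running total (greedy heuristic)."""
    heap = [(0.0, index) for index in range(num_shards)]
    heapq.heapify(heap)
    shards = [[] for _ in range(num_shards)]
    # Sort by descending duration, then by name for a deterministic result.
    ordered = sorted(file_seconds.items(), key=lambda item: (-item[1], item[0]))
    for name, seconds in ordered:
        total, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (total + seconds, index))
    return shards


# Made-up historical durations in seconds, keyed by test file.
file_seconds = {
    "test_a.py": 60.0,
    "test_b.py": 30.0,
    "test_c.py": 20.0,
    "test_d.py": 10.0,
}
shards = shard_by_file(file_seconds, num_shards=2)
print(shards)
```

With these numbers both shards end up with 60 seconds of work. Without the filename recorded next to each test time, there is no way to build the `file_seconds` input in the first place.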

I lightly chuckle today that this JSON logic has supported us the past 2 years and is still supporting our current sharding infrastructure. The chuckle is only light because even though this solution seems jank, it worked fine for the use cases we had in mind back then: sharding by file, categorizing slow tests, and a script to see test case history. It became a bigger problem when we started wanting more (surprise surprise). We wanted to try out Windows smoke tests (the same ones from the last section) and flaky test tracking, which both required more complex queries on test cases across different jobs on different commits from more than just the past day. The scalability problem now really hit us. Remember all the decompressing and de-aggregating and re-aggregating that was happening for every JSON? We’d have had to do that massaging for potentially hundreds of thousands of JSONs. Hence, instead of going further down this path, we opted for a different solution that would allow easier querying: Amazon RDS.

Amazon RDS

TL;DR

- Pros: scale, publicly accessible, fast to query

- Con: higher maintenance costs

Amazon RDS was the natural publicly available database solution as we weren’t aware of Rockset at the time. To cover our growing requirements, we put in multiple weeks of effort to set up our RDS instance and created several AWS Lambdas to support the database, silently accepting the growing maintenance cost. With RDS, we were able to start hosting public dashboards of our metrics (like test redness and flakiness) on Grafana, which was a major win!

Life With Rockset

We probably would have continued with RDS for many years and eaten up the cost of operations as a necessity, but one of our engineers (Michael) decided to “go rogue” and test out Rockset near the end of 2021. The idea of “if it ain’t broke, don’t fix it” was in the air, and most of us didn’t see immediate value in this endeavor. Michael insisted that minimizing maintenance cost was crucial, especially for a small team of engineers, and he was right! It’s usually easier to think of an additive solution, such as “let’s just build one more thing to alleviate this pain”, but it’s usually better to go with a subtractive solution if available, such as “let’s just remove the pain!”

The results of this endeavor were quickly evident: Michael was able to set up Rockset and replicate the main components of our previous dashboard in under 2 weeks! Rockset met all of our requirements AND was less of a pain to maintain!

While the first 3 requirements were consistently met by other data backend solutions, the “no-ops setup and maintenance” requirement was where Rockset won by a landslide. Aside from being a fully managed solution and meeting the requirements we were looking for in a data backend, using Rockset brought several other benefits.

- Schemaless ingest

  - We don’t have to schematize the data beforehand. Almost all our data is JSON and it’s very helpful to be able to write everything directly into Rockset and query the data as is.

  - This has increased the velocity of development. We can add new features and data easily, without having to do extra work to make everything consistent.

- Real-time data

  - We ended up moving away from S3 as our data source and now use Rockset’s native connector to sync our CI stats from DynamoDB.

Rockset has proved to meet our requirements with its ability to scale, exist as an open and accessible cloud service, and query big datasets quickly. Uploading 10 million documents every hour is now the norm, and it comes without sacrificing querying capabilities. Our metrics and dashboards have been consolidated into one HUD with one backend, and we can now remove the unnecessary complexities of RDS with AWS Lambdas and self-hosted servers. We talked about Scuba (internal to Meta) earlier and we found that Rockset is very much like Scuba but hosted on the public cloud!

What Next?

We’re excited to retire our old infrastructure and consolidate even more of our tools to use a common data backend. We’re even more excited to find out what new tools we could build with Rockset.

This guest post was authored by Jane Xu and Michael Suo, who are both software engineers at Facebook.