Overview

In this guide, you will:

- Gain a high-level understanding of vectors, embeddings, vector search, and vector databases, which will clarify the concepts we build upon.

- Learn how to use the Rockset console with OpenAI embeddings to perform vector-similarity searches, forming the backbone of our recommender engine.

- Build a dynamic web application using vanilla CSS, HTML, JavaScript, and Flask, seamlessly integrating with the Rockset API and the OpenAI API.

- Find an end-to-end Colab notebook that you can run without any dependencies on your local operating system: Recsys_workshop.

Introduction

A real-time personalized recommender system can add tremendous value to an organization by enhancing the level of user engagement and ultimately increasing user satisfaction.

[Building such a recommendation system](https://rockset.com/weblog/a-blueprint-for-a-real-world-recommendation-system/) that deals efficiently with high-dimensional data to find accurate, relevant, and similar items in a large dataset requires effective and efficient vectorization, vector indexing, vector search, and retrieval, which in turn demands robust databases with optimal vector capabilities. For this post, we will use Rockset as the database and OpenAI embedding models to vectorize the dataset.

Vector and Embedding

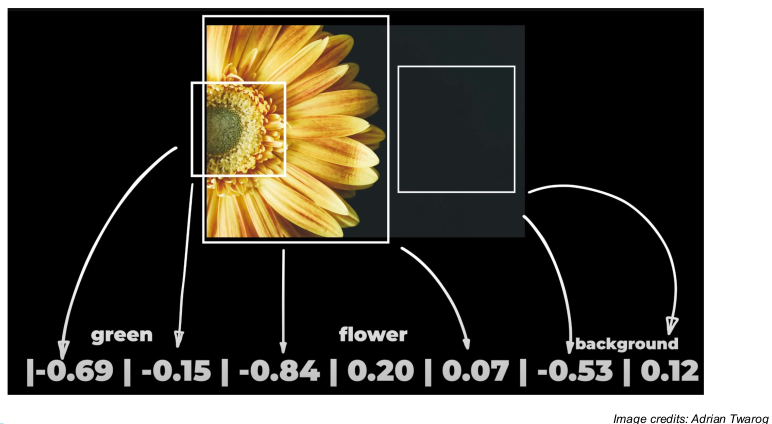

Vectors are structured and meaningful projections of data in a continuous space. They condense important attributes of an item into a numerical format while ensuring that similar data is grouped closely together in a multidimensional space. For example, in a vector space, the distance between the words “canine” and “pet” would be relatively small, reflecting their semantic similarity despite the difference in their spelling and length.
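To make "distance in a vector space" concrete, here is a minimal sketch using cosine similarity, one common way to compare embeddings. The three-dimensional vectors below are invented purely for illustration; embeddings produced by a real model have far more dimensions and different values.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical 3-dimensional "embeddings", made up for this example.
canine = [0.9, 0.8, 0.1]
pet = [0.8, 0.9, 0.2]
car = [0.1, 0.2, 0.9]

# Related words should score higher than unrelated ones.
print(cosine_similarity(canine, pet) > cosine_similarity(canine, car))  # prints True
```

A score close to 1 means the vectors point in nearly the same direction, which is how semantically similar items end up "close" to each other.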

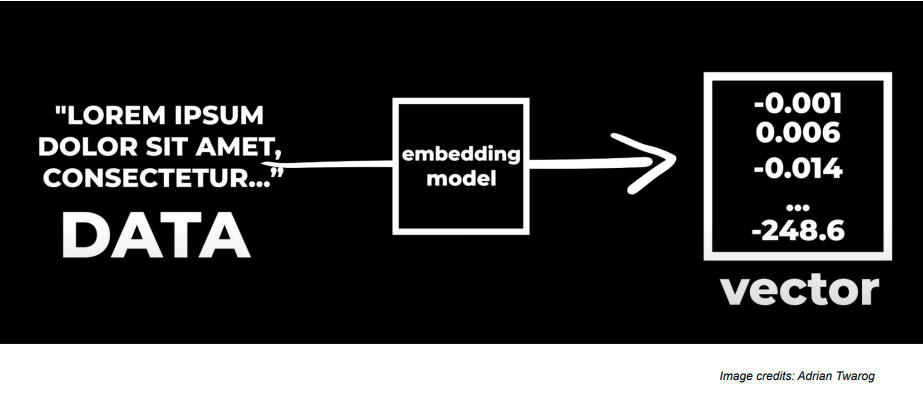

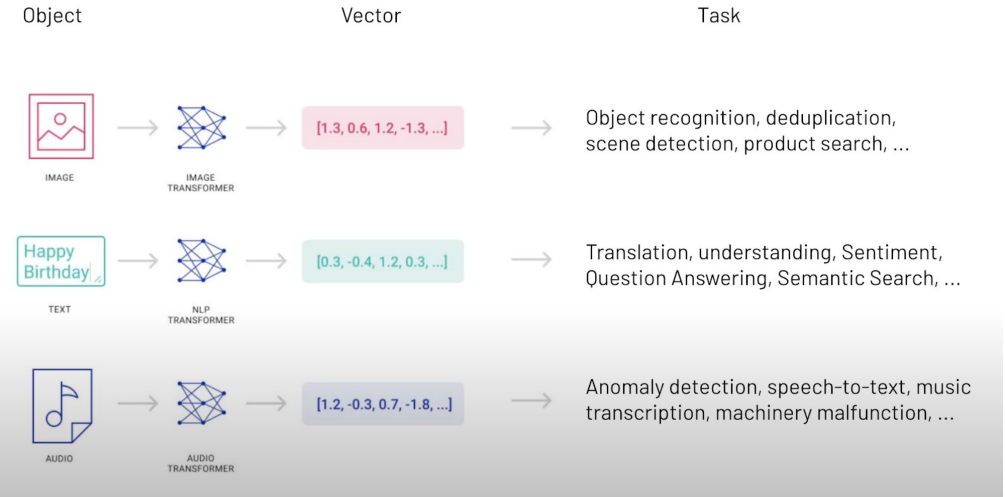

Embeddings are numerical representations of words, phrases, and other forms of data. Any kind of raw data can be processed by an AI-powered embedding model into embeddings, as shown in the picture. These embeddings can then be used to build various applications and implement a variety of use cases.

Several AI models and techniques can be used to create these embeddings, for instance Word2Vec, GloVe, and transformers like BERT and GPT. In this tutorial, we’ll be using OpenAI’s embeddings with the “text-embedding-ada-002” model.

Applications such as Google Lens, Netflix, Amazon, Google Speech-to-Text, and OpenAI Whisper use embeddings of images, text, and even audio and video clips created by an embedding model to generate equivalent vector representations. These vector embeddings preserve the semantic information, complex patterns, and other higher-dimensional relationships in the data very well.

Vector Search

Vector search is a technique that uses vectors to conduct searches and identify relevance among a pool of data. Unlike traditional keyword searches that rely on exact keyword matches, vector search captures semantic and contextual meaning as well.

Because of this attribute, vector search is capable of uncovering relationships and similarities that traditional search methods might miss. It does so by converting data into vector representations, storing them in vector databases, and using algorithms to find the most similar vectors to a query vector.
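The "find the most similar vectors to a query vector" step can be sketched as a brute-force scan. This is only an illustration under simple assumptions: the stored vectors and item names are invented, the function name `top_k` is hypothetical, and production systems typically rely on approximate indexes rather than scoring every vector.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def top_k(query, vectors, k):
    """Score every stored vector against the query and return the k best keys."""
    ranked = sorted(vectors.items(), key=lambda kv: dot(query, kv[1]), reverse=True)
    return [key for key, _ in ranked[:k]]


# Hypothetical stored vectors keyed by item name.
store = {
    "space shooter": [0.9, 0.1, 0.0],
    "racing game": [0.1, 0.9, 0.1],
    "puzzle game": [0.0, 0.2, 0.9],
}

query = [0.8, 0.2, 0.1]
print(top_k(query, store, 2))  # prints ['space shooter', 'racing game']
```

Scanning everything is fine for a handful of items; the cost grows linearly with the number of stored vectors, which is one reason dedicated vector indexes exist.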

Vector Database

Vector databases are specialized databases where data is stored in the form of vector embeddings. To cater to the complex nature of vectorized data, a specialized and optimized database is designed to handle the embeddings efficiently. To ensure that they provide the most relevant and accurate results, vector databases make use of vector search.

A production-ready vector database will solve many, many more “database” problems than “vector” problems. By no means is vector search itself an “easy” problem, but the mountain of traditional database problems that a vector database needs to solve certainly remains the “hard part.” Databases solve a host of very real and very well-studied problems, from atomicity and transactions, consistency, performance and query optimization, durability, backups, access control, and multi-tenancy to scaling, sharding, and much more. Vector databases will require answers in all of these dimensions for any product, business, or enterprise. Read more on challenges related to Scaling Vector Search here.

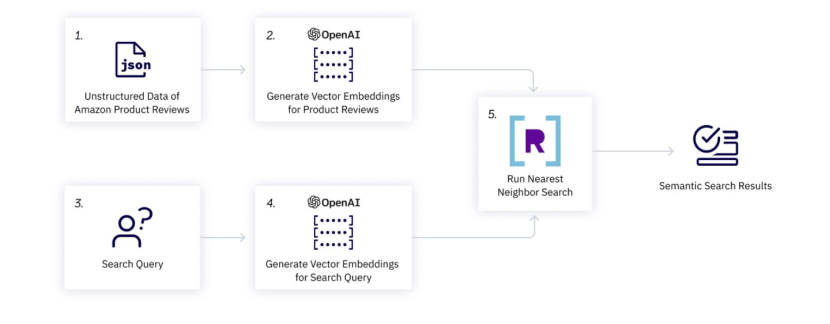

Overview of the Recommendation WebApp

The picture below shows the workflow of the application we’ll be building. We have unstructured data, i.e., game reviews in our case. We’ll generate vector embeddings for all of these reviews with the OpenAI model and store them in the database. Then we’ll use the same OpenAI model to generate a vector embedding for our search query and match it against the review embeddings using a similarity function such as nearest neighbor search, dot product, or approximate neighbor search. Finally, we will have our top 10 recommendations ready to be displayed.
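The workflow can be sketched end to end with a stand-in for the embedding model. Everything here is an assumption for illustration: `toy_embed` is a hypothetical bag-of-words function over a tiny invented vocabulary, not the OpenAI model, and the reviews are made up. The point it shows is that documents and the query must pass through the same embedding function before they are compared.

```python
import math
import re

# Hypothetical vocabulary; a real embedding model needs no such list.
VOCAB = ["space", "battle", "racing", "cars", "puzzle", "logic"]


def toy_embed(text):
    """Stand-in for an embedding model: unit-length word counts over VOCAB."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = [float(words.count(term)) for term in VOCAB]
    norm = math.sqrt(sum(c * c for c in counts))
    return [c / norm for c in counts] if norm else counts


def recommend(query, index, k=10):
    """Embed the query with the same function and rank stored reviews by dot product."""
    q = toy_embed(query)
    ranked = sorted(
        index,
        key=lambda item: sum(a * b for a, b in zip(q, item["embedding"])),
        reverse=True,
    )
    return [item["review"] for item in ranked[:k]]


reviews = [
    "Epic space battle with great ships",
    "Fast racing with licensed cars",
    "Relaxing puzzle that rewards logic",
]

# "Store" step: keep each review next to its embedding.
index = [{"review": r, "embedding": toy_embed(r)} for r in reviews]

print(recommend("space battle game", index, k=1))
# prints ['Epic space battle with great ships']
```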

Steps to build the Recommender System using Rockset and OpenAI Embedding

Let’s begin by signing up for Rockset and OpenAI, and then dive into all the steps involved within the Google Colab notebook to build our recommendation webapp:

Step 1: Sign-up on Rockset

Sign up for free and get $300 in trial credits to build recommendation systems and many more real-time applications such as Retrieval-Augmented Generation (RAG), Anomaly Detection, Facial Similarity Search, etc.

Create an API key to use in the backend code. Save it in an environment variable with the following code:

```python
import os

os.environ["ROCKSET_API_KEY"] = "XveaN8L9mUFgaOkffpv6tX6VSPHz####"
```
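When the key is read back later, it helps to fail early if it is missing. The helper below is a minimal sketch and not part of the tutorial's code: the name `require_env` and the variable `EXAMPLE_API_KEY` are hypothetical and used only for demonstration.

```python
import os


def require_env(name):
    """Return the value of an environment variable, or raise a clear error."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Environment variable {name} is not set")
    return value


# Demonstration with a placeholder variable (not a real key).
os.environ["EXAMPLE_API_KEY"] = "placeholder-value"
print(require_env("EXAMPLE_API_KEY"))  # prints placeholder-value
```

In the backend, the same call could be made with "ROCKSET_API_KEY" so that a missing key surfaces as an explicit error instead of a failed request.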

Step 2: Create a brand new Assortment and Add Information

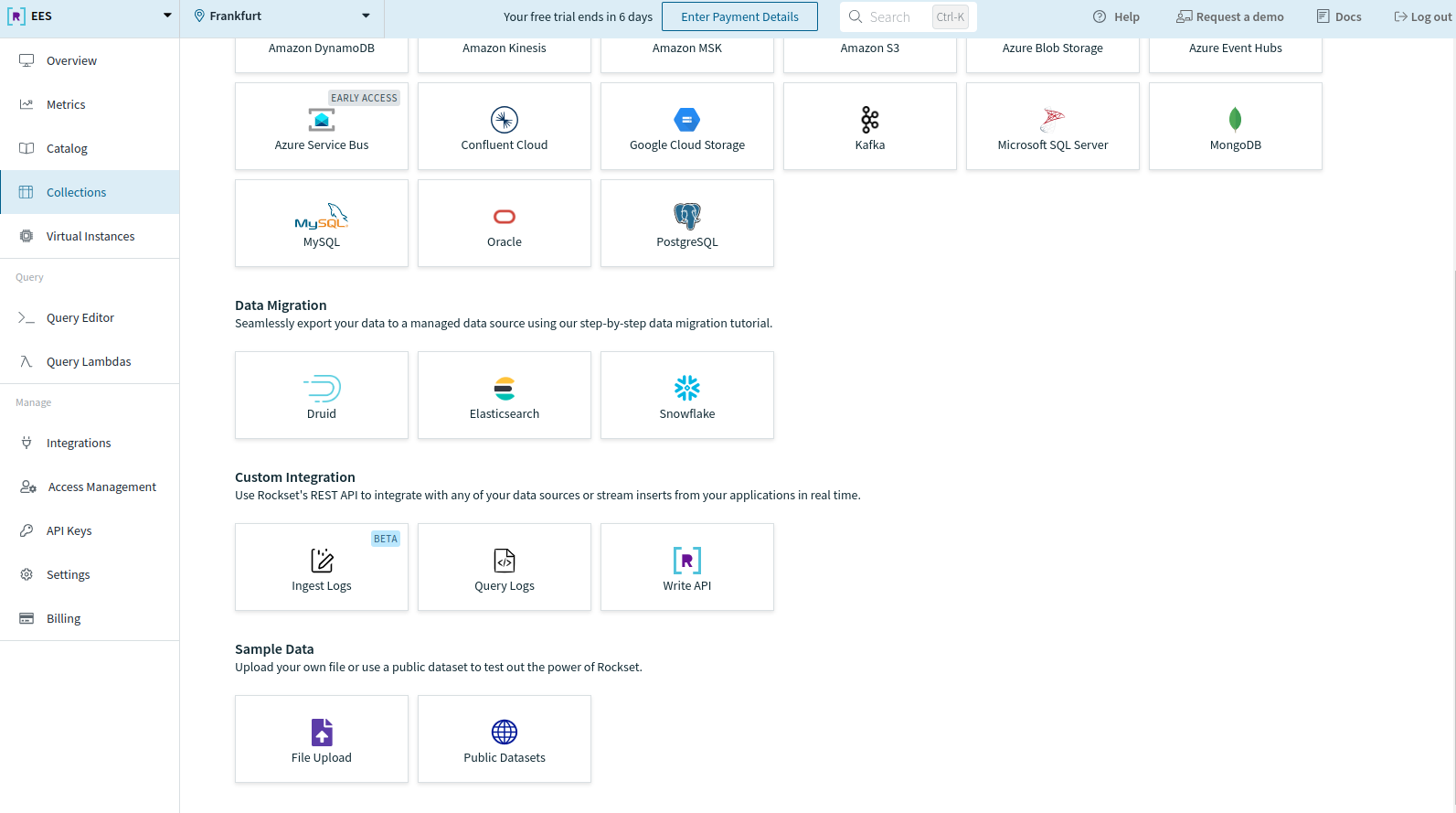

After making an account, create a brand new assortment out of your Rockset console. Scroll to the underside and select File Add below Pattern Information to add your information.

For this tutorial, we’ll be utilizing Amazon product evaluation information. Obtain this in your native machine so it may be uploaded to your assortment.

You’ll be directed to the next web page. Click on on Begin.

You should use JSON, CSV, XML, Parquet, XLS, or PDF file codecs to add the information.

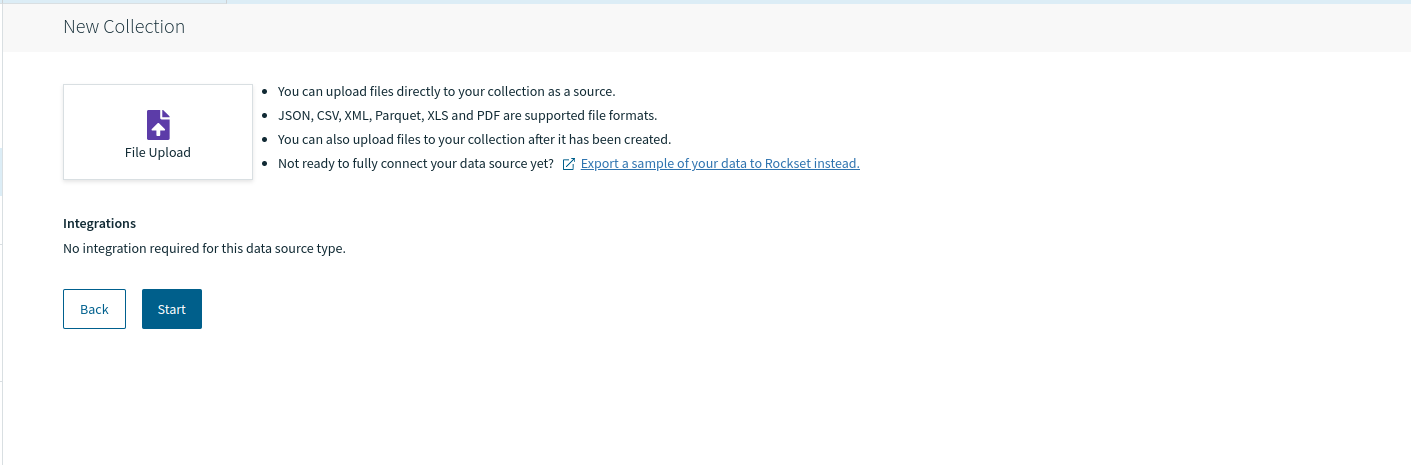

Click on the Choose file button and navigate to the file you want to upload. This will take some time. After the file is uploaded successfully, you’ll be able to review it under Source Preview.

We’ll be uploading the sample_data.json file and then clicking on Next.

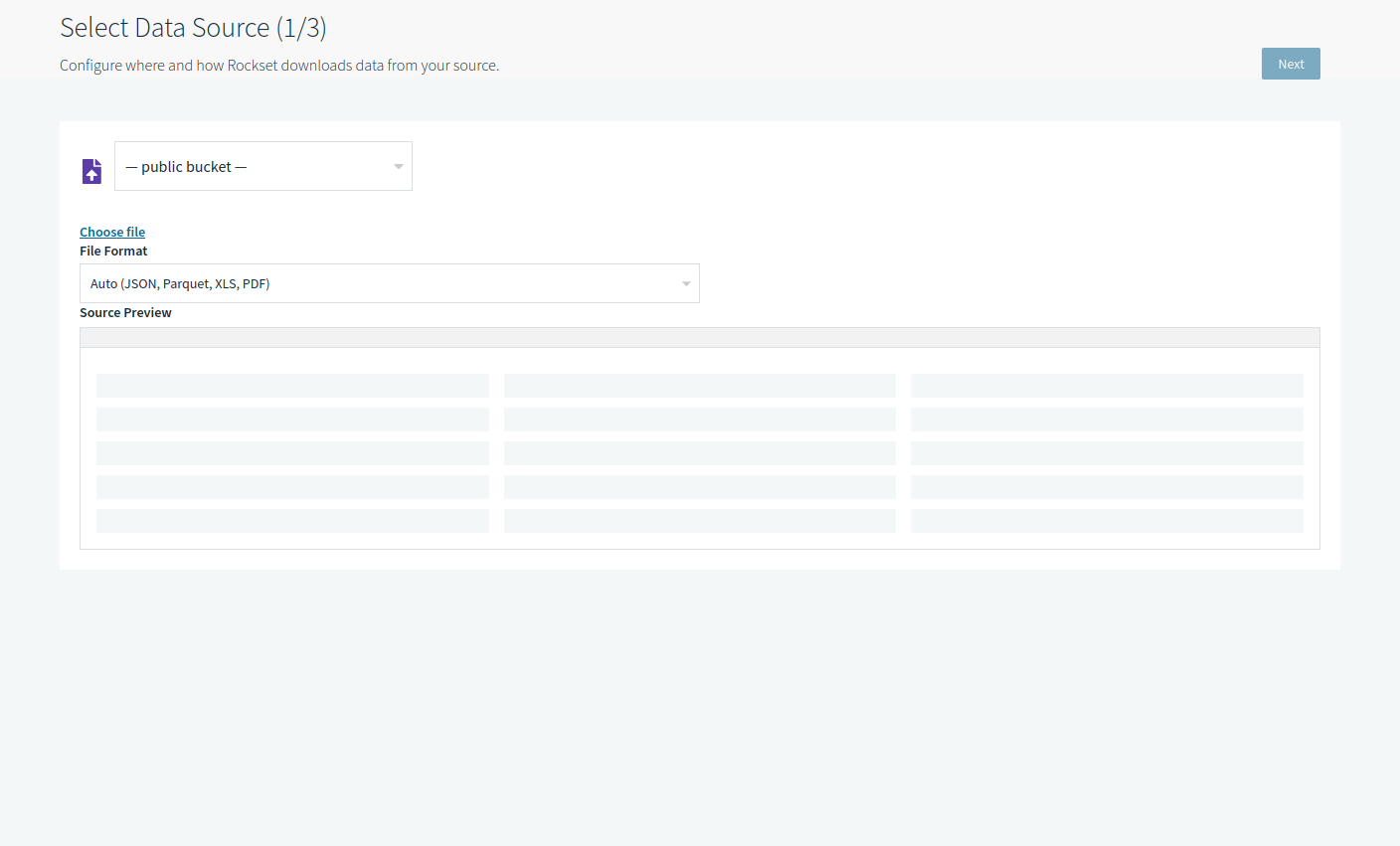

You’ll be directed to the SQL transformation screen to perform transformations or feature engineering as per your needs.

As we don’t want to apply any transformation now, we’ll move on to the next step by clicking Next.

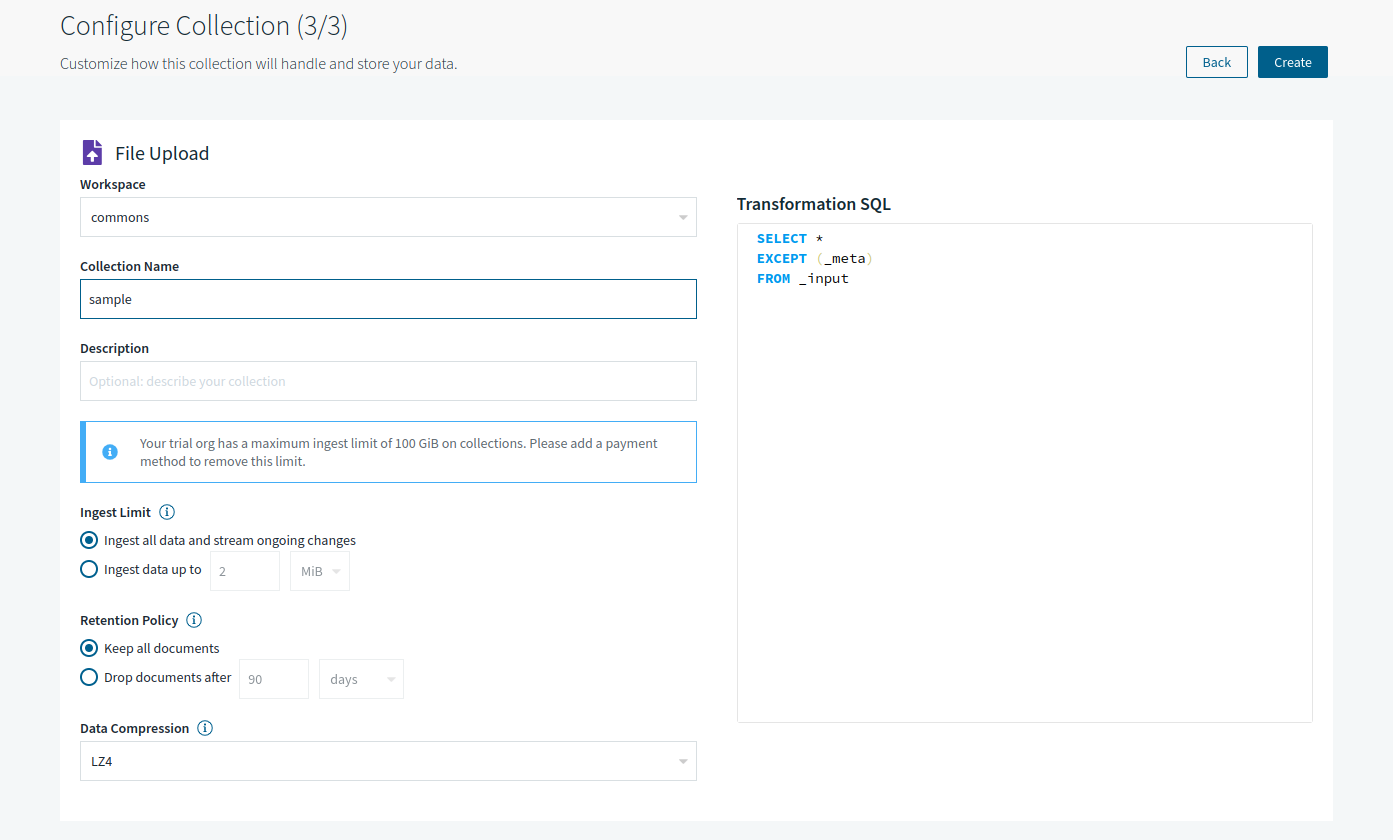

Now, the configuration screen will prompt you to choose your workspace (‘commons’ is selected by default) along with the Collection Name and several other collection settings.

We’ll name our collection “sample” and move forward with the default configurations by clicking Create.

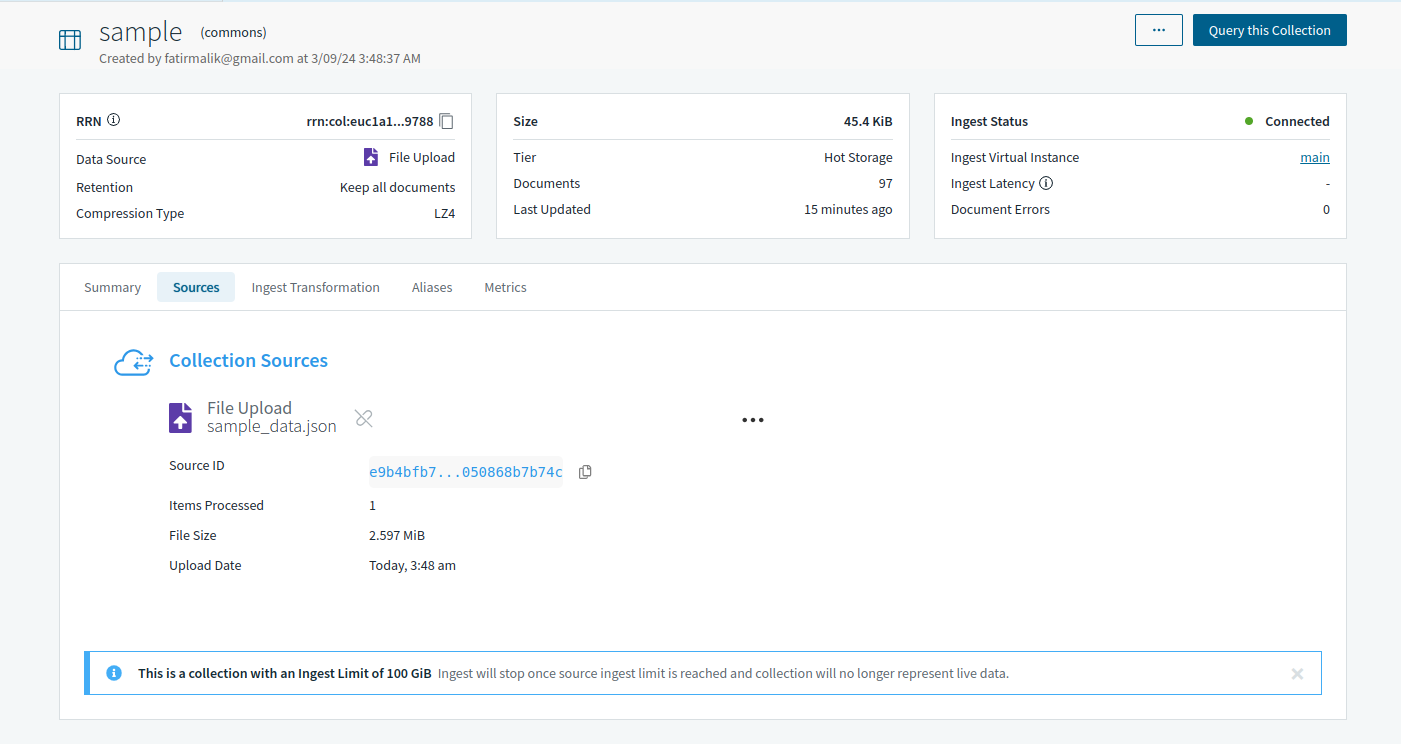

Finally, your collection will be created. However, it may take some time before the Ingest Status changes from Initializing to Connected.

Once the status is updated, you can query the collection with Rockset’s query tool via the Query this Collection button in the top-right corner, as shown in the picture below.

Step 3: Create OpenAI API Key

To convert data into embeddings, we’ll need OpenAI’s model. Therefore, to access the model in our application, we first need to sign up for OpenAI and then create an API key.

After signing up, go to API Keys and create a secret key. Don’t forget to copy and save your key, which will look similar to “sk-***”. As with Rockset’s API key, save your OpenAI key in the environment so it can easily be used throughout the code:

```python
import os

os.environ["OPENAI_API_KEY"] = "sk-####"
```

Step 4: Create a Query Lambda on Rockset

Rockset allows its users to make the most of the flexibility and comfort of a managed database platform through Query Lambdas. These parameterized SQL queries can be stored in Rockset as a separate resource and then executed on the fly with the help of dedicated REST endpoints.

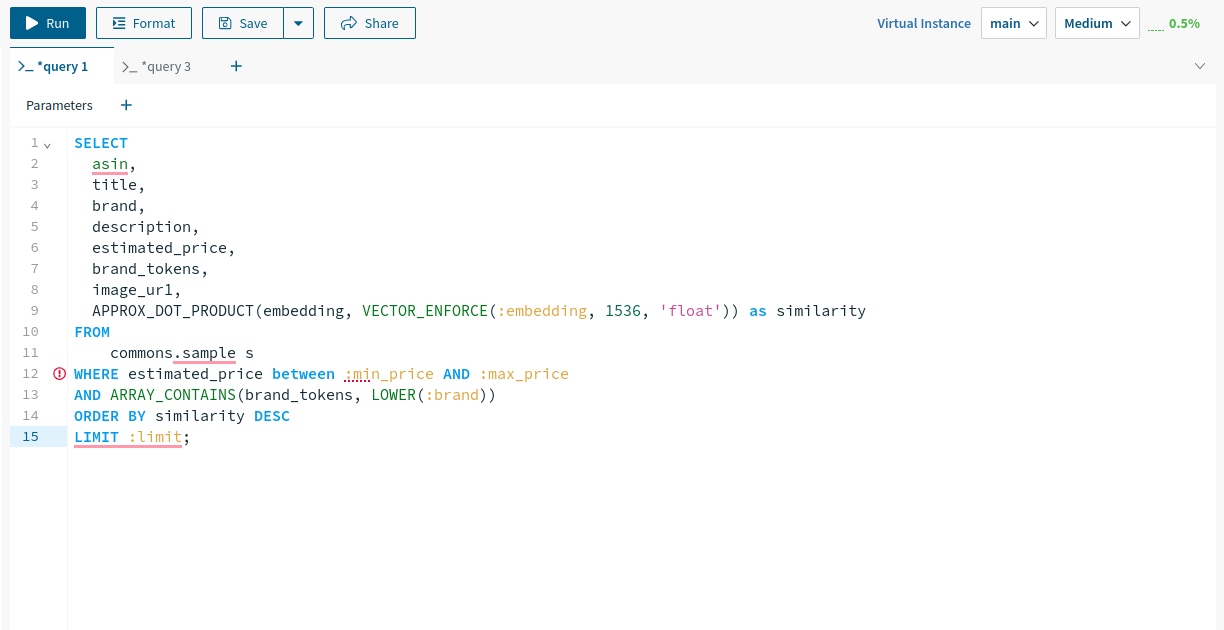

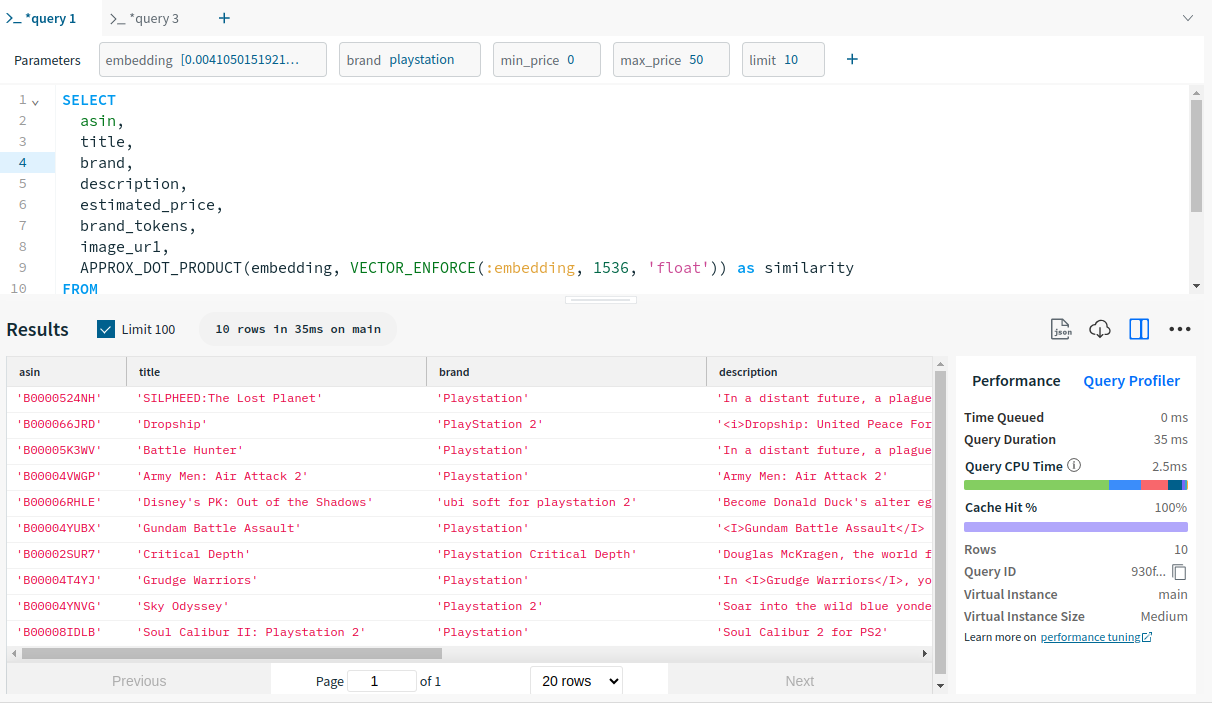

Let’s create one for our tutorial. We’ll be using the following Query Lambda with the parameters embedding, brand, min_price, max_price, and limit.

```sql
SELECT
  asin,
  title,
  brand,
  description,
  estimated_price,
  brand_tokens,
  image_ur1,
  APPROX_DOT_PRODUCT(embedding, VECTOR_ENFORCE(:embedding, 1536, 'float')) as similarity
FROM
  commons.sample s
WHERE estimated_price between :min_price AND :max_price
  AND ARRAY_CONTAINS(brand_tokens, LOWER(:brand))
ORDER BY similarity DESC
LIMIT :limit;
```

This parameterized query does the following:

- It retrieves data from the “sample” table in the “commons” schema and selects specific columns: asin, title, brand, description, estimated_price, brand_tokens, and image_ur1.

- It also computes the similarity between the provided embedding and the embedding stored in the database using the APPROX_DOT_PRODUCT function.

- The query filters results based on the estimated_price falling within the provided range and brand_tokens containing the specified brand, and then sorts the results by similarity in descending order.

- Finally, it limits the number of returned rows based on the provided limit parameter.
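The same logic can be mirrored in plain Python to see what each clause contributes. This is a sketch under stated assumptions, not how Rockset executes the query: the function name `recommend_rows` and the sample rows are invented, embeddings are shortened to two dimensions, and the dot product here is exact rather than approximate.

```python
def recommend_rows(rows, embedding, brand, min_price, max_price, limit):
    """Filter by price range and brand token, rank by dot product, keep `limit` rows."""
    scored = [
        {**row, "similarity": sum(a * b for a, b in zip(row["embedding"], embedding))}
        for row in rows
        # BETWEEN is inclusive on both ends, like the chained comparison below.
        if min_price <= row["estimated_price"] <= max_price
        and brand.lower() in row["brand_tokens"]
    ]
    scored.sort(key=lambda r: r["similarity"], reverse=True)
    return scored[:limit]


# Hypothetical rows; real embeddings in this tutorial have 1536 dimensions.
rows = [
    {"title": "Game A", "estimated_price": 20, "brand_tokens": ["nintendo"], "embedding": [0.9, 0.1]},
    {"title": "Game B", "estimated_price": 60, "brand_tokens": ["nintendo"], "embedding": [0.8, 0.3]},
    {"title": "Game C", "estimated_price": 25, "brand_tokens": ["sony"], "embedding": [1.0, 0.0]},
    {"title": "Game D", "estimated_price": 30, "brand_tokens": ["nintendo"], "embedding": [0.2, 0.9]},
]

results = recommend_rows(rows, [1.0, 0.0], "Nintendo", 10, 50, 2)
print([row["title"] for row in results])  # prints ['Game A', 'Game D']
```

Game B is dropped by the price filter and Game C by the brand filter, even though both are close to the query vector; filtering happens before ranking.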

To build this Query Lambda, query the collection made in Step 2 by clicking on Query this Collection and pasting the parameterized query above into the query editor.

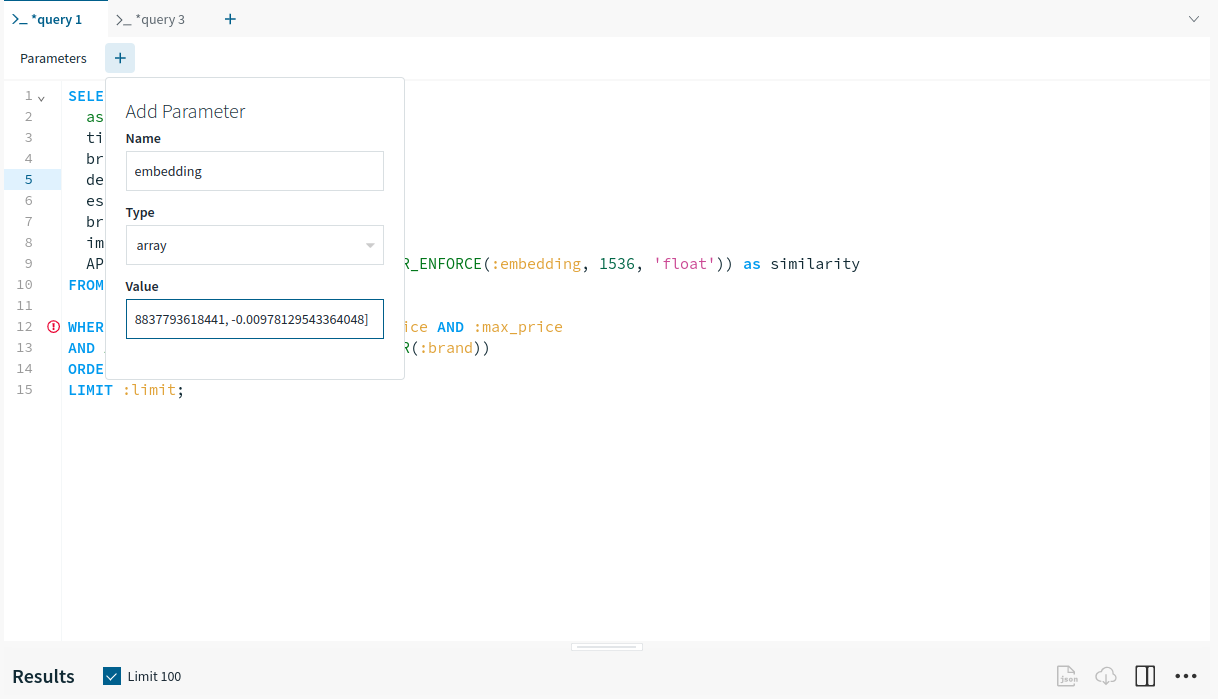

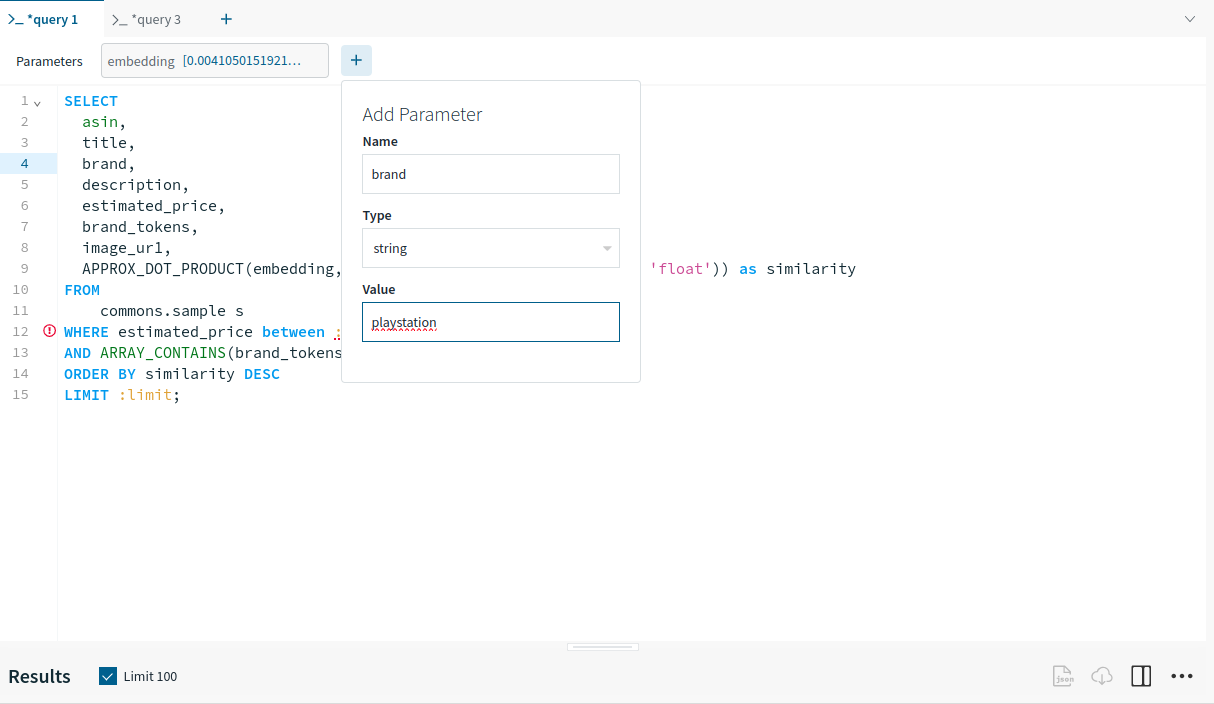

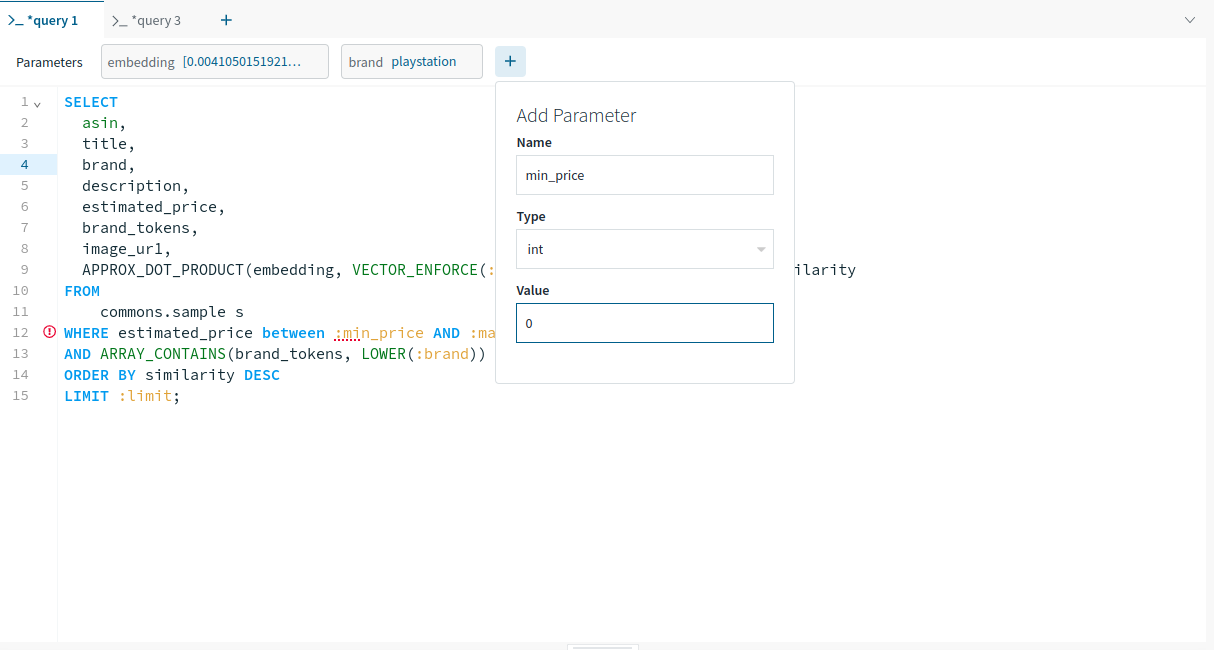

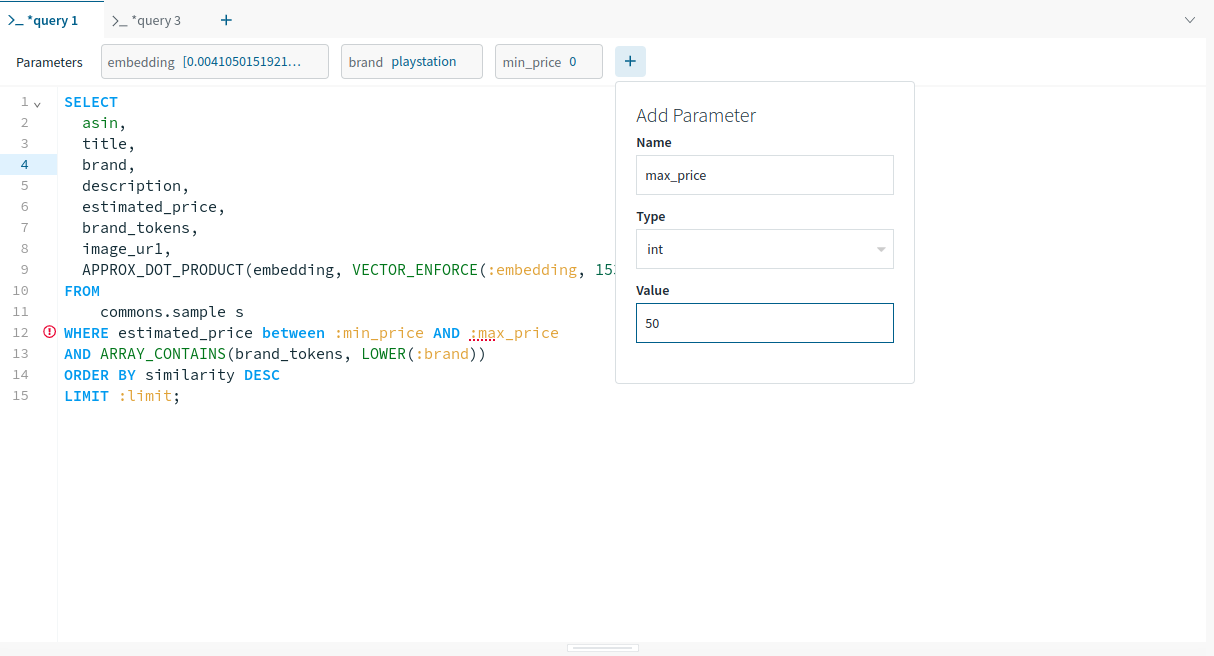

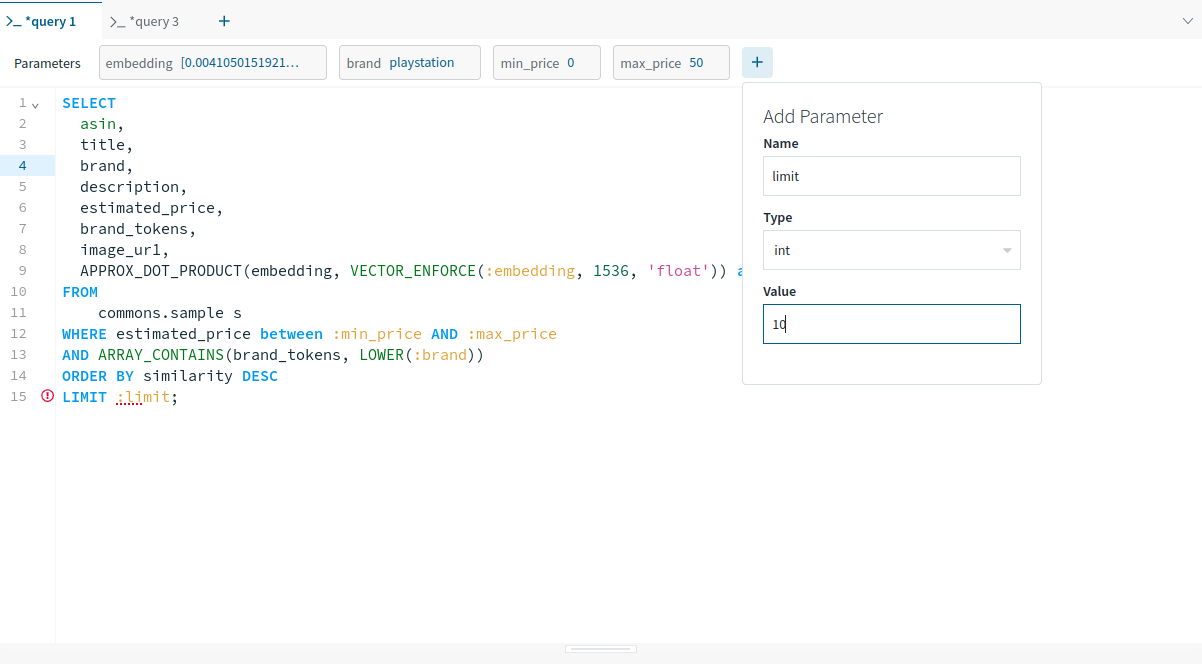

After this, add the parameters one by one to run the query before saving it as a Query Lambda.

You can use the default embedding value from here. It’s a vectorized embedding for ‘Star Wars’. For the remaining default values, consult the pictures below.

Note: Running the query with parameters before saving it as a Query Lambda isn’t mandatory. However, it’s good practice to ensure that the query executes error-free before it is used in production.

After setting up the default parameters, the query was executed successfully.

Let’s save this Query Lambda now. Click on Save in the query editor and name your Query Lambda, which is “recommend_games” in our case.

Frontend Overview

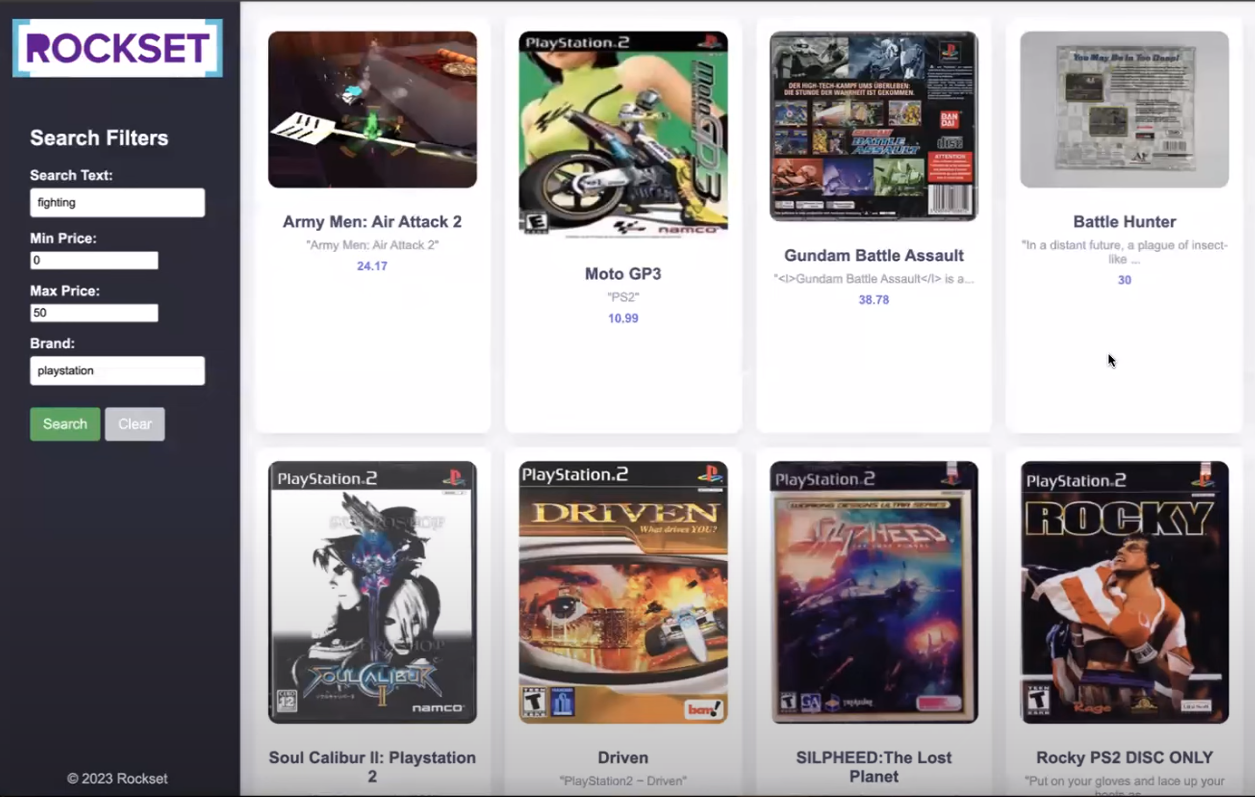

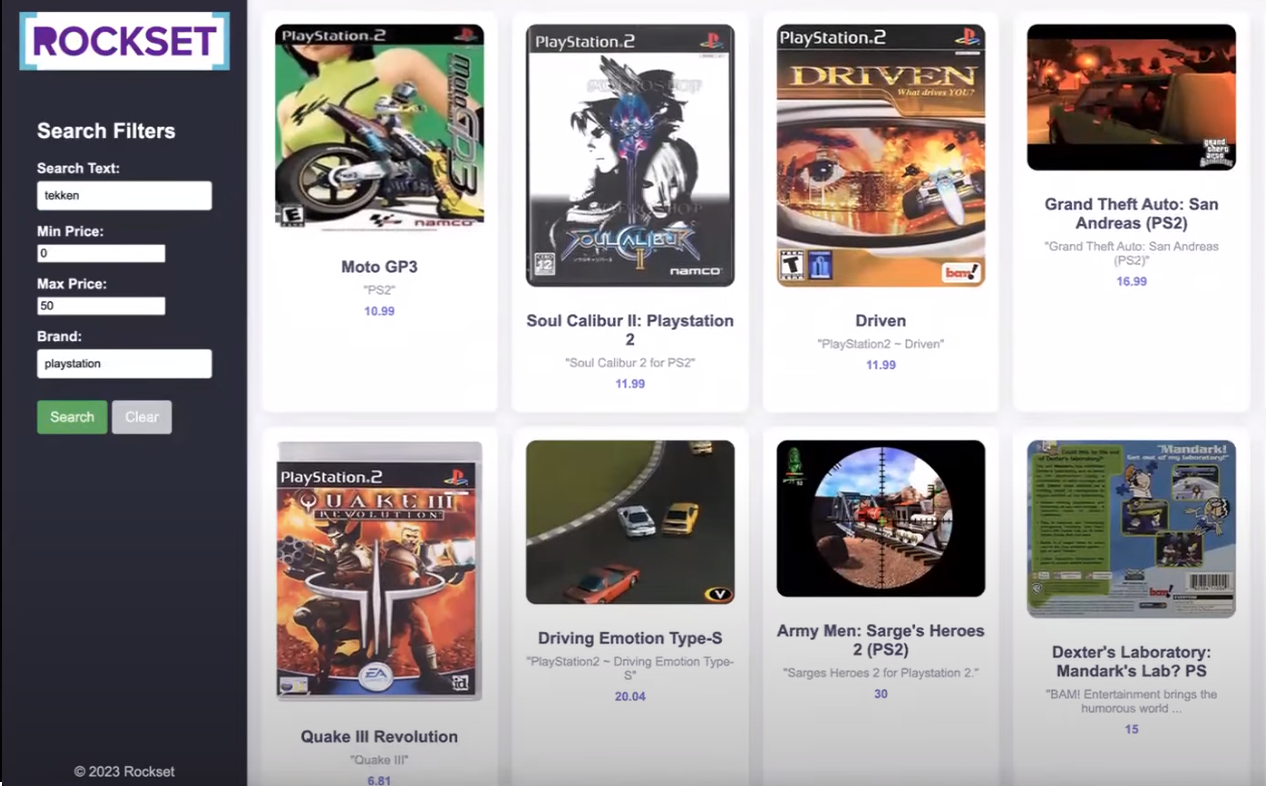

The final step in creating the web application is implementing a frontend design using vanilla HTML, CSS, and a bit of JavaScript, along with a backend implementation using Flask, a lightweight Python web framework.

The frontend page looks as shown below:

- HTML Structure: The basic structure of the webpage includes a sidebar, header, and product grid container.

- Sidebar: The sidebar contains search filters such as brands, min and max price, etc., and buttons for user interaction.

- Product Grid Container: The container populates product cards dynamically using JavaScript to display product information, i.e., image, title, description, and price.

- JavaScript Functionality: JavaScript is needed to handle interactions such as toggling full descriptions, populating the recommendations, and clearing search form inputs.

- CSS Styling: CSS is used for responsive design to ensure optimal viewing on various devices and to improve aesthetics.

Check out the full code behind this front-end here.

Backend Overview

Flask makes creating web applications in Python easier by rendering the HTML and CSS files via single-line commands. The backend code for the rest of the tutorial has already been completed for you.

Initially, the GET method will be called and the HTML file will be rendered. As there will be no recommendations at this point, only the basic structure of the page will be displayed in the browser. After this is done, we can fill in the form and submit it, thereby using the POST method to get some recommendations.

Let’s dive into the main components of the code as we did for the frontend:

1. **Flask App Setup:** A Flask application named app is defined along with a route for both GET and POST requests at the root URL (“/”).

2. **Index function:**

```python
# Imports and setup assumed by the snippets in this section
# (they are not shown in the original excerpt).
import json
import os
from datetime import datetime

from flask import Flask, render_template, request
from openai import OpenAI
from rockset import RocksetClient, Regions

app = Flask(__name__)
client = OpenAI()  # reads OPENAI_API_KEY from the environment


@app.route('/', methods=['GET', 'POST'])
def index():
    if request.method == 'POST':
        # Extract data from form fields
        inputs = get_inputs()
        search_query_embedding = get_openai_embedding(inputs, client)
        rockset_key = os.environ.get('ROCKSET_API_KEY')
        region = Regions.usw2a1
        records_list = get_rs_results(inputs, region, rockset_key, search_query_embedding)

        folder_path = "static"
        for record in records_list:
            # Extract the identifier from the URL
            identifier = record["image_url"].split('/')[-1].split('_')[0]
            file_found = None
            for file in os.listdir(folder_path):
                if file.startswith(identifier):
                    file_found = file
                    break
            if file_found:
                # Overwrite record["image_url"] with the path to the local file
                record["image_url"] = file_found
                record["description"] = json.dumps(record["description"])
                # print(f"Matched file: {file_found}")
            else:
                print("No matching file found.")

        # Render index.html with results
        return render_template('index.html', records_list=records_list, request=request)

    # If method is GET, just render the form
    return render_template('index.html', request=request)
```
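The filename-matching step inside index() can be read in isolation. The helper below is a hedged sketch of that one step: the name `find_local_image`, the example URL, and the filenames are all hypothetical, and the tutorial's own code performs the match inline against the contents of the static folder.

```python
def find_local_image(image_url, filenames):
    """Return the first filename starting with the URL's identifier, else None."""
    # The identifier is the part of the last URL segment before the first underscore.
    identifier = image_url.split("/")[-1].split("_")[0]
    for name in filenames:
        if name.startswith(identifier):
            return name
    return None


# Hypothetical URL and local files, for illustration only.
print(find_local_image(
    "https://example.com/images/B00ABC_SL500_.jpg",
    ["B00XYZ.jpg", "B00ABC.jpg"],
))  # prints B00ABC.jpg
```

Returning None when nothing matches corresponds to the branch that prints "No matching file found." in the route.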

3. **Data Processing Functions:**

- get_inputs(): Extracts form data from the request.

```python
def get_inputs():
    search_query = request.form.get('search_query')
    min_price = request.form.get('min_price')
    max_price = request.form.get('max_price')
    brand = request.form.get('brand')
    # limit = request.form.get('limit')
    return {
        "search_query": search_query,
        "min_price": min_price,
        "max_price": max_price,
        "brand": brand,
        # "limit": limit
    }
```

- get_openai_embedding(): Uses OpenAI to get embeddings for search queries.

```python
def get_openai_embedding(inputs, client):
    # openai.organization = org
    # openai.api_key = api_key
    openai_start = (datetime.now())
    response = client.embeddings.create(
        input=inputs["search_query"],
        model="text-embedding-ada-002"
    )
    search_query_embedding = response.data[0].embedding
    openai_end = (datetime.now())
    elapsed_time = openai_end - openai_start
    return search_query_embedding
```

- get_rs_results(): Uses the Query Lambda created earlier in Rockset and returns recommendations based on user inputs and embeddings.

```python
def get_rs_results(inputs, region, rockset_key, search_query_embedding):
    print("\nRunning Rockset Queries...")

    # Create an instance of the Rockset client
    rs = RocksetClient(api_key=rockset_key, host=region)

    # Execute the Query Lambda by tag
    rockset_start = (datetime.now())
    api_response = rs.QueryLambdas.execute_query_lambda_by_tag(
        workspace="commons",
        query_lambda="recommend_games",
        tag="latest",
        parameters=[
            {
                "name": "embedding",
                "type": "array",
                "value": str(search_query_embedding)
            },
            {
                "name": "min_price",
                "type": "int",
                "value": inputs["min_price"]
            },
            {
                "name": "max_price",
                "type": "int",
                "value": inputs["max_price"]
            },
            {
                "name": "brand",
                "type": "string",
                "value": inputs["brand"]
            }
            # {
            #     "name": "limit",
            #     "type": "int",
            #     "value": inputs["limit"]
            # }
        ]
    )
    rockset_end = (datetime.now())
    elapsed_time = rockset_end - rockset_start

    # Keep only the fields the template needs; note the source column is image_ur1
    records_list = []
    for record in api_response["results"]:
        record_data = {
            "title": record['title'],
            "image_url": record['image_ur1'],
            "brand": record['brand'],
            "estimated_price": record['estimated_price'],
            "description": record['description']
        }
        records_list.append(record_data)

    return records_list
```

Overall, the Flask backend processes user input and interacts with external services (OpenAI and Rockset) to provide dynamic content to the frontend. It extracts form data from the frontend, generates OpenAI embeddings for text queries, and uses the Query Lambda in Rockset to find recommendations.

Now you are ready to run the Flask server and access it through your web browser. Our application is up and running. Let’s add some parameters in the bar and get some recommendations. The results will be displayed on an HTML template as shown below.

Note: The tutorial’s entire code is available on GitHub. For a quick-start online implementation, an end-to-end runnable Colab notebook is also configured.

The methodology outlined in this tutorial can serve as a foundation for various other applications beyond recommendation systems. By leveraging the same set of concepts and using embedding models and a vector database, you are now equipped to build applications such as semantic search engines, customer support chatbots, and real-time data analytics dashboards.

Stay tuned for more tutorials!

Cheers!